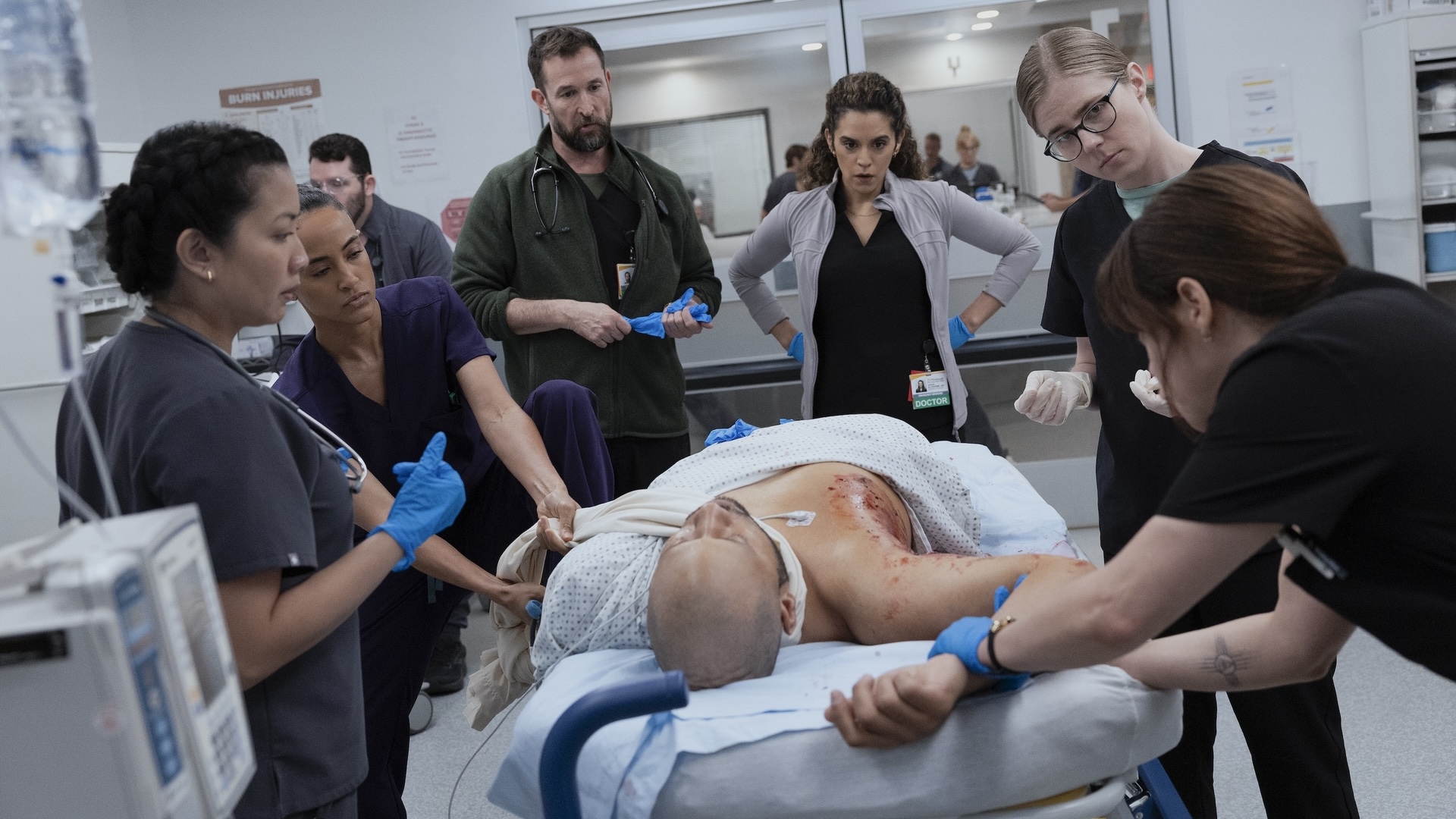

Picture this: You’re in a meeting room at your tech company, and two people are having what looks like the same conversation about the same design problem. One is talking about whether the team has the right skills to tackle it. The other is diving deep into whether the solution actually solves the user’s problem. Same room, same problem, completely different lenses.

This is the beautiful, sometimes messy reality of having both a Design Manager and a Lead Designer on the same team. And if you’re wondering how to make this work without creating confusion, overlap, or the dreaded “too many cooks” scenario, you’re asking the right question.

The traditional answer has been to draw clean lines on an org chart. The Design Manager handles people, the Lead Designer handles craft. Problem solved, right? Except clean org charts are a fantasy. In reality, both roles care deeply about team health, design quality, and shipping great work.

The magic happens when you embrace the overlap instead of fighting it—when you start thinking of your design org as a design organism.

The Anatomy of a Healthy Design Team

Here’s what I’ve learned from years of being on both sides of this equation: think of your design team as a living organism. The Design Manager tends to the mind (the psychological safety, the career growth, the team dynamics). The Lead Designer tends to the body (the craft skills, the design standards, the hands-on work that ships to users).

But just as mind and body aren’t completely separate systems, these roles overlap in important ways. You can’t have a healthy person without both working in harmony. The trick is knowing where those overlaps are and how to navigate them gracefully.

When we look at how healthy teams actually function, three critical systems emerge. Each requires both roles to work together, but with one taking primary responsibility for keeping that system strong.

The Nervous System: People & Psychology

Primary caretaker: Design Manager

Supporting role: Lead Designer

The nervous system is all about signals, feedback, and psychological safety. When this system is healthy, information flows freely, people feel safe to take risks, and the team can adapt quickly to new challenges.

The Design Manager is the primary caretaker here. They’re monitoring the team’s psychological pulse, ensuring feedback loops are healthy, and creating the conditions for people to grow. They’re hosting career conversations, managing workload, and making sure no one burns out.

But the Lead Designer plays a crucial supporting role. They’re providing sensory input about craft development needs, spotting when someone’s design skills are stagnating, and helping identify growth opportunities that the Design Manager might miss.

Design Manager tends to:

- Career conversations and growth planning

- Team psychological safety and dynamics

- Workload management and resource allocation

- Performance reviews and feedback systems

- Creating learning opportunities

Lead Designer supports by:

- Providing craft-specific feedback on team member development

- Identifying design skill gaps and growth opportunities

- Offering design mentorship and guidance

- Signaling when team members are ready for more complex challenges

The Muscular System: Craft & Execution

Primary caretaker: Lead Designer

Supporting role: Design Manager

The muscular system is about strength, coordination, and skill development. When this system is healthy, the team can execute complex design work with precision, maintain consistent quality, and adapt their craft to new challenges.

The Lead Designer is the primary caretaker here. They’re setting design standards, providing craft coaching, and ensuring that shipping work meets the quality bar. They’re the ones who can tell you if a design decision is sound or if we’re solving the right problem.

But the Design Manager plays a crucial supporting role. They’re ensuring the team has the resources and support to do their best craft work, much as an athlete needs proper nutrition and recovery time.

Lead Designer tends to:

- Definition of design standards and system usage

- Feedback on what design work meets the standard

- Experience direction for the product

- Design decisions and product-wide alignment

- Innovation and craft advancement

Design Manager supports by:

- Ensuring design standards are understood and adopted across the team

- Confirming experience direction is being followed

- Supporting practices and systems that scale without bottlenecking

- Facilitating design alignment across teams

- Providing resources and removing obstacles to great craft work

The Circulatory System: Strategy & Flow

Shared caretakers: Both Design Manager and Lead Designer

The circulatory system is about how information, decisions, and energy flow through the team. When this system is healthy, strategic direction is clear, priorities are aligned, and the team can respond quickly to new opportunities or challenges.

This is where true partnership happens. Both roles are responsible for keeping the circulation strong, but they’re bringing different perspectives to the table.

Lead Designer contributes:

- Confirmation that the product meets user needs

- Overall product quality and experience

- Strategic design initiatives

- Research-based user needs for each initiative

Design Manager contributes:

- Communication to team and stakeholders

- Stakeholder management and alignment

- Cross-functional team accountability

- Strategic business initiatives

Both collaborate on:

- Co-creation of strategy with leadership

- Team goals and prioritization approach

- Organizational structure decisions

- Success measures and frameworks

Keeping the Organism Healthy

The key to making this partnership sing is understanding that all three systems need to work together. A team with great craft skills but poor psychological safety will burn out. A team with great culture but weak craft execution will ship mediocre work. A team with both but poor strategic circulation will work hard on the wrong things.

Be Explicit About Which System You’re Tending

When you’re in a meeting about a design problem, it helps to acknowledge which system you’re primarily focused on. “I’m thinking about this from a team capacity perspective” (nervous system) or “I’m looking at this through the lens of user needs” (muscular system) gives everyone context for your input.

This isn’t about staying in your lane. It’s about being transparent about which lens you’re using, so the other person knows how best to add their perspective.

Create Healthy Feedback Loops

The most successful partnerships I’ve seen establish clear feedback loops between the systems:

Nervous system signals to muscular system: “The team is struggling with confidence in their design skills” → Lead Designer provides more craft coaching and clearer standards.

Muscular system signals to nervous system: “The team’s craft skills are advancing faster than their project complexity” → Design Manager finds more challenging growth opportunities.

Both systems signal to circulatory system: “We’re seeing patterns in team health and craft development that suggest we need to adjust our strategic priorities.”

Handle Handoffs Gracefully

The most critical moments in this partnership are when something moves from one system to another. This might be when a design standard (muscular system) needs to be rolled out across the team (nervous system), or when a strategic initiative (circulatory system) needs specific craft execution (muscular system).

Make these transitions explicit. “I’ve defined the new component standards. Can you help me think through how to get the team up to speed?” or “We’ve agreed on this strategic direction. I’m going to focus on the specific user experience approach from here.”

Stay Curious, Not Territorial

The Design Manager who never thinks about craft, or the Lead Designer who never considers team dynamics, is like a doctor who only looks at one body system. Great design leadership requires both people to care about the whole organism, even when they’re not the primary caretaker.

This means asking questions rather than making assumptions. “What do you think about the team’s craft development in this area?” or “How do you see this impacting team morale and workload?” keeps both perspectives active in every decision.

When the Organism Gets Sick

Even with clear roles, this partnership can go sideways. Here are the most common failure modes I’ve seen:

System Isolation

The Design Manager focuses only on the nervous system and ignores craft development. The Lead Designer focuses only on the muscular system and ignores team dynamics. Both people retreat to their comfort zones and stop collaborating.

The symptoms: Team members get mixed messages, work quality suffers, morale drops.

The treatment: Reconnect around shared outcomes. What are you both trying to achieve? Usually it’s great design work that ships on time from a healthy team. Figure out how both systems serve that goal.

Poor Circulation

Strategic direction is unclear, priorities keep shifting, and neither role is taking responsibility for keeping information flowing.

The symptoms: Team members are confused about priorities, work gets duplicated or dropped, deadlines are missed.

The treatment: Explicitly assign responsibility for circulation. Who’s communicating what to whom? How often? What’s the feedback loop?

Autoimmune Response

One person feels threatened by the other’s expertise. The Design Manager thinks the Lead Designer is undermining their authority. The Lead Designer thinks the Design Manager doesn’t understand craft.

The symptoms: Defensive behavior, territorial disputes, team members caught in the middle.

The treatment: Remember that you’re both caretakers of the same organism. When one system fails, the whole team suffers. When both systems are healthy, the team thrives.

The Payoff

Yes, this model requires more communication. Yes, it requires both people to be secure enough to share responsibility for team health. But the payoff is worth it: better decisions, stronger teams, and design work that’s both excellent and sustainable.

When both roles are healthy and working well together, you get the best of both worlds: deep craft expertise and strong people leadership. When one person is out sick, on vacation, or overwhelmed, the other can help maintain the team’s health. When a decision requires both the people perspective and the craft perspective, you’ve got both right there in the room.

Most importantly, the framework scales. As your team grows, you can apply the same systems thinking to new challenges. Need to launch a design system? The Lead Designer tends to the muscular system (standards and implementation), the Design Manager tends to the nervous system (team adoption and change management), and both tend to circulation (communication and stakeholder alignment).

The Bottom Line

The relationship between a Design Manager and Lead Designer isn’t about dividing territories. It’s about multiplying impact. When both roles understand they’re tending to different aspects of the same healthy organism, magic happens.

The mind and body work together. The team gets both the strategic thinking and the craft excellence they need. And most importantly, the work that ships to users benefits from both perspectives.

So the next time you’re in that meeting room, wondering why two people are talking about the same problem from different angles, remember: you’re watching shared leadership in action. And if it’s working well, both the mind and body of your design team are getting stronger.