For Star Wars fans, the name George Lucas will always evoke distant galaxies. However, more than ten years after selling Lucasfilm to Disney for $4.05 billion, Lucas has officially left the giant space opera media franchise behind. He’s unbothered. Pumped up. Content. In his groove. Thriving, as the kids might say. […]

The story on George Lucas’s post-Star Wars life, “I’ve Got a Life,” first appeared on Den of Geek.

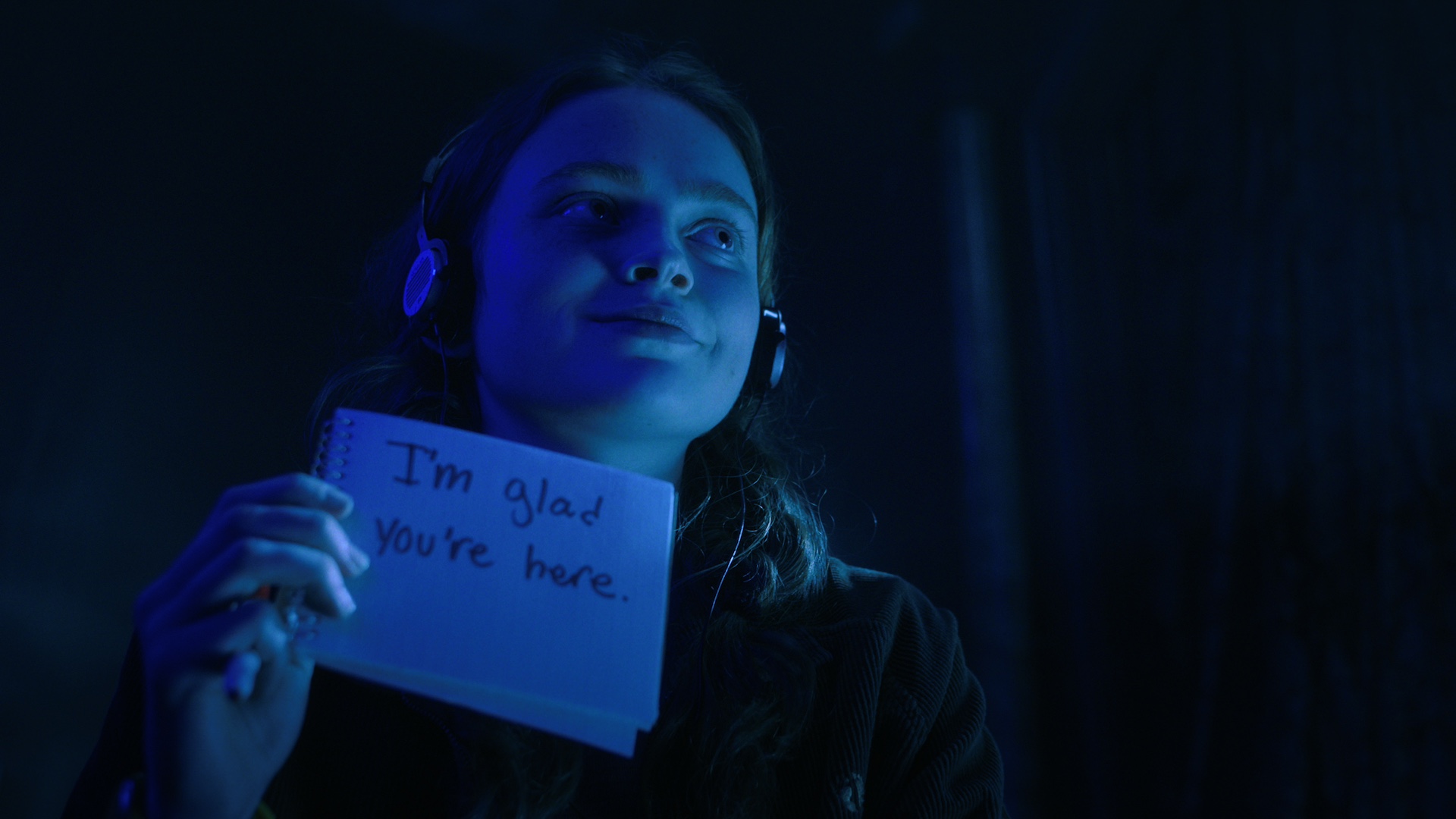

Marvel fans who have been waiting patiently for Stranger Things star Sadie Sink to appear on set have finally received their wish as filming on Spider-Man: Brand New Day continues apace. However, a first glimpse of Sink in the MCU has only fueled further fan speculation about the character she plays in the wall-crawling fourquel, with Marvel Studios and Sony Pictures yet to provide any details.

The first set photo to appear shows Sink in costume talking to director Destin Daniel Cretton (Shang-Chi and the Legend of the Ten Rings) during a break in filming. It reveals that Sink has kept her customary red hair for the part. She also appears to be wearing camouflage pants and boots, but her oversized jacket obscures the rest of her outfit.

This Is Why Your Favorite Marvel Disney+ Show Never Got a Season 2

Unless you’re a Loki fan, you have probably wondered at some point why your favorite Marvel Disney+ show didn’t receive a second season. Fortunately, Brad Winderbaum, the head of Streaming at Marvel Studios, has the answers. It is clear that the studio always intended to continue producing the first Disney+ series, including WandaVision and The Falcon and the Winter Soldier.

The post This Is Why Your Favorite Marvel Disney+ Show Never Got a Season 2 first appeared on Den of Geek.

First Set Pics of Sadie Sink in Spider-Man: Brand New Day Spark Fan Theories

Marvel fans who have been waiting patiently for Stranger Things star Sadie Sink to appear on set have finally received their wish as filming on Spider-Man: Brand New Day continues apace. However, a first glimpse of Sink in the MCU has only fueled further fan speculation about the character she plays in the wall-crawling fourquel, […].

The post First Set Pics of Sadie Sink in Spider-Man: Brand New Day Spark Fan Theories first appeared on Den of Geek.

Marvel Reportedly Won’t Let Tom Holland Be James Bond

The name is Parker, Peter Parker. And nothing else. That’s the story being spread by The Sun, at least. According to the report, an unnamed source told the paper that Tom Holland has been ruled out of contention for the role of the next James Bond because he has signed a deal with Marvel and Sony to play Spider-Man. It’s not so […]

The post Marvel Reportedly Won’t Let Tom Holland Be James Bond first appeared on Den of Geek.

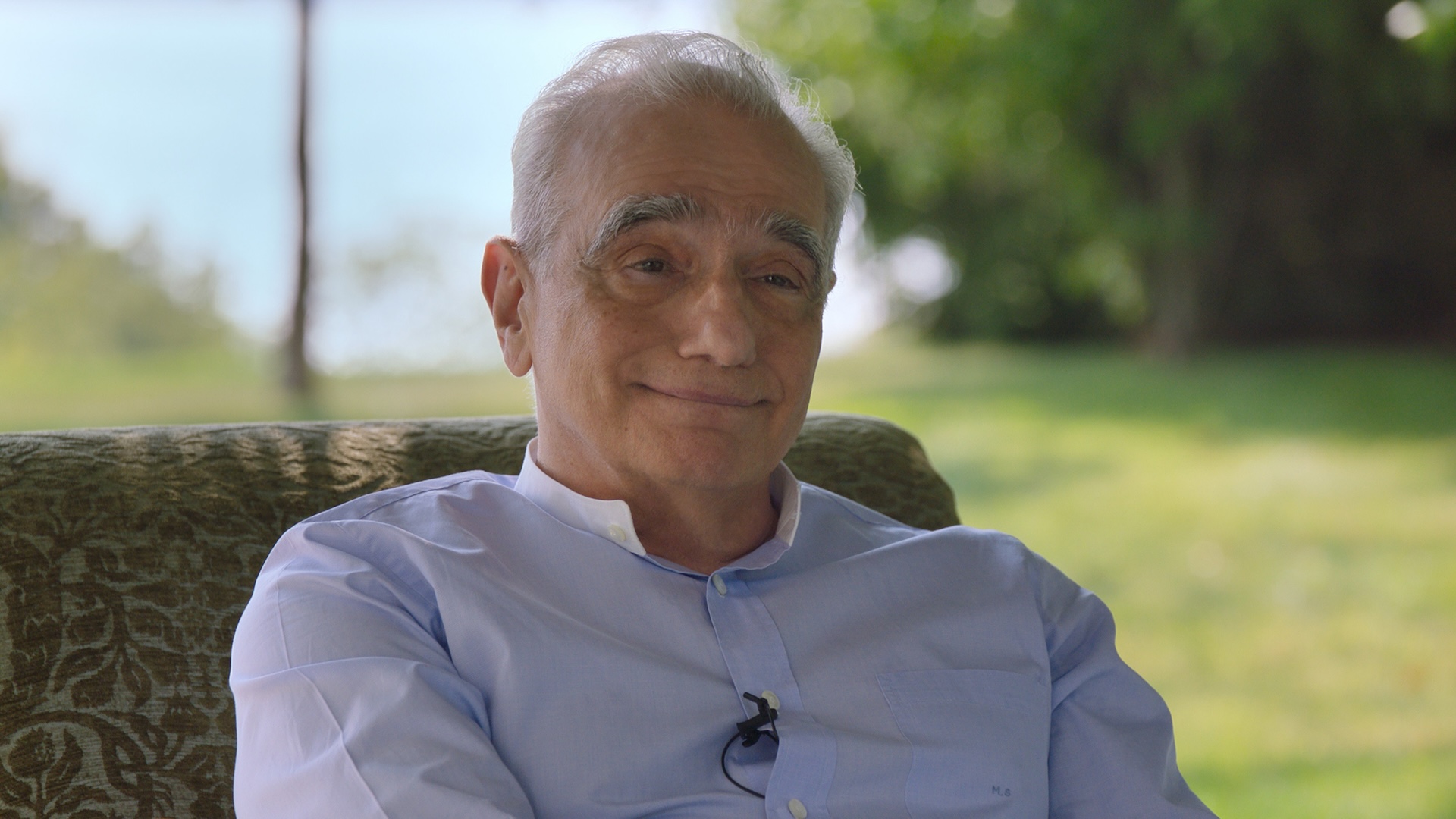

Even Martin Scorsese’s Life-Changing Bad Movie is Pretty Good

The brand-new Apple TV documentary Mr. Scorsese offers a wealth of fantastic anecdotes and fascinating insight into the storied career of the famous filmmaker. It is unsurprising, but still interesting to note, that Martin Scorsese didn’t start off making masterpieces like Taxi Driver and The Age of Innocence. Instead, he had to work his way up and go through some struggles along the way. […]

The post Even Martin Scorsese’s Life-Changing Bad Movie is Pretty Good first appeared on Den of Geek.

Scott Derrickson Reveals the One Thing He Won’t Do for Black Phone 3

Scott Derrickson has killed a lot of people. And a surprising number of them were children. Okay, no, Derrickson hasn’t really killed anyone, but he has imagined numerous murders and depicted them on screen, including the three disfigured tykes that the Grabber killed in Black Phone 2. Black Phone 2 […]

The post Scott Derrickson Reveals the One Thing He Won’t Do for Black Phone 3 first appeared on Den of Geek.

How Bruce Springsteen Stole the Title of His Hit Song From Paul Schrader

When filmmaker Paul Schrader discusses pop music these days, he’s typically praising Taylor Swift as “the light that gives meaning to our lives.” However, Schrader had the Boss on his mind in the early 1980s. And in a recent interview with Deadline, Schrader reveals how an abandoned film project starring Bruce Springsteen […]

The post How Bruce Springsteen Stole the Title of His Hit Song From Paul Schrader first appeared on Den of Geek.

Designing for the Unexpected

I’m not sure when I first heard this quote, but it has stuck with me over the years. How do you create solutions for situations you can’t imagine? Or design products that work on devices that have not yet been invented?

Flash, Photoshop, and responsive design

When I first started designing websites, my go-to software was Photoshop. I created a 960px canvas and set about making a layout that I would later drop content into. The development phase was about attaining pixel-perfect accuracy using fixed widths, fixed heights, and absolute positioning.

All of this was changed by Ethan Marcotte’s talk at An Event Apart and the subsequent article in A List Apart in 2010. I was sold on responsive design as soon as I heard about it, but I was also terrified. The pixel-perfect designs full of magic numbers that I had previously prided myself on producing were no longer good enough.

My first experience with responsive design didn’t help my fear. My first project was to take an existing fixed-width website and make it responsive. I quickly learned that you can’t just add responsiveness at the end of a project. To create fluid layouts, you need to plan throughout the design phase.

A new way to design

Making content accessible on every device has always been the goal when designing responsive or fluid websites. It relies on percentage-based layouts, which I initially achieved with native CSS and utility classes:

    .column-span-6 {
      width: 49%;
      float: left;
      margin-right: 0.5%;
      margin-left: 0.5%;
    }

    .column-span-4 {
      width: 32%;
      float: left;
      margin-right: 0.5%;
      margin-left: 0.5%;
    }

    .column-span-3 {
      width: 24%;
      float: left;
      margin-right: 0.5%;
      margin-left: 0.5%;
    }

Then I moved to Sass to re-use repeated blocks of code and return to more semantic markup:

    .logo {
      @include colSpan(6);
    }

    .search {
      @include colSpan(3);
    }

    .social-share {
      @include colSpan(3);
    }

Media queries

The next ingredient for responsive design is media queries. Without them, content would shrink to fit the available space, regardless of whether it remained readable. (The exact opposite problem arose with the introduction of a mobile-first approach.)

Media queries prevented this by allowing us to add breakpoints where the design could adapt. Like most people, I started out with three breakpoints: one for desktop, one for tablets, and one for mobile. Over the years, I added more and more for phablets, wide screens, and so on.
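As a rough illustration of the three-breakpoint setup described above, here is a minimal mobile-first sketch. The `.card` class and the 600px and 960px values are assumptions chosen for the example, not values from any particular project.

```css
/* Hypothetical class name and breakpoint values, for illustration only. */
.card {
  width: 100%; /* mobile: one column by default */
}

@media (min-width: 600px) {
  .card {
    width: 49%; /* tablet: two columns */
  }
}

@media (min-width: 960px) {
  .card {
    width: 32%; /* desktop: three columns */
  }
}
```

Every extra device class means another block like these, which is how the list of breakpoints tends to grow over time.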

For years, I happily worked this way and improved both my design and front-end skills in the process. The only problem I encountered was making changes to content, since with our Sass grid system in place, there was no way for the site owners to add content without amending the markup—something a small business owner might struggle with. This is because each row in the grid was defined using a div as a container. Adding content meant creating new row markup, which requires a level of HTML knowledge.

Row markup was a staple of early responsive design, present in all the widely used frameworks like Bootstrap and Skeleton.

Another problem arose as I moved from a design agency building websites for small- to medium-sized businesses, to larger in-house teams where I worked across a suite of related sites. In those roles, I began to work much more with reusable components.

Our reliance on media queries resulted in components that were tied to common viewport sizes. If the goal of component libraries is reuse, then this is a real problem because you can only use these components if the devices you’re designing for correspond to the viewport sizes used in the pattern library, in the process never really hitting that “devices that don’t yet exist” goal.

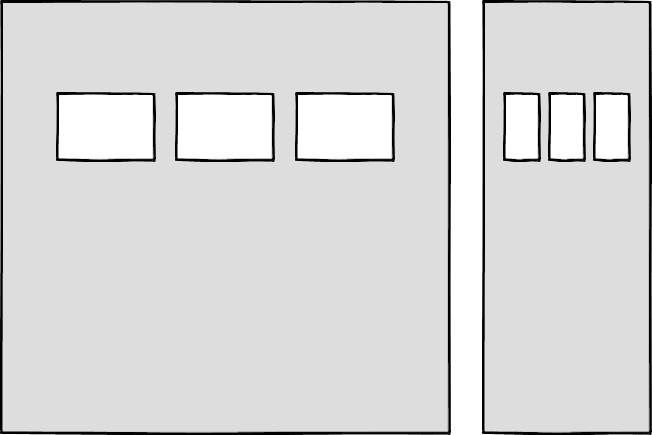

Then there’s the problem of space. Media queries allow components to adapt based on the viewport size, but what if I put a component into a sidebar, like in the figure below?

Container queries: our savior or a false dawn?

Container queries have long been touted as an improvement upon media queries, but at the time of writing are unsupported in most browsers. JavaScript workarounds exist, but they can create dependency and compatibility issues. The basic theory underlying container queries is that elements should change based on the size of their parent container and not the viewport width, as seen in the following illustrations.

One of the biggest arguments in favor of container queries is that they help us create components or design patterns that are truly reusable because they can be picked up and placed anywhere in a layout. This is an important step in moving toward a form of component-based design that works at any size on any device.

In other words, responsive elements are meant to replace responsive layouts.

Container queries will help us move from designing pages that respond to the browser or device size to designing components that can be placed in a sidebar or in the main content, and respond accordingly.
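To make the idea concrete, here is a minimal sketch using the `container-type` property and `@container` rule from the CSS container queries specification. The class names and the 400px threshold are hypothetical, and browser support should be checked before relying on it.

```css
/* Hypothetical class names. The wrapper is declared as a query container. */
.card-wrapper {
  container-type: inline-size;
}

.card {
  display: block; /* stacked layout by default */
}

/* Applies when the wrapper, not the viewport, is at least 400px wide. */
@container (min-width: 400px) {
  .card {
    display: grid;
    grid-template-columns: 150px 1fr;
    gap: 10px;
  }
}
```

The same `.card` could then sit in a narrow sidebar or a wide main column and pick the matching layout in each place. Note that the 400px value is still a pre-defined breakpoint.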

We still use layout to determine when a design needs to adapt, which is my concern. This approach will always be restrictive, as we will still need pre-defined breakpoints. For this reason, my main question with container queries is, How would we decide when to change the CSS used by a component?

A component library that is disconnected from context and real content is probably not the best place to make that choice.

As the diagrams below illustrate, we can use container queries to create designs for specific container widths, but what if I want to change the design based on the image size or ratio?

In this example, the dimensions of the container are not what should dictate the design, rather, the image is.

Without reliable cross-browser support, it’s difficult to say for certain whether container queries will succeed. Responsive component libraries would definitely evolve how we design and would improve the possibilities for reuse and design at scale. However, we might always need to modify these elements to fit our content.

CSS is changing

Whilst the container query debate rumbles on, there have been numerous advances in CSS that change the way we think about design. The days of fixed-width elements measured in pixels and floated div elements used to cobble layouts together are long gone, consigned to history along with table layouts. Flexbox and CSS Grid have revolutionized layouts for the web. We can now create elements that wrap onto new rows when they run out of space, not when the device changes.

    .wrapper {
      display: grid;
      grid-template-columns: repeat(auto-fit, 450px);
      gap: 10px;
    }

The repeat() function paired with auto-fit or auto-fill allows us to specify how much space each column should use while leaving it up to the browser to decide when to spill the columns onto a new line. Similar things can be achieved with Flexbox, as elements can wrap over multiple rows and “flex” to fill available space.

    .wrapper {
      display: flex;
      flex-wrap: wrap;
      justify-content: space-between;
    }

    .child {
      flex-basis: 32%;
      margin-bottom: 20px;
    }

The biggest benefit of all of this is that you don’t need to wrap elements in container rows. Without rows, content isn’t tied to page markup in quite the same way, allowing for removals or additions of content without additional development.

This is a big step forward when it comes to creating designs that allow for evolving content, but the real game changer for flexible designs is CSS Subgrid.

Remember the days of crafting perfectly aligned interfaces, only for the customer to add an unbelievably long header almost as soon as they’re given CMS access, like the illustration below?

Subgrid allows elements to respond to adjustments in their own content and in the content of sibling elements, helping us create designs more resilient to change.

    .wrapper {
      display: grid;
      grid-template-columns: repeat(auto-fit, minmax(150px, 1fr));
      grid-template-rows: auto 1fr auto;
      gap: 10px;
    }

    .sub-grid {
      display: grid;
      grid-row: span 3;
      grid-template-rows: subgrid; /* sets rows to parent grid */
    }

CSS Grid allows us to separate layout and content, thereby enabling flexible designs. Meanwhile, Subgrid allows us to create designs that can adapt in order to suit morphing content. Subgrid is only supported by Firefox at the time of writing, but the above code can be implemented behind an @supports feature query.
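One way the @supports feature query might be applied is sketched below. The fallback row sizes are an assumption that simply mirrors the parent grid; the selectors are the ones used in the example above.

```css
/* Fallback: each card sizes its own three rows independently. */
.sub-grid {
  display: grid;
  grid-row: span 3;
  grid-template-rows: auto 1fr auto;
}

/* Enhancement: applied only where the browser understands subgrid. */
@supports (grid-template-rows: subgrid) {
  .sub-grid {
    grid-template-rows: subgrid;
  }
}
```

Browsers without Subgrid keep a workable layout, while supporting browsers align rows across sibling cards.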

Intrinsic layouts

I’d be remiss not to mention intrinsic layouts, a term used by Jen Simmons to describe a mix of contemporary and traditional CSS features used to create layouts that respond to available space.

Responsive layouts have flexible columns using percentages. Intrinsic layouts, on the other hand, use the fr unit to create flexible columns that won’t ever shrink so much that they render the content illegible.

fr units is a statement that says, “I want you to distribute the extra space in this way, but never make it smaller than the content that is inside.”

Jen Simmons, “Designing Intrinsic Layouts”

Intrinsic layouts can also make use of a mix of fixed and flexible units, letting the content choose how much space it occupies.
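A minimal sketch of mixing fixed, flexible, and content-sized tracks in one grid follows. The `.layout` class and the 200px value are assumptions for illustration.

```css
/* Hypothetical selector and sizes, for illustration only. */
.layout {
  display: grid;
  /* fixed sidebar, flexible main column, content-sized aside */
  grid-template-columns: 200px 1fr max-content;
  gap: 10px;
}
```

Here the first column never changes, the last column is as wide as its content needs, and the middle column takes whatever space remains.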

What makes intrinsic design stand out is that it not only creates designs that can withstand future devices but also helps scale design without losing flexibility. Components and patterns can be lifted and reused without needing the same breakpoints or the same amount of content as in the previous implementation.

We can now create designs that adapt to the space they have, the content within them, and the content around them. We can create responsive components using an intrinsic approach without relying on container queries.

Another 2010 moment?

This intrinsic approach should in my view be every bit as groundbreaking as responsive web design was ten years ago. It’s another “everything changed” moment for me.

But it doesn’t seem to be moving quite as fast. Despite the widely shared and brilliant talk that brought it to my attention, I haven’t yet had that same career-changing moment I had with responsive design.

One possible explanation is that I now work for a sizable company, which is significantly different from the design agency role I held in 2010. In my agency days, every new project was a clean slate, a chance to try something new. Nowadays, projects use existing tools and frameworks and are often improvements to existing websites with an existing codebase.

Another possibility is that I’m now more prepared for change. In 2010 I was new to design in general, so the shift was frightening and required a lot of learning. Additionally, an intrinsic approach isn’t exactly all-new; it’s about applying existing skills and CSS knowledge in a different way.

You can’t framework your way out of a content problem

Another reason for the slightly slower adoption of intrinsic design could be the lack of quick-fix framework solutions available to kick-start the change.

Ten years ago, responsive grid systems were everywhere. With a framework like Bootstrap or Skeleton, you had a responsive design template at your fingertips.

Because the benefit of having a selection of units is a hindrance when it comes to creating layout templates, intrinsic design and frameworks do not go hand in hand quite as well. The beauty of intrinsic design is combining different units and experimenting with techniques to get the best for your content.

And then there are design tools. We probably all used Photoshop templates for desktop, tablet, and mobile devices at some point in our careers to drop designs in and demonstrate how the site would look at each of the three stages.

How do you do that now, with each component responding to content and layouts flexing as and when they need to? This kind of design must take place in the browser, which is something I’m very fond of.

The debate about “whether designers should code” is another that has rumbled on for years. When designing a digital product, we should, at the very least, design for a best- and worst-case scenario when it comes to content. It’s not ideal to implement this in a graphics-based software package. In code, we can add longer sentences, more radio buttons, and extra tabs, and watch in real time as the design adapts. Does it continue to function? Is the design too reliant on the current content?

Personally, I look forward to the day intrinsic design is the standard for design, when a design component can be truly flexible and adapt to both its space and content with no reliance on device or container dimensions.

Content should come first

Content is not constant. After all, to design for the unanticipated or unexpected, we must take into account changes in content, such as in our earlier Subgrid card illustration, which allowed the cards to adjust to changes in both their own content and the content of sibling elements.

Thankfully, there’s more to CSS than layout, and plenty of properties and values can help us put content first. Subgrid and pseudo-elements like ::first-line and ::first-letter help to separate design from markup so we can create designs that allow for changes.

Instead of dated markup tricks like this —

First line of text with different styling...

—we can target content based on where it appears.

    .element::first-line {
      font-size: 1.4em;
    }

    .element::first-letter {
      color: red;
    }

Much bigger additions to CSS include logical properties, which change the way we construct designs using logical dimensions (start and end) instead of physical ones (left and right), something CSS Grid also does with functions like min(), max(), and clamp().

This flexibility allows for directional changes according to content, a common requirement when we need to present content in multiple languages. In the past, this was often achieved with Sass mixins but was often limited to switching from left-to-right to right-to-left orientation.

Directional variables must be set in the Sass version.

    $direction: rtl;
    $opposite-direction: ltr;
    $start-direction: right;
    $end-direction: left;

These variables can be used as values:

    body {
      direction: $direction;
      text-align: $start-direction;
    }

They can also be used as properties:

    margin-#{$end-direction}: 10px;
    padding-#{$start-direction}: 10px;

However, now we have native logical properties, removing the reliance on both Sass (or a similar tool) and the pre-planning that necessitated using variables throughout a codebase. These properties also start to break apart the tight coupling between a design and strict physical dimensions, creating more flexibility for changes in language and in direction.

    margin-block-end: 10px;
    padding-block-start: 10px;

There are also native start and end values for properties like text-align, which means we can replace text-align: right with text-align: start.
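The Sass example switched between left and right, which are inline-axis sides in horizontal writing modes, whereas the block properties shown above map to top and bottom there. A sketch of the closest native equivalents to the Sass version would therefore use the inline variants. The `.element` selector is a placeholder.

```css
/* Placeholder selector; the properties follow the text direction. */
.element {
  margin-inline-end: 10px;    /* right in LTR, left in RTL */
  padding-inline-start: 10px; /* left in LTR, right in RTL */
  text-align: start;          /* left in LTR, right in RTL */
}
```

With these, setting `direction: rtl` (or `dir="rtl"` in the markup) flips the spacing without any variables.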

Like the earlier examples, these properties help to build out designs that aren’t constrained to one language; the design will reflect the content’s needs.

Fluid and fixed

We briefly covered the power of combining fixed widths with fluid widths with intrinsic layouts. The min() and max() functions are a similar concept, allowing you to specify a fixed value with a flexible alternative.

For min() this means setting a fluid minimum value and a maximum fixed value.

    .element {
      width: min(50%, 300px);
    }

The element in the example above will be 50% of its container as long as the element’s width doesn’t exceed 300px.

For max() we can set a flexible max value and a minimum fixed value.

    .element {
      width: max(50%, 300px);
    }

Now the element will be 50% of its container as long as the element’s width is at least 300px. This means we can set limits but allow content to react to the available space.

The clamp() function builds on this by allowing us to set a preferred value with a third parameter. Now we can allow the element to shrink or grow if it needs to without getting to a point where it becomes unusable.

    .element {
      width: clamp(300px, 50%, 600px);
    }

This time, the element’s width will be 50% of its container (the preferred value), but it will never be narrower than 300px or wider than 600px.
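To show how the three functions relate, the clamp() declaration can be written out with max() and min(), since clamp(MIN, VAL, MAX) resolves the same way as max(MIN, min(VAL, MAX)). This is a sketch for comparison rather than a recommended style.

```css
.element {
  /* Same result as width: clamp(300px, 50%, 600px); */
  width: max(300px, min(50%, 600px));
}
```

For example, in a 1000px container 50% is 500px, which sits inside both limits; in a 400px container the 300px minimum applies, and in a 1600px container the 600px maximum applies.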

With these techniques, we have a content-first approach to responsive design. We can separate content from markup, meaning the changes users make will not affect the design. By anticipating unforeseen language or direction changes, we can begin creating future-proof designs. And we can increase flexibility by setting desired dimensions alongside flexible alternatives, allowing for more or less content to be displayed correctly.

Situation first

Thanks to what we’ve discussed so far, we can cover device flexibility by changing our approach, designing around content and space instead of catering to devices. But what about that last bit of Jeffrey Zeldman’s quote, “…situations you haven’t imagined”?

Designing for someone using a desktop computer is very different from designing for someone using a mobile phone while moving through a crowded street in glaring sunshine. Situations and environments are hard to plan for or predict because they change as people react to their own unique challenges and tasks.

This is why choice is so crucial. One size never fits all, so we need to design for multiple scenarios to create equal experiences for all our users.

Thankfully, there is a lot we can do to provide choice.

Responsible design

“There are parts of the world where mobile data is prohibitively expensive, and where there is little or no broadband infrastructure.”

Chris Ashton, “I Used the Web for a Day on a 50 MB Budget”

One of the biggest assumptions we make is that people interacting with our designs have a good wifi connection and a wide screen monitor. However, our users may be commuters using smaller mobile devices that may experience drops in connectivity while traveling on trains or other modes of transportation. There is nothing more frustrating than a web page that won’t load, but there are ways we can help users use less data or deal with sporadic connectivity.

The srcset attribute allows the browser to decide which image to serve. This means we can create smaller ‘cropped’ images to display on mobile devices in turn using less bandwidth and less data.

The preload attribute can also help us to think about how and when media is downloaded. It can be used to tell a browser about any critical assets that need to be downloaded with high priority, improving perceived performance and the user experience.

There’s also native lazy loading, which indicates assets that should only be downloaded when they are needed.

With srcset, preload, and lazy loading, we can start to tailor a user’s experience based on the situation they find themselves in. What none of this does, however, is allow the user themselves to decide what they want downloaded, as the decision is usually the browser’s to make.

So how can we put users in control?

The return of media queries

Media queries have always been about much more than device sizes. They allow content to adapt to different situations, with screen size being just one of them.

We’ve long been able to check for media types like print and speech and features such as hover, resolution, and color. These checks allow us to provide options that suit more than one scenario; it’s less about one-size-fits-all and more about serving adaptable content.
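A short sketch of two such checks follows, one media type and one media feature. The `.site-nav` and `.card` class names and the shadow values are hypothetical.

```css
/* Hypothetical class names, for illustration only. */

/* Media type: drop navigation when the page is printed. */
@media print {
  .site-nav {
    display: none;
  }
}

/* Media feature: add hover styling only where the primary input can hover. */
@media (hover: hover) {
  .card:hover {
    box-shadow: 0 2px 8px rgba(0, 0, 0, 0.3);
  }
}
```

Neither rule mentions a screen width; both respond to how the content is being used.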

The Media Queries Level 5 spec is still being developed as of this writing. It introduces some really exciting queries that in the future will help us design for multiple other unexpected situations.

For instance, a light-level feature lets you modify styles when a user is in the dark or in bright sunlight. Paired with custom properties, these features allow us to quickly create designs or themes for specific environments.

    @media (light-level: normal) {
      :root {
        --background-color: #fff;
        --text-color: #0b0c0c;
      }
    }

    @media (light-level: dim) {
      :root {
        --background-color: #efd226;
        --text-color: #0b0c0c;
      }
    }

Another key feature of the Level 5 spec is personalization. Instead of creating designs that are the same for everyone, users can choose what works for them. This is achieved by using features like prefers-reduced-data, prefers-color-scheme, and prefers-reduced-motion, the latter two of which already enjoy broad browser support. These features tap into preferences set via the operating system or browser so people don’t have to spend time making each site they visit more usable.

Media queries like this go beyond choices made by a browser to grant more control to the user.
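As a minimal sketch of the two widely supported preference features, the example below reuses the custom property names from the light-level example. The dark color values and the `.animated-banner` class are assumptions.

```css
/* Defaults, used when no preference applies. */
:root {
  --background-color: #fff;
  --text-color: #0b0c0c;
}

/* Swap the theme when the user has asked for a dark color scheme. */
@media (prefers-color-scheme: dark) {
  :root {
    --background-color: #0b0c0c;
    --text-color: #fff;
  }
}

body {
  background-color: var(--background-color);
  color: var(--text-color);
}

/* Hypothetical component: stop its animation when reduced motion is requested. */
@media (prefers-reduced-motion: reduce) {
  .animated-banner {
    animation: none;
  }
}
```

The site never asks the user anything; it reads settings they have already made at the operating system or browser level.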

Expect the unexpected

In the end, we should always anticipate that things will change. Devices in particular change faster than we can keep up, with foldable screens already on the market.

We can’t design for this constantly changing landscape, but we can design for content. By putting content first and allowing that content to adapt to whatever space surrounds it, we can create more robust, flexible designs that increase the longevity of our products.

A lot of the CSS discussed here is about moving away from layouts and putting content at the heart of design. From responsive components to fluid and fixed units, there are still many more things we can do to adopt a more intrinsic approach. Even better, we can test these techniques during the design phase by designing in-browser and watching how our designs adapt in real-time.

When it comes to unexpected circumstances, we need to make sure our products are usable whenever and wherever people need them. We can move closer to achieving this by involving users in our design decisions, by creating choice via browsers, and by giving control to our users with user-preference-based media queries.

Good design for the unexpected should allow for change, provide choice, and give control to those we serve: our users themselves.

Voice Content and Usability

We’ve been conversing for a long time. Whether to present information, perform transactions, or just to check in on one another, people have yammered aside, chattering and gesticulating, through spoken discussion for many generations. Only recently have we begun to write our discussions, and only recently have we outsourced them to the system, a system that exhibits a significantly higher affection for written letter than for the vernacular rigors of spoken language.

Laptops have trouble because between spoken and written speech, talk is more primitive. Machines must wrestle with the complexity of human statement, including the disfluencies and pauses, the gestures and body speech, and the variations in term choice and spoken dialect, which may impede even the most skillfully crafted human-computer interaction. In the human-to-human scenario, spoken language also has the privilege of face-to-face contact, where we can readily interpret nonverbal social cues.

In contrast, written language develops its own fossil record of dated terms and phrases as we record it and retain usages long after they are no longer relevant in spoken communication ( for example, the salutation” To whom it may concern” ). Because it tends to be more consistent, polished, and formal, written text is fundamentally much easier for machines to parse and understand.

Spoken language has no such luxury. Besides the nonverbal cues that decorate conversations with emphasis and emotional context, there are also verbal cues and vocal behaviors that modulate conversation in nuanced ways: how something is said, not what. Whether rapid-fire, low-pitched, high-decibel, sarcastic, stilted, or sighing, our spoken language conveys much more than the written word ever could. So when it comes to voice interfaces, the machines we conduct spoken conversations with, we face exciting challenges as designers and content strategists.

Voice-to-voice interactions

We interact with voice interfaces for a variety of reasons, but according to Michael McTear, Zoraida Callejas, and David Griol in The Conversational Interface, those motivations by and large mirror the reasons we initiate conversations with other people, too. We typically strike up a conversation because:

- we need something done (such as a transaction),

- we want to know something (information of some sort), or

- we are social beings and want someone to talk to (conversation for conversation’s sake).

These three categories, which I call transactional, informational, and prosocial, also characterize virtually every voice interaction: a single conversation that begins with the voice interface’s first greeting and ends with the user exiting the interface. Note here that a conversation in our human sense (a chat between people that leads to some result and lasts an arbitrary length of time) could encompass multiple transactional, informational, and prosocial voice interactions in succession. In other words, a voice interaction is a conversation, but a conversation is not necessarily a single voice interaction.

Purely prosocial conversations are more gimmicky than captivating in most voice interfaces, because machines don’t yet have the capacity to really want to know how we’re doing and to do the sort of glad-handing humans crave. There is also ongoing debate as to whether users actually prefer the kind of organic human conversation that begins with a prosocial voice interaction and shifts seamlessly into other types. In fact, in Voice User Interface Design, Michael Cohen, James Giangola, and Jennifer Balogh recommend sticking to users’ expectations by mimicking how they interact with other voice interfaces rather than trying too hard to be human, potentially alienating them in the process.

That leaves two types of conversation we have with one another that a voice interface can also easily have with us: a transactional voice interaction that realizes some outcome, and an informational voice interaction that teaches us something new (“discuss a musical”).

Transactional voice interactions

Unless you’re tapping buttons on a food delivery app, you’re typically having a conversation, and therefore a voice interaction, when you order a Hawaiian pizza with extra pineapple. Even when we walk up to the counter and place an order, the conversation quickly pivots from an initial smattering of neighborly small talk to the real mission at hand: ordering a pizza (generously topped with pineapple, as it should be).

Alison: Hey, how are things going?

Burhan: Hi, welcome to Crust Deluxe! It’s chilly outside. How can I help you?

Alison: Can I get a Hawaiian pizza with extra pineapple?

Burhan: Sure, what size?

Alison: Large.

Burhan: Anything else?

Alison: No, that’s it.

Burhan: Something to drink?

Alison: I’ll have a bottle of Coke.

Burhan: You got it. That’ll be about $15, and it will take about fifteen minutes.

Each progressive disclosure in this transactional conversation reveals more and more of the desired outcome of the transaction: a service rendered or a product delivered. Transactional conversations have certain key traits: they are direct, to the point, and economical. They quickly dispense with pleasantries.

Informational voice interactions

Meanwhile, some conversations are primarily about obtaining information. Though Alison might visit Crust Deluxe with the sole purpose of placing an order, she might not actually want to walk out with a pizza at all. She might be just as interested in whether they serve halal or kosher dishes, gluten-free options, or something else. Here, though we again have a prosocial mini-conversation at the beginning to establish politeness, we’re after much more.

Alison: Hey, how are things going?

Burhan: Hi, welcome to Crust Deluxe! It’s chilly outside. How can I help you?

Alison: Can I ask a few questions?

Burhan: Of course! Go right ahead.

Alison: Do you have any halal options available on the menu?

Burhan: Absolutely! On request, we can make any pie halal. We also have lots of vegetarian, ovo-lacto, and vegan options. Do you have any other dietary restrictions in mind?

Alison: What about gluten-free pizzas?

Burhan: For both our deep-dish and thin-crust pizzas, we can definitely make a gluten-free crust for you, without a problem. Anything else I can answer for you?

Alison: That’s it for the moment. Good to know. Thank you!

Burhan: Anytime, come back soon!

This is a very different dialogue. Here, the goal is to get a certain set of facts. Informational conversations are investigative quests for the truth, research expeditions to gather information. Voice interactions that are informational might be more long-winded than transactional conversations by necessity. Responses tend to be lengthier, more informative, and carefully communicated so that the customer understands the key takeaways.

Voice Interfaces

Voice interfaces, in essence, use speech to assist users in accomplishing their objectives. But simply because an interface has a voice component doesn’t mean that every user interaction with it is mediated through voice. Because multimodal voice interfaces can lean on visual components like screens as crutches, we’re most concerned with pure voice interfaces, which depend entirely on spoken conversation, lack any visual component, and are therefore much more nuanced and challenging to tackle.

Though voice interfaces have long been integral to the imagined future of humanity in science fiction, only recently have those lofty visions become fully realized in genuine voice interfaces.

Interactive voice response (IVR) systems

Though written conversational interfaces have been fixtures of computing for many decades, voice interfaces first emerged in the early 1990s with text-to-speech (TTS) dictation programs that recited written text aloud, as well as speech-enabled in-car systems that gave directions to a user-provided address. With the advent of interactive voice response (IVR) systems, intended as an alternative to overburdened customer service representatives, we became acquainted with the first true voice interfaces that could actually be spoken to.

IVR systems allowed organizations to reduce their reliance on call centers but soon became notorious for their clunkiness. Commonplace in the corporate world, these systems were primarily designed as metaphorical switchboards to guide customers to a real phone agent (“Say Reservations to book a flight or check an itinerary”); chances are you will encounter one when you call an airline or hotel company. Despite their functional issues and users’ frustration with their inability to speak to an actual human right away, IVR systems proliferated in the early 1990s across a variety of industries.

While IVR systems are well suited to highly repetitive, monotonous conversations that typically don’t veer from a single format, they have a reputation for less scintillating conversation than we’re used to in real life (or even in science fiction).

Screen readers

Parallel to the evolution of IVR systems was the invention of the screen reader, a tool that converts visual content into synthesized speech. For Blind or visually impaired website users, it’s the predominant method of interacting with text, multimedia, or form elements. Screen readers represent perhaps the closest equivalent we have today to an out-of-the-box delivery of content via voice.

Among the first screen readers known by that moniker was the Screen Reader for the BBC Micro and NEC Portable developed by the Research Centre for the Education of the Visually Handicapped (RCEVH) at the University of Birmingham in 1986. That same year, Jim Thatcher created the first IBM Screen Reader for text-based computers, which was later recreated for computers with graphical user interfaces (GUIs).

With the rapid growth of the web in the 1990s, the demand for accessible tools for websites exploded. With the introduction of semantic HTML and especially ARIA roles in 2008, screen readers started facilitating speedy interactions with web pages that ostensibly allow disabled users to traverse the page as an aural and temporal space rather than a visual and physical one. In other words, screen readers for the web “provide mechanisms that translate visual design constructs – proximity, proportion, etc. – into useful information,” writes Aaron Gustafson in A List Apart. “At least they do when documents are authored thoughtfully.”

Though deeply instructive for voice interface designers, screen readers have one significant problem: they’re difficult to use and relentlessly verbose. The visual structures of websites and web navigation don’t translate well to screen readers, sometimes resulting in unwieldy pronouncements that name every manipulable HTML element and announce every formatting change. Working with web-based interfaces takes a cognitive toll for many screen reader users.

In Wired, accessibility advocate and voice engineer Chris Maury considers why the screen reader experience is ill-suited to users relying on voice:

From the beginning, I hated the way that Screen Readers work. Why are they designed the way they are? It makes no sense to present information visually and only then convert it to audio. All of the time and energy that goes into creating the perfect user experience for an app is wasted, or even worse, adversely impacting the experience for blind users.

In many cases, well-designed voice interfaces can speed users to their destination better than long-winded screen reader monologues. After all, users of visual interfaces have the advantage of darting freely around the viewport to find information, ignoring whatever is irrelevant to them. Blind users, meanwhile, are obligated to listen to every utterance synthesized into speech and therefore prize brevity and efficiency. Users with disabilities who have long had no choice but to use clumsy screen readers might find that voice interfaces, especially more contemporary voice assistants, provide a more streamlined experience.

Voice assistants

When we think of voice assistants, the subset of voice interfaces now commonplace in living rooms, smart homes, and offices, many of us immediately hear Majel Barrett’s voice as the omniscient computer in Star Trek. Voice assistants are akin to personal concierges that can answer questions, schedule appointments, conduct searches, and perform other common day-to-day tasks. And because of their assistive potential, they are quickly gaining more and more attention from accessibility advocates.

Before the earliest IVR systems found success in the enterprise, Apple published a demonstration video in 1987 depicting the Knowledge Navigator, a voice assistant that could transcribe spoken words and recognize human speech to a great degree of accuracy. Then, in 2001, Tim Berners-Lee and others formulated their vision for a Semantic Web “agent” that would carry out routine tasks like “checking calendars, making appointments, and finding locations.” It wasn’t until 2011 that Apple’s Siri finally entered the picture, making voice assistants a tangible reality for consumers.

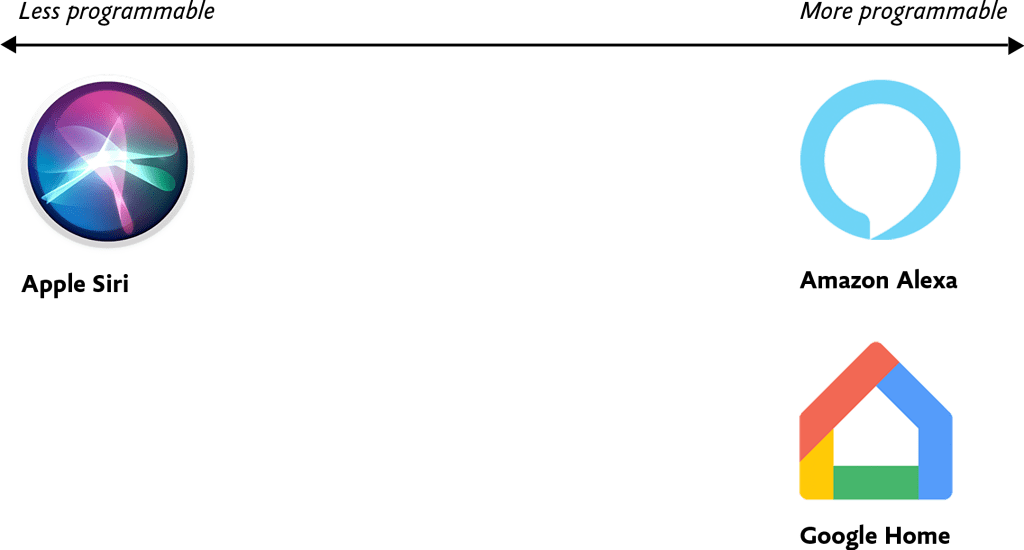

Thanks to the sheer number of voice assistants available today, there is considerable variation in how programmable and customizable some voice assistants are compared to others (Fig. 1). At one extreme, everything except vendor-provided features is locked down; for example, at the time of their release, the core functionality of Apple’s Siri and Microsoft’s Cortana couldn’t be extended beyond their existing capabilities. Even today, developers have no means of interacting with Siri at a low level, apart from predefined categories of tasks like sending messages, hailing rideshares, making restaurant reservations, and certain others.

At the opposite end of the spectrum, voice assistants like Amazon Alexa and Google Home offer a core foundation on which developers can build custom voice interfaces. For this reason, developers who feel stifled by the limitations of Siri and Cortana are increasingly using programmable voice assistants that are capable of customization and extensibility. Amazon offers the Alexa Skills Kit, a developer framework for building custom voice interfaces for Amazon Alexa, while Google Home offers the ability to program arbitrary Google Assistant skills. Users today have the option to choose from among the thousands of custom-built skills available in the Google Assistant and Amazon Alexa ecosystems.

As corporations like Amazon, Apple, Microsoft, and Google continue to stake out their territory, they’re also selling and open-sourcing an unprecedented array of tools and frameworks for designers and developers that aim to make building voice interfaces as easy as possible, even without code.

Often by necessity, voice assistants like Amazon Alexa tend to be monochannel—they’re tightly coupled to a device and can’t be accessed on a computer or smartphone instead. In contrast, many development platforms, such as Google’s Dialogflow, have omnichannel capabilities that allow users to create a single conversational interface that then manifests as a voice interface, textual chatbot, and IVR system upon deployment. I don’t prescribe any specific implementation approaches in this design-focused book, but in Chapter 4 we’ll get into some of the implications these variables might have on the way you build out your design artifacts.

Voice Content

Simply put, voice content is content delivered through voice. To preserve what makes human conversation so compelling in the first place, voice content needs to be free-flowing and organic, contextless and concise: everything written content isn’t.

Our world is replete with voice content in various forms: screen readers reciting website content, voice assistants rattling off a weather forecast, and automated phone hotline responses governed by IVR systems. In this book, we’re most concerned with content delivered auditorily, not as an option but as a necessity.

For many of us, our first foray into informational voice interfaces will be to deliver content to users. There’s only one problem: any content we already have isn’t in any way ready for this new habitat. So how do we make the content trapped on our websites more conversational? And how do we write new copy that lends itself to voice interactions?

Lately, we’ve begun slicing and dicing our content in unprecedented ways. Websites are, in many ways, colossal vaults of what I call macrocontent: lengthy prose that can extend for scrollable miles in a browser window, like microfilm viewers of newspaper archives. Back in 2002, well before the present-day ubiquity of voice assistants, technologist Anil Dash defined microcontent as permalinked pieces of content that stay legible regardless of environment, such as email or text messages:

An example of microcontent can be a day’s weather forecast [sic], the arrival and departure times for an airplane flight, an abstract from a lengthy publication, or a single instant message.

I would update Dash’s definition of microcontent to include all examples of bite-sized content that go well beyond written communiqués. After all, today we encounter microcontent in interfaces where a small snippet of copy is displayed alone, unmoored from the browser, like a textbot confirmation of a restaurant reservation. Microcontent offers the best opportunity to gauge how your content can be stretched to the very limits of its potential, informing delivery channels both established and novel.

As microcontent, voice content is unique because it’s an example of how content is experienced in time rather than in space. We can glance at a digital sign underground for an instant and know when the next train is arriving, but voice interfaces hold our attention captive for stretches of time that we can’t easily escape or skip, something screen reader users are all too familiar with.

Because microcontent is fundamentally made up of isolated blobs with no relation to the channels where they’ll eventually end up, we need to ensure that our microcontent truly performs well as voice content—and that means focusing on the two most important traits of robust voice content: voice content legibility and voice content discoverability.

Our voice content’s legibility and discoverability in general both depend on how it manifests in terms of perceived space and time.

Sustainable Web Design, An Excerpt

In the 1950s, many in the elite running community had come to believe that it was impossible to run a mile in less than four minutes. Runners had been attempting it since the late 19th century and were beginning to draw the conclusion that the human body simply wasn’t built for the task.

But on May 6, 1954, Roger Bannister took everyone by surprise. It was a cold, wet day in Oxford, England (conditions no one expected to lend themselves to record-setting), and yet Bannister did just that, running a mile in 3:59.4 and becoming the first person in the record books to run a mile in under four minutes.

This shift in the benchmark had profound effects: the world now knew that the four-minute mile was possible. Bannister’s record lasted just forty-six days before it was snatched away by Australian runner John Landy. Later, three runners all beat the four-minute barrier together in the same race. Since then, over 1,400 runners have run a mile in under four minutes; the current record is 3:43.13, held by Moroccan athlete Hicham El Guerrouj.

We achieve far more when we believe that something is possible, and we believe it’s possible only when we see that someone else has already done it. As with human running speed, so it is with what we believe are the hard limits for how a website needs to perform.

Establishing requirements for a green website

In most major industries, the key metrics of environmental performance are fairly well established, such as energy per square metre for homes and miles per gallon for cars. The tools and methods for calculating those metrics are standardized as well, which keeps everyone on the same page when doing environmental assessments. In the world of websites and apps, however, we aren’t held to any particular environmental standards, and we have only recently gained the tools and methods we need to make an environmental assessment at all.

The primary goal in sustainable web design is to reduce carbon emissions. However, it’s nearly impossible to actually measure the amount of CO2 produced by a web product. We can’t measure the fumes coming out of the exhaust pipes on our devices. The emissions of our websites come from power plants burning coal and oil, far away, out of sight, and out of mind. We have no way to trace the electrons from a website or app back to the power station where the electricity is being generated and actually know the exact amount of greenhouse gas produced. So what do we do?

If we can’t measure the actual carbon emissions, then we need to find what we can measure. The primary factors that could be used as indicators of carbon emissions are:

- Transfer of data

- Carbon intensity of electricity

Let’s take a look at how we can use these indicators to calculate the energy use, and in turn the carbon footprint, of the sites and web applications we create.

Transfer of data

Most researchers use kilowatt-hours per gigabyte (kWh/GB) as a metric of energy efficiency when measuring the amount of data transferred over the internet when a website or application is used. This provides a great reference point for energy consumption and carbon emissions. As a rule of thumb, the more data transferred, the more energy used in the data center, telecoms networks, and end user devices.

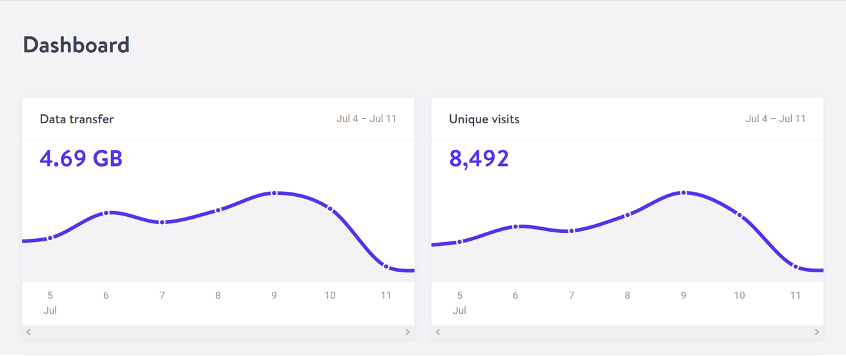

For web pages, data transfer for a single visit can be most easily estimated by measuring the page weight, meaning the transfer size of the page in kilobytes the first time someone visits it. It’s fairly easy to measure using the developer tools in any modern web browser. Often, your web hosting account will also include statistics for the total data transfer of any web application (Fig. 2.1).

The nice thing about page weight as a metric is that it allows us to compare the efficiency of web pages on a level playing field without confusing the issue with constantly changing traffic volumes.

There is huge scope for reducing page weight. By early 2020, the median page weight was 1.97 MB for setups the HTTP Archive classifies as “desktop” and 1.77 MB for “mobile”, with desktop increasing 36 percent since January 2016 and mobile page weights nearly doubling in the same period (Fig 2.2). Image files account for roughly half of this data transfer, making them the single biggest contributor to carbon emissions on a typical website.

History clearly shows us that our web pages can be smaller, if only we set our minds to it. While most technologies, including the underlying technology of the web like data centers and transmission networks, become more and more energy efficient, websites themselves become less efficient as time goes on.

You may be aware of the idea of performance budgeting as a method for directing a project team to deliver faster user experiences. For example, we might specify that the website must load in a maximum of one second on a broadband connection and three seconds on a 3G connection. Performance budgets are upper limits rather than vague suggestions, much like speed limits while driving, so the goal should always be to come in within budget.

Designing for fast performance does often lead to reduced data transfer and emissions, but it isn’t always the case. Page weight and transfer size are more objective and reliable benchmarks for sustainable web design, but web performance is frequently more about the subjective perception of load times than it is about the underlying system’s true efficiency.

We can set a page weight budget in reference to a benchmark of industry averages, using data from sources like HTTP Archive. We can also benchmark page weight against competitors or against the old version of the website we’re replacing. For example, we might set a maximum page weight budget as equal to our most efficient competitor, or we could set the benchmark lower to guarantee we are best in class.
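As a rough illustration of the budgeting idea above, here is a minimal sketch in Python. The record type, function names, and every figure in it (a 900 KB competitor, a 10 percent margin, the two page sizes) are hypothetical and chosen only to show the mechanics; they are not measurements.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PageMeasurement:
    """First-visit transfer size for one page (hypothetical record type)."""
    url: str
    transfer_kb: float


def budget_from_benchmark(benchmark_kb: float, margin: float = 0.0) -> float:
    """Return a page weight budget set `margin` (0 to <1) below a benchmark."""
    if benchmark_kb <= 0:
        raise ValueError("benchmark_kb must be positive")
    if not 0.0 <= margin < 1.0:
        raise ValueError("margin must be in the range [0, 1)")
    return benchmark_kb * (1.0 - margin)


def pages_over_budget(pages: List[PageMeasurement], budget_kb: float) -> List[PageMeasurement]:
    """Return the pages whose first-visit transfer size exceeds the budget."""
    return [page for page in pages if page.transfer_kb > budget_kb]


# Hypothetical figures: our lightest competitor weighs 900 KB and we aim 10% lower.
budget_kb = budget_from_benchmark(benchmark_kb=900.0, margin=0.10)
pages = [
    PageMeasurement("/", 640.0),
    PageMeasurement("/blog", 1250.0),
]
for page in pages_over_budget(pages, budget_kb):
    print(f"{page.url} is over budget: {page.transfer_kb:.0f} KB > {budget_kb:.0f} KB")
```

Setting `margin` to zero matches the most efficient competitor exactly, while a positive margin corresponds to setting the benchmark lower to aim for best in class.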

If we want to take it one step further, we could start looking at the transfer size of our web pages for repeat visitors. Although page weight for the first time someone visits is the easiest thing to measure, and easy to compare on a like-for-like basis, we can learn even more if we start looking at transfer size in other scenarios too. For instance, visitors who load the same page more frequently are likely to have a high percentage of the files cached in their browser, which means they don’t need to transfer all the files on subsequent visits. Likewise, a visitor who navigates to new pages on the same website will likely not need to load the full page each time, as some global assets from areas like the header and footer may already be cached in their browser. Assessing transfer size at this next level of detail can help us learn even more about how to optimize efficiency for users who regularly visit our pages, and lets us set page weight budgets for scenarios beyond the first visit.
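To make the difference between first and repeat visits concrete, the sketch below sums only the assets that still need to be transferred. The asset names and sizes are hypothetical, and it assumes, for simplicity, that a cached file costs no transfer at all, which ignores revalidation requests.

```python
from typing import Dict, Iterable


def transfer_kb(assets: Dict[str, float], cached: Iterable[str] = ()) -> float:
    """Sum the sizes (KB) of the assets that are not already in the browser cache."""
    cached_names = set(cached)
    return sum(size for name, size in assets.items() if name not in cached_names)


# Hypothetical asset sizes in KB for a single page.
page_assets = {
    "index.html": 30.0,
    "site.css": 60.0,
    "app.js": 250.0,
    "hero.jpg": 400.0,
}

first_visit = transfer_kb(page_assets)
# On a repeat visit we assume the global CSS and JavaScript come from the cache.
repeat_visit = transfer_kb(page_assets, cached={"site.css", "app.js"})
print(f"first visit: {first_visit:.0f} KB, repeat visit: {repeat_visit:.0f} KB")
```

Tracking both numbers against separate budgets is one way to cover scenarios beyond the first visit.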

Page weight budgets are easy to track throughout a design and development process. Although they don’t directly disclose carbon emissions and energy consumption data, they do provide a clear indicator of efficiency in comparison to other websites. And as transfer size is an effective analog for energy consumption, we can actually use it to estimate energy consumption too.

In summary, less data transfer leads to more energy efficiency, which is a crucial component of reducing web product carbon emissions. The more efficient our products, the less electricity they use, and the less fossil fuels need to be burned to produce the electricity to power them. However, as we’ll see next, it’s important to take into account the source of that electricity because all web products require some power.

Carbon intensity of electricity

Regardless of energy efficiency, the level of pollution caused by digital products depends on the carbon intensity of the energy being used to power them. Carbon intensity is a term used to describe how many grams of CO2 are produced for every kilowatt-hour of electricity (gCO2/kWh). This varies widely, with renewable energy sources and nuclear having an extremely low carbon intensity of less than 10 gCO2/kWh (even when factoring in their construction), whereas fossil fuels have very high carbon intensity of approximately 200–400 gCO2/kWh.

Most electricity comes from national or state grids, where energy from a variety of sources with different carbon intensities is mixed together. The distributed nature of the internet means that a single user of a website or app might be using energy from multiple different grids simultaneously: a website user in Paris uses electricity from the French national grid to power their home internet and devices, but the website’s data center could be in Dallas, USA, pulling electricity from the Texas grid, while the telecoms networks use energy from everywhere between Dallas and Paris.
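One way to picture a grid that mixes sources is as a weighted average of their carbon intensities. The sketch below assumes that simple model; the function name and both grid mixes are hypothetical, with intensities picked from within the ranges quoted earlier rather than taken from any real grid.

```python
from typing import Mapping, Tuple


def blended_intensity(mix: Mapping[str, Tuple[float, float]]) -> float:
    """Weighted-average carbon intensity (gCO2/kWh) of a grid.

    `mix` maps a source name to (share of generation, intensity in gCO2/kWh).
    """
    total_share = sum(share for share, _ in mix.values())
    if abs(total_share - 1.0) > 1e-6:
        raise ValueError("shares must add up to 1.0")
    return sum(share * intensity for share, intensity in mix.values())


# Two hypothetical grids: one mostly low-carbon, one mostly fossil-fueled.
grid_a = {"low_carbon": (0.75, 10.0), "fossil": (0.25, 400.0)}
grid_b = {"low_carbon": (0.25, 10.0), "fossil": (0.75, 400.0)}

print(f"grid A: {blended_intensity(grid_a):.1f} gCO2/kWh")
print(f"grid B: {blended_intensity(grid_b):.1f} gCO2/kWh")
```

The same kilowatt-hour therefore carries a very different carbon cost depending on which grid supplies it.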

Although we have some control over where our projects are hosted, we do not have complete control over the energy supply of web services. With a data center using a significant proportion of the energy of any website, locating the data center in an area with low carbon energy will tangibly reduce its carbon emissions. This user-provided data is reported and mapped by Danish startup Tomorrow, and a look at their map demonstrates how, for instance, choosing a data center in France will result in significantly lower carbon emissions than choosing a data center in the Netherlands ( Fig. 2.3 ).

However, we don’t want to move our servers too far away from our users: it takes energy to transmit data through the telecoms networks, and the further the data travels, the more energy is used. Just like food miles, we can think of the distance from the data center to the website’s core user base as “megabyte miles,” and we want it to be as small as possible.

Using the distance itself as a benchmark, we can use website analytics to determine the country, state, or even city where our core user group is located and measure the distance from that location to the data center used by our hosting company. This will be a somewhat fuzzy metric as we don’t know the precise center of mass of our users or the exact location of a data center, but we can at least get a rough idea.

For instance, if a website is hosted in London but the main audience is on the West Coast of the United States, we could look up the distance between London and San Francisco, which is 5,300 miles. That’s a long way! We can see how hosting it somewhere in North America, ideally on the West Coast, would significantly shorten the distance and the amount of energy needed to transmit the data. In addition, locating our servers closer to our visitors helps reduce latency and delivers better user experience, so it’s a win-win.
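If we would rather compute a distance than look it up, a great-circle calculation is one option. The sketch below uses the haversine formula with approximate city-center coordinates and a mean Earth radius, all of which are assumptions; real network routes are longer than the great-circle path, so treat the result as a lower bound that should land close to the rough figure quoted above.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean Earth radius; an approximation


def great_circle_miles(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine great-circle distance in miles between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = (
        math.sin(dphi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    )
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


# Approximate city-center coordinates (assumed, not exact data center locations).
LONDON = (51.5074, -0.1278)
SAN_FRANCISCO = (37.7749, -122.4194)

distance = great_circle_miles(*LONDON, *SAN_FRANCISCO)
print(f"London to San Francisco: roughly {distance:,.0f} miles")
```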

Converting it back to carbon emissions

If we combine carbon intensity with a calculation for energy consumption, we can calculate the carbon emissions of our websites and apps. A tool my team created does this by measuring the data transferred when loading a web page, calculating the associated amount of electricity, and then converting that into a figure for CO2 (Fig. 2.4). It also factors in whether or not the web hosting is powered by renewable energy.
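The chain of conversions can be sketched in a few lines of Python. This is not the tool described above, only an illustration of the same three steps under stated assumptions: a decimal gigabyte, a caller-supplied energy factor in kWh/GB, and a hypothetical `datacenter_share` parameter for the slice of energy that renewable hosting would cover. Every number in the usage lines is a placeholder, not a published coefficient.

```python
from typing import Optional

BYTES_PER_GB = 1_000_000_000  # decimal gigabyte; an assumption of this sketch


def estimate_co2_grams(
    transfer_bytes: float,
    kwh_per_gb: float,
    grid_g_per_kwh: float,
    datacenter_share: float = 0.0,
    datacenter_g_per_kwh: Optional[float] = None,
) -> float:
    """Estimate grams of CO2 for a given amount of data transfer.

    Steps: bytes -> gigabytes -> kWh (via kwh_per_gb) -> gCO2 (via intensity).
    `datacenter_share` is the fraction of energy attributed to the data center,
    which may run on a different (for example renewable) supply.
    """
    if transfer_bytes < 0 or kwh_per_gb < 0 or grid_g_per_kwh < 0:
        raise ValueError("inputs must be non-negative")
    if not 0.0 <= datacenter_share <= 1.0:
        raise ValueError("datacenter_share must be between 0 and 1")
    if datacenter_g_per_kwh is None:
        datacenter_g_per_kwh = grid_g_per_kwh

    energy_kwh = (transfer_bytes / BYTES_PER_GB) * kwh_per_gb
    datacenter_kwh = energy_kwh * datacenter_share
    network_and_device_kwh = energy_kwh - datacenter_kwh
    return (
        datacenter_kwh * datacenter_g_per_kwh
        + network_and_device_kwh * grid_g_per_kwh
    )


# Placeholder inputs: 2 GB transferred, 1 kWh/GB, and a 400 gCO2/kWh grid.
standard = estimate_co2_grams(2_000_000_000, kwh_per_gb=1.0, grid_g_per_kwh=400.0)
# Same transfer, assuming a quarter of the energy is data center energy at 10 gCO2/kWh.
green_hosted = estimate_co2_grams(
    2_000_000_000,
    kwh_per_gb=1.0,
    grid_g_per_kwh=400.0,
    datacenter_share=0.25,
    datacenter_g_per_kwh=10.0,
)
print(f"standard hosting: {standard:.0f} g, renewable hosting: {green_hosted:.0f} g")
```

Keeping the energy factor and intensities as parameters means the same function can be reused as better estimates become available.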

The Energy and Emissions Worksheet that comes with this book teaches you how to take it one step further and tailor the data more precisely to the unique aspects of your project.

With the ability to calculate carbon emissions for our projects, we could even set up carbon budgets as well. CO2 is not a metric commonly used in web projects; we’re more familiar with kilobytes and megabytes, and can fairly easily look at design options and files to assess how big they are. Although translating that into carbon adds a layer of abstraction that isn’t as intuitive, carbon budgets do focus our minds on the primary thing we’re trying to reduce, and they support the core objective of sustainable web design: reducing carbon emissions.

Browser Energy

Transfer of data might be the simplest and most complete analog for energy consumption in our digital projects, but by giving us one number to represent the energy used in the data center, the telecoms networks, and the end user’s devices, it can’t offer us insights into the efficiency in any specific part of the system.

One part of the system we can look at in more detail is the energy used by end users’ devices. As front-end web technologies advance, the computational load is increasingly shifting from the data center to users’ devices, whether they are phones, tablets, laptops, desktops, or even smart TVs. Modern web browsers allow us to implement more complex styling and animation on the fly using CSS and JavaScript. Additionally, JavaScript libraries like Angular and React make it possible to create applications where the “thinking” work is done partially or completely in the browser.

All of these advances are exciting and open up new possibilities for what the web can do to serve society and create positive experiences. However, more computation in the user’s web browser means more energy used by their devices. This has implications not just environmentally, but also for user experience and inclusivity. Applications that put a heavy processing load on the user’s device can inadvertently exclude users with older, slower devices and cause batteries on phones and laptops to drain faster. Furthermore, if we build web applications that require the user to have up-to-date, powerful devices, people throw away old devices much more frequently. This not only hurts the environment, but it also places a disproportionate financial burden on society’s poorest.

It’s difficult to measure website energy consumption on end users’ devices, partly because the tools are limited and partly because there are so many different models of devices. One tool we currently have is the Energy Impact monitor inside the developer console of the Safari browser (Fig. 2.5).

You know when your computer’s cooling fans start spinning so frantically that you suspect it might take off when you load a website? That’s essentially what this tool is measuring.

It shows us the percentage of CPU used and the duration of CPU usage when loading the web page, and uses these figures to generate an energy impact rating. It doesn’t give us precise data for the amount of electricity used in kilowatts, but the information it does provide can be used to benchmark how efficiently your websites use energy and set targets for improvement.