In the 1950s, many elite runners had come to believe that it was impossible to run a mile in less than four minutes. Runners had been attempting it since the late 19th century and were beginning to conclude that the human body simply wasn’t built for the task.

But on May 6, 1954, Roger Bannister caught everyone off guard. It was a cold, damp day in Oxford, England, conditions no one expected to lend themselves to record-setting, and yet Bannister did just that, running a mile in 3:59.4 and becoming the first person in the record books to run a mile in under four minutes.

This shift in the benchmark had a profound effect: the world now knew that the four-minute mile was possible. Bannister’s record lasted just forty-six days before it was snatched away by Australian runner John Landy. Then, in a single race, three runners all broke the four-minute barrier. Since then, over 1,400 runners have officially run a mile in under four minutes; the current record is 3:43.13, held by Moroccan athlete Hicham El Guerrouj.

We achieve far more when we believe something is possible, and we believe it is possible only once we have seen someone else do it. As with human running speed, so it is with what we believe are the hard limits for how a website needs to perform.

Establishing standards for a sustainable web

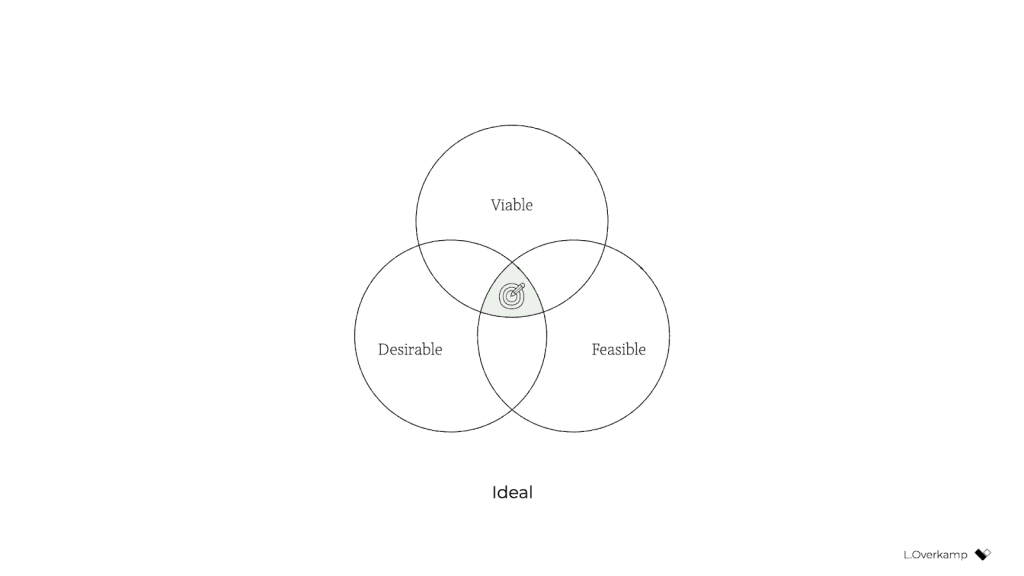

In most major industries, the key metrics of environmental performance are fairly well established, such as energy per square meter for homes and miles per gallon for cars. The tools and methods for calculating those metrics are standardized as well, which keeps everyone on the same page when doing environmental assessments. In the world of websites and apps, however, we aren’t held to any particular environmental standards, and we have only recently gained the tools and methods we need to make an environmental assessment at all.

The primary goal in sustainable web design is to reduce carbon emissions. However, it’s almost impossible to actually measure the amount of CO2 produced by a web product. We can’t measure the fumes coming out of the exhaust pipes on our devices. The emissions of our websites are far away, out of sight and out of mind, coming out of power stations burning coal and gas. We have no way to trace the electrons from a website or app back to the power station where the electricity is being generated and know the exact amount of greenhouse gas produced. So what do we do?

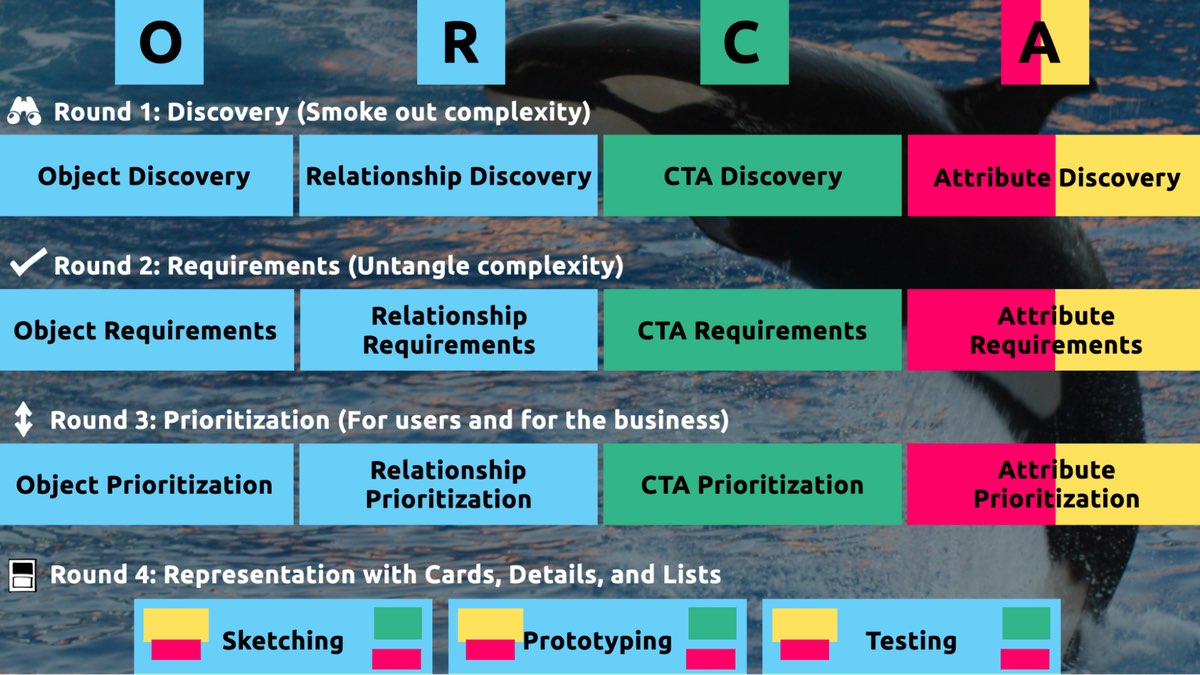

If we can’t measure the actual carbon emissions, then we need to find what we can measure. The primary factors that could be used as indicators of carbon emissions are:

- Data transfer

- Carbon intensity of electricity

Let’s take a look at how we can use these metrics to quantify the energy consumption, and in turn the carbon footprint, of the websites and web apps we create.

Data transfer

Most researchers use kilowatt-hours per gigabyte (kWh/GB) as a metric of energy efficiency when measuring the amount of data transferred over the internet when a website or application is used. This provides a useful reference point for energy consumption and carbon emissions. As a rule of thumb, the more data transferred, the more energy used in the data center, telecoms networks, and end-user devices.
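As a minimal sketch of that rule of thumb, the snippet below turns a transfer size into an energy estimate. The function name and the `kwh_per_gb` value are assumptions made for illustration; the text does not give a specific factor, and published figures vary widely.

```python
BYTES_PER_GB = 1_000_000_000  # decimal gigabyte


def energy_kwh(transfer_bytes: float, kwh_per_gb: float) -> float:
    """Estimate energy use (kWh) from bytes transferred and a kWh/GB factor."""
    if transfer_bytes < 0 or kwh_per_gb < 0:
        raise ValueError("transfer size and energy factor must be non-negative")
    return transfer_bytes / BYTES_PER_GB * kwh_per_gb


# Hypothetical 2 MB page with a placeholder factor of 0.5 kWh/GB.
# The factor is NOT taken from the text; substitute a sourced value.
page_energy_kwh = energy_kwh(2_000_000, kwh_per_gb=0.5)
```

Because the estimate is linear in transfer size, halving the bytes sent halves the estimated energy, whichever factor is chosen.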

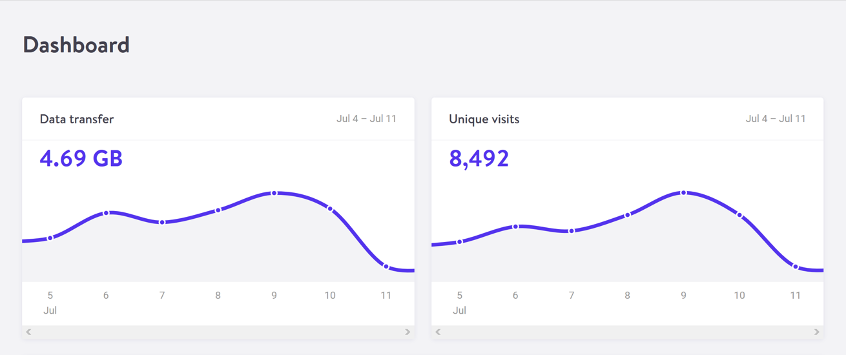

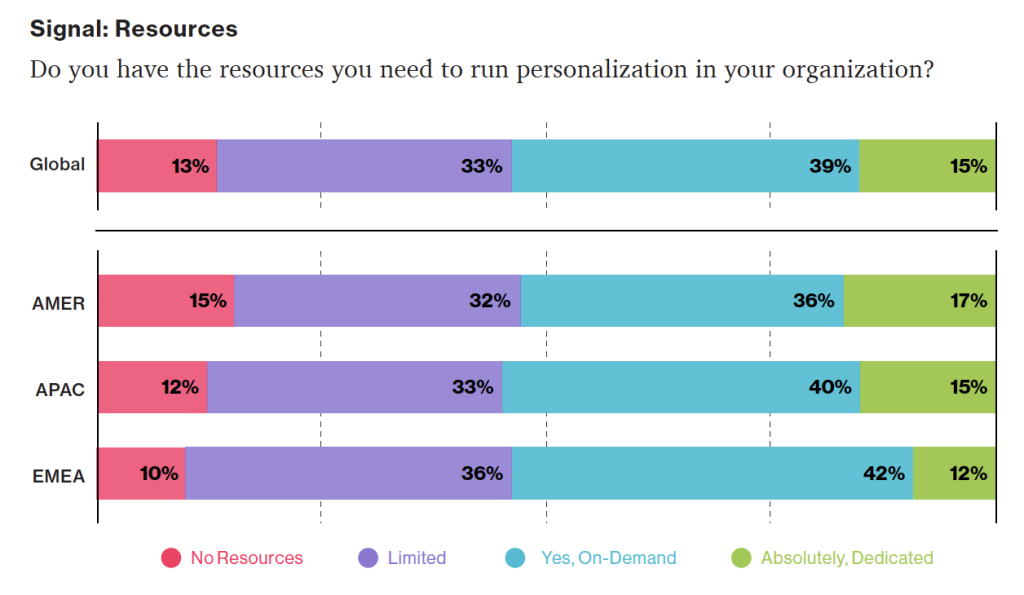

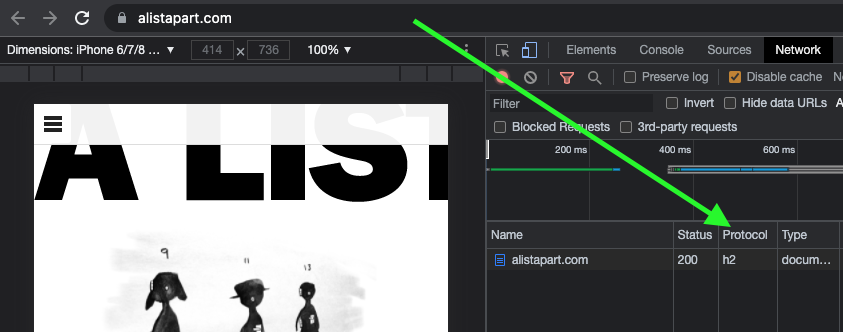

For web pages, data transfer for a single visit can be most easily estimated by measuring the page weight, meaning the transfer size of the page in kilobytes the first time someone visits it. It’s fairly easy to measure using the developer tools in any modern web browser. Often your web hosting account will also include statistics for the total data transfer of any web application (Fig 2.1).
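One way to turn a developer-tools session into a page weight figure is to export the network log as a HAR file and add up the response sizes. The sketch below assumes the HAR 1.2 layout (`log.entries[].response.headersSize` and `bodySize`, with `-1` meaning "not recorded") and assumes the capture was made with an empty cache; the helper names and the sample sizes are made up for illustration.

```python
import json


def load_har(path: str) -> dict:
    """Read a HAR export (JSON) from disk."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def page_weight_kb(har: dict) -> float:
    """Sum response header and body sizes across all requests, in kilobytes."""
    total_bytes = 0
    for entry in har["log"]["entries"]:
        response = entry["response"]
        for field in ("headersSize", "bodySize"):
            size = response.get(field, -1)
            if size > 0:  # -1 means the browser did not record the size
                total_bytes += size
    return total_bytes / 1000


# Tiny in-memory example with made-up sizes (bytes).
sample_har = {
    "log": {
        "entries": [
            {"response": {"headersSize": 200, "bodySize": 1800}},
            {"response": {"headersSize": -1, "bodySize": 3000}},
        ]
    }
}
sample_weight_kb = page_weight_kb(sample_har)
```

For a real page, `page_weight_kb(load_har("page.har"))` would be the call, where `page.har` is whatever file name the export was saved under.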

The nice thing about page weight as a metric is that it allows us to compare the efficiency of web pages on a level playing field without confusing the issue with constantly changing traffic volumes.

There is plenty of scope to reduce page weight. By early 2020, the median page weight was 1.97 MB for setups the HTTP Archive classifies as “desktop” and 1.77 MB for “mobile”, with desktop increasing 36 percent since January 2016 and mobile page weights nearly doubling in the same period (Fig 2.2). Image files account for roughly half of this data transfer, making them the single biggest contributor to carbon emissions on the typical website.

History clearly shows us that our web pages can be smaller, if only we set our minds to it. While most technologies become ever more energy efficient, including the web’s underlying technology such as data centers and transmission networks, websites themselves become less efficient as time goes on.

You may be aware of the idea of performance budgeting as a method for directing a project team to deliver faster user experiences. For example, we might specify that the website must load in a maximum of one second on a broadband connection and three seconds on a 3G connection. Performance budgets are upper limits rather than vague suggestions, much like speed limits while driving, so the goal should always be to come in within budget.

Designing for fast performance does often lead to reduced data transfer and emissions, but it isn’t always the case. Web performance is frequently more about the subjective perception of load times than about the true efficiency of the underlying system, whereas page weight and transfer size are more objective measures and more reliable benchmarks for sustainable web design.

We can set a page weight budget in reference to a benchmark of industry averages, using data from sources like HTTP Archive. We can also benchmark page weight against competitors or against the current version of the website. For example, we might set a maximum page weight budget equal to that of our most efficient competitor, or we could set the benchmark lower to guarantee we are best in class.
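The budgeting approach described above can be sketched as a small helper. This is one possible policy, not a prescribed one: it takes the strictest of the available benchmarks and optionally tightens it by a margin to aim for best in class. The function names, the `margin` parameter, and every number in the example are hypothetical.

```python
def page_weight_budget_kb(
    industry_median_kb: float,
    competitor_weights_kb: list[float],
    current_kb: float,
    margin: float = 0.0,
) -> float:
    """Return a budget equal to the lightest benchmark, reduced by `margin`.

    margin=0.0 matches the most efficient benchmark exactly;
    margin=0.25 sets the budget 25 percent below it.
    """
    if not 0.0 <= margin < 1.0:
        raise ValueError("margin must be in the range [0, 1)")
    benchmarks = [industry_median_kb, current_kb, *competitor_weights_kb]
    return min(benchmarks) * (1.0 - margin)


def within_budget(page_kb: float, budget_kb: float) -> bool:
    """A budget is an upper limit: pages at or below it pass."""
    return page_kb <= budget_kb


# Made-up figures: industry median, three competitors, and our current page.
budget_kb = page_weight_budget_kb(1970, [1200, 800, 1500], 2400, margin=0.25)
```

A check like `within_budget(measured_kb, budget_kb)` could then run during design reviews or in a build step, so that the budget acts as a hard limit rather than a suggestion.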

If we want to take it one step further, we could start looking at the transfer size of our web pages for repeat visitors. Although page weight for the first time someone visits is the easiest thing to measure, and easy to compare on a like-for-like basis, we can learn even more if we start looking at transfer size in other scenarios too. For instance, visitors who load the same page multiple times will likely have a high percentage of the files cached in their browser, which means they won’t need to transfer all the files on subsequent visits. Likewise, a visitor who navigates to new pages on the same website will likely not need to load the full page each time, as some global assets from areas like the header and footer may already be cached in their browser. Measuring transfer size at this next level of detail can teach us even more about how to optimize efficiency for users who regularly visit our pages, and it enables us to set page weight budgets for scenarios beyond the first visit.
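A simple way to fold repeat visits into a single figure is to weight the first-visit and cached-visit transfer sizes by how often each occurs. The sketch below assumes both sizes have already been measured and that analytics supplies the share of repeat visits; the function name and all numbers are illustrative.

```python
def average_transfer_kb(
    first_visit_kb: float,
    repeat_visit_kb: float,
    repeat_share: float,
) -> float:
    """Average transfer per visit, weighted by the share of repeat visits."""
    if not 0.0 <= repeat_share <= 1.0:
        raise ValueError("repeat_share must be between 0 and 1")
    return first_visit_kb * (1.0 - repeat_share) + repeat_visit_kb * repeat_share


# Hypothetical page: 2,000 KB uncached, 500 KB with a warm cache,
# and 40 percent of visits coming from returning users.
typical_visit_kb = average_transfer_kb(2000, 500, repeat_share=0.4)
```

The same weighting could be applied per scenario (second page in a session, returning visitor after a week, and so on) to give each scenario its own budget.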

Page weight budgets are easy to track throughout a design and development process. Although they don’t tell us energy consumption and carbon emissions directly, they do provide a clear indicator of efficiency in comparison to other websites. And as transfer size is an effective analog for energy consumption, we can actually use it to estimate energy consumption too.

In summary, less data transfer leads to more energy efficiency, a crucial component of reducing the carbon emissions of web products. The more efficient our products, the less electricity they use, and the less fossil fuel needs to be burned to produce the electricity to power them. But as we’ll see next, since all web products demand some power, it’s important to consider the source of that electricity, too.

Carbon intensity of electricity

Regardless of energy efficiency, the level of pollution caused by digital products depends on the carbon intensity of the energy being used to power them. The term “carbon intensity” describes how many grams of CO2 are produced for every kilowatt-hour of electricity (gCO2/kWh). This varies widely, with renewable energy sources and nuclear having an extremely low carbon intensity of less than 10 gCO2/kWh (even when factoring in their construction), whereas fossil fuels have a very high carbon intensity of approximately 200–400 gCO2/kWh.

Most electricity comes from national or state grids, where energy from a variety of sources is mixed together with varying levels of carbon intensity. The distributed nature of the internet means that a single user of a website or app might be using energy from multiple different grids simultaneously. A website user in Paris uses electricity from the French national grid to power their home internet and devices, but the website’s data center could be in Dallas, USA, pulling electricity from the Texas grid, while the telecoms networks use energy from everywhere between Dallas and Paris.
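To make the idea of several grids contributing to one page view concrete, here is a minimal sketch that blends carbon intensities by the energy drawn from each source. The segment split and every figure in the example are placeholders, not measurements of the French or Texas grids.

```python
def blended_intensity(segments: list[tuple[float, float]]) -> float:
    """Energy-weighted average carbon intensity in gCO2/kWh.

    Each segment is (energy_kwh, grid_intensity_g_per_kwh).
    """
    total_energy = sum(energy for energy, _ in segments)
    if total_energy <= 0:
        raise ValueError("total energy must be positive")
    total_grams = sum(energy * intensity for energy, intensity in segments)
    return total_grams / total_energy


# Placeholder numbers only: a user device on a lower-carbon grid and a
# data center on a higher-carbon grid.
example_intensity = blended_intensity([
    (1.0, 100.0),  # end-user device and home network
    (3.0, 300.0),  # data center
])
```

The same function works for a single grid's fuel mix: pass one segment per energy source with the energy each contributes.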

We don’t have control over the full energy supply of web services, but we do have some control over where we host our projects. With a data center using a significant proportion of the energy of any website, locating the data center in an area with low-carbon energy will tangibly reduce its carbon emissions. Danish startup Tomorrow reports and maps user-contributed carbon intensity data, and a glance at their map shows how, for instance, choosing a data center in France will result in significantly lower carbon emissions than choosing a data center in the Netherlands (Fig 2.3).

That said, we don’t want to locate our servers too far away from our users; it takes energy to transmit data through the telecoms networks, and the further the data travels, the more energy is consumed. Just like food miles, we can think of the distance from the data center to the website’s core user base as “megabyte miles”, and we want it to be as small as possible.

We can use website analytics to identify the country, state, or even city where our core user group is located, and then measure the distance from that location to the data center used by our hosting company. This will be a somewhat fuzzy metric, as we don’t know the precise center of mass of our users or the exact location of a data center, but we can at least get a rough idea.

For instance, if a website is hosted in London but the main audience is on the West Coast of the USA, we could look up the distance between San Francisco and London, which is about 5,300 miles. That’s a long way! We can see that hosting it somewhere in North America, ideally on the West Coast, would significantly reduce the distance and thus the energy needed to transmit the data. In addition, locating our servers closer to our visitors helps reduce latency and delivers a better user experience, so it’s a win-win.
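The "megabyte miles" distance can be approximated with the haversine great-circle formula. This is a rough sketch: the coordinates are approximate city centers chosen for illustration, the Earth is treated as a sphere, and real network routes are longer than the straight-line distance.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean radius, spherical approximation


def great_circle_miles(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine distance in miles between two latitude/longitude points (degrees)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (
        math.sin(d_phi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    )
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


# Approximate city-center coordinates (assumed for the example).
LONDON = (51.5074, -0.1278)
SAN_FRANCISCO = (37.7749, -122.4194)

megabyte_miles = great_circle_miles(*LONDON, *SAN_FRANCISCO)
```

Swapping in the location of a candidate data center and the city reported by analytics gives a quick like-for-like comparison between hosting options.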

Converting it back to carbon emissions

If we combine carbon intensity with a calculation for energy consumption, we can calculate the carbon emissions of our websites and apps. A tool my team created does this by measuring the data transferred over the wire when loading a web page, calculating the associated electricity, and then converting that into a figure for CO2 (Fig 2.4). It also factors in whether or not the web hosting is powered by renewable energy.
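The chain of conversions (bytes to electricity to CO2, with an adjustment for renewable hosting) can be sketched as follows. This is not the tool's actual method: the function, the idea of applying the renewable intensity only to a data center share of the energy, and every default-free parameter are assumptions to be replaced with sourced values.

```python
def co2_grams(
    transfer_bytes: float,
    kwh_per_gb: float,
    grid_g_per_kwh: float,
    renewable_g_per_kwh: float,
    datacenter_share: float,
    green_hosting: bool,
) -> float:
    """Estimate grams of CO2 for one page load.

    datacenter_share is the assumed fraction of total energy used in the
    data center; the rest (networks and user devices) is charged at the
    grid intensity regardless of hosting.
    """
    if not 0.0 <= datacenter_share <= 1.0:
        raise ValueError("datacenter_share must be between 0 and 1")
    energy_kwh = transfer_bytes / 1_000_000_000 * kwh_per_gb
    datacenter_kwh = energy_kwh * datacenter_share
    other_kwh = energy_kwh - datacenter_kwh
    datacenter_intensity = renewable_g_per_kwh if green_hosting else grid_g_per_kwh
    return datacenter_kwh * datacenter_intensity + other_kwh * grid_g_per_kwh


# Placeholder inputs: 1 GB transferred, 1 kWh/GB, 400 g/kWh grid,
# 10 g/kWh renewables, a quarter of the energy used in the data center.
with_green_host = co2_grams(1_000_000_000, 1.0, 400.0, 10.0, 0.25, True)
with_grid_host = co2_grams(1_000_000_000, 1.0, 400.0, 10.0, 0.25, False)
```

Comparing the two results for the same page shows how much of the estimated footprint a hosting choice can and cannot influence under these assumptions.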

If you want to take it to the next level and tailor the data more accurately to the unique aspects of your project, the Energy and Emissions Worksheet that accompanies this book shows you how.

With the ability to calculate carbon emissions for our projects, we could take a page weight budget one step further and set carbon budgets as well. CO2 is not a metric commonly used in web projects; we’re more familiar with kilobytes and megabytes, and can fairly easily look at design options and files to assess how big they are. Translating that into carbon adds a layer of abstraction that isn’t as intuitive, but carbon budgets do focus our minds on the primary thing we’re trying to reduce, in line with the main goal of sustainable web design: reducing carbon emissions.

Browser Energy

Data transfer might be the simplest and most complete analog for energy consumption in our digital projects, but because it gives us one number to represent the energy used in the data center, the telecoms networks, and the end user’s devices, it can’t offer us insights into the efficiency of any specific part of the system.

One part of the system we can look at in more detail is the energy used by end users’ devices. As front-end web technologies become more advanced, the computational load is increasingly moving from the data center to users’ devices, whether they are phones, tablets, laptops, or smart TVs. Modern web browsers allow us to implement more complex styling and animation on the fly using CSS and JavaScript. Additionally, JavaScript libraries like Angular and React make it possible to create applications where the “thinking” is done partly or entirely in the browser.

All of these advances are exciting and open up new possibilities for what the web can do to serve society and create positive experiences. However, more computation in the user’s web browser means more energy used by their devices. This has implications not just environmentally, but also for user experience and inclusivity. Applications that put a heavy processing load on the user’s device can inadvertently exclude users with older, slower devices and cause batteries on phones and laptops to drain faster. Furthermore, if we build web applications that require the user to have up-to-date, powerful devices, people throw away old devices much more frequently. This not only harms the environment, but also places a disproportionate financial burden on the poorest members of society.

Partly because the tools are limited, and partly because there are so many different models of devices, it’s difficult to measure website energy consumption on end users’ devices. One tool we do currently have is the Energy Impact monitor inside the developer console of the Safari browser (Fig 2.5).

You know when your computer’s cooling fans start spinning so frantically that you suspect it might take off when you load a website? That’s essentially what this tool is measuring.

It shows us the percentage of CPU used and how long the CPU is in use while the web page loads, and uses these figures to generate an energy impact rating. It doesn’t give us precise data for the amount of electricity used in kilowatt-hours, but the information it does provide can be used to benchmark how efficiently your websites use energy and set targets for improvement.