The personalization space is real, sitting between the dream of getting it right and the fear of it going wrong (like when we encounter “persofails” in the vein of a company that repeatedly urges everyday consumers to buy more toilet seats). It’s a particularly confusing place to be a modern professional without a map or a strategy.

Because successful personalization depends so heavily on each team’s skill, technology, and market position, there is no Lonely Planet and there are few tour guides for those of you who want to personalize.

But you can ensure that your team has packed its bags sensibly.

There’s a DIY formula to increase your chances of success. At the very least, you’ll defuse your boss’s irrational exuberance. Before the group sets out, you’ll need to plan properly.

We refer to it as prepersonalization.

Behind the audio

Consider Spotify’s DJ feature, which was introduced last month.

We’re used to seeing the polished final result of a personalization feature. Before the year-end award, the making-of backstory, or the behind-the-scenes victory lap, a personalized feature had to be conceived, budgeted, and prioritized. Before any personalization feature goes live in your product or service, it lives amid a backlog of worthy ideas for expressing customer experiences more dynamically.

How do you decide where to place your personalization bets? How do you design consistent interactions that won’t trip up users or, worse, breed mistrust? We’ve found that for many budgeted programs to justify their ongoing investments, they first needed one or more workshops to bring together key stakeholders and the internal users of the technology. Make yours count.

We’ve witnessed the same evolution up close with our clients, from big tech to fledgling startups. In our experiences with working on small and large personalization efforts, a program’s ultimate track record—and its ability to weather tough questions, work steadily toward shared answers, and organize its design and technology efforts—turns on how effectively these prepersonalization activities play out.

Time and again, effective workshops separate future success stories from unsuccessful efforts, saving countless hours, resources, and collective well-being.

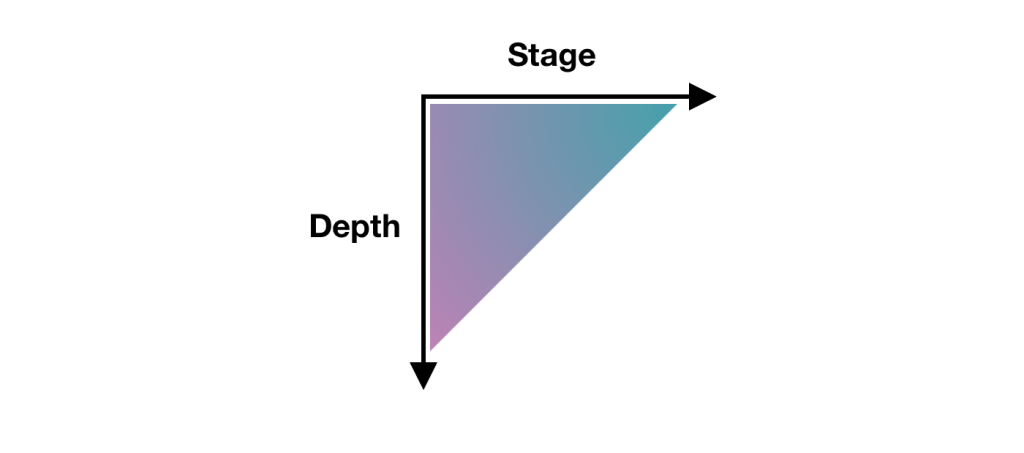

A personalization practice involves a multiyear effort of testing and feature development. It’s not a tech stack switch-flip. It’s best managed as a backlog that often evolves through three steps:

- customer experience optimization (CXO, also known as A/B testing or experimentation)

- always-on automations (whether rules-based or machine-generated)

- mature features or standalone product development (such as Spotify’s DJ experience)

This is why we created our progressive personalization framework and why we’re field-testing an accompanying deck of cards: we believe that there’s a base grammar, a set of “nouns and verbs” that your organization can use to design experiences that are customized, personalized, or automated. You won’t require these cards. But we strongly recommend that you create something similar, whether that might be digital or physical.

Set your kitchen timer

How long does it take to cook up a prepersonalization workshop? The surrounding assessment activities that we recommend including can (and often do) span weeks. For the core workshop, we recommend aiming for two to three days. Here’s a summary of our broader approach along with details on the most essential first-day activities.

The full arc of the wider workshop is threefold:

- Kickstart: This sets the terms of engagement as you focus on the opportunity as well as the readiness and drive of your team and your leadership.

- Plan your work: This is the heart of the card-based workshop activities where you specify a plan of attack and the scope of work.

- Work your plan: This stage essentially entails creating a competitive environment in which team members can individually present their own pilots that each contain a proof-of-concept project, its business case, and its operating model.

Give yourself at least a day, split into two large time blocks, to power through a concentrated version of those first two phases.

Kickstart: Whet your appetite

We call the first lesson the “landscape of connected experience.” It looks at the possibilities for personalization at your company. A connected experience, in our parlance, is any UX requiring the orchestration of multiple systems of record on the backend. This might be a marketing-automation platform combined with a content-management system. It could be a digital-asset manager combined with a customer-data platform.

Spark conversation by naming consumer and business-to-business examples of connected experience interactions that you admire, find familiar, or even dislike. This should cover a representative range of personalization patterns, including automated app-based interactions (such as onboarding sequences or wizards), notifications, and recommenders. We have a catalog of these in the cards. Here’s a list of 142 different interactions to jog your thinking.

It’s all about setting the tone. What are the possible paths for the practice in your organization? Here’s a long-form primer and a strategic framework for a broad perspective.

Assess each example that you discuss for its complexity and the level of effort that you estimate that it would take for your team to deliver that feature (or something similar). We break down connected experiences into five categories in our cards: functions, features, experiences, complete products, and portfolios. Size your own build here. This will help to draw attention to the benefits of ongoing investment as well as the difference between what you deliver right now and what you want to deliver in the future.

Next, have your team plot each idea on the following 2×2 grid, which lays out the four enduring arguments for a personalized experience. This is crucial because it emphasizes how personalization can affect your own methods of working as well as your external customers. It’s also a reminder (which is why we used the word argument earlier) of the broader effort beyond these tactical interventions.

Each team member should decide where they would like to place your company’s emphasis for your product or service. Naturally, you can’t prioritize all of them. Here, the goal is to show how different departments may view their own upsides to the effort, which can vary from one department to the next. Documenting your desired outcomes lets you see how the team aligns internally across representatives from different departments or functional areas.

The third and final kickstart activity is about naming your personalization gap. Is your customer journey well documented? Will data and privacy compliance be too big of a challenge? Do you have content metadata needs that you have to address? (We’re pretty sure you do; it’s just a matter of acknowledging the magnitude of that need and finding a solution.) In our cards, we’ve noted a number of program risks, including common team dispositions. For instance, our Detractor card lists six behaviors that hinder a program’s progress.

Effectively collaborating and managing expectations is critical to your success. Consider the potential obstacles to your progress in the future. Press the participants to name specific steps to overcome or mitigate those barriers in your organization. According to research, personalization initiatives face a number of common obstacles.

At this point, you’ve hopefully discussed sample interactions, emphasized a key area of benefit, and flagged key gaps. Good: you’re ready to continue.

Hit that test kitchen

Next, let’s take a look at what you’ll need to create personalization recipes. Personalization engines, which are robust software suites for automating and expressing dynamic content, can intimidate new customers. Their capabilities are sweeping and powerful, and they present broad options for how your organization can conduct its activities. This raises the question: Where do you begin when you’re configuring a connected experience?

The key here is to avoid treating the installed software (as one of our client executives humorously put it) like some sort of dream kitchen. These software engines are more like test kitchens where your team can begin devising, tasting, and refining the snacks and meals that will become a part of your personalization program’s regularly evolving menu.

Over the course of the workshop, the ultimate menu of the prioritized backlog will come together. And creating “dishes” is the way that you’ll have individual team stakeholders construct personalized interactions that serve their needs or the needs of others.

The dishes will be made using recipes that have predetermined ingredients.

Verify your ingredients

Like a good product manager, you’ll make sure you have everything you need to make your desired interaction (or that you can figure out what needs to be added to your pantry) and that you validate with the right stakeholders present. These ingredients include the audience that you’re targeting, content and design elements, the context for the interaction, and your measure for how it’ll come together.

This doesn’t just involve identifying requirements. Documenting your personalizations as a series of if-then statements lets the team:

- compare findings against a unified approach for developing features, much as artists paint with the same color palette,

- specify a consistent set of interactions that users find uniform or familiar,

- and develop parity across performance measurements and key performance indicators.

This helps you streamline your designs and your technical efforts while you deliver a shared palette of core motifs of your personalized or automated experience.

Create a recipe

What ingredients are important to you? Consider a who-what-when-why construct:

- Who are your key audience segments or groups?

- What kind of content will you give them, in what design elements, and under what circumstances?

- And for which business and user benefits?

Five years ago, we created these cards and card categories. We regularly play-test their fit with conference audiences and clients, and we still encounter new possibilities. But they all follow an underlying who-what-when-why logic.

Here are three examples for a subscription-based reading app, which you can generally follow from right to left in the cards in the accompanying photo below.

- Nurture personalization: When a guest or an unknown visitor interacts with a product title, a banner or alert bar appears that makes it easier for them to encounter a related title they may want to read, saving them time.

- Welcome automation: An email is sent when a new user registers to highlight the breadth of the content catalog and convert them to happy subscribers.

- Winback automation: Before their subscription lapses or after a recent failed renewal, a user is sent an email that gives them a promotional offer to suggest that they reconsider renewing or to remind them to renew.
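
As a minimal sketch, the three recipes above can be written down as who-what-when-why records and rendered as if-then statements. The `Recipe` class, its field names, and the `missing_ingredients` helper are hypothetical illustrations; they are not part of the card deck or of any particular personalization engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recipe:
    """One personalization recipe in who-what-when-why form (hypothetical structure)."""
    name: str
    who: str   # audience segment
    when: str  # context that triggers the interaction
    what: str  # content or design element delivered
    why: str   # business or user benefit

    def as_if_then(self) -> str:
        """Render the recipe as a single if-then statement."""
        return f"IF {self.who} {self.when}, THEN {self.what}, SO THAT {self.why}."


# The three subscription-based reading app examples from the text.
RECIPES = [
    Recipe(
        name="Nurture personalization",
        who="a guest or unknown visitor",
        when="interacts with a product title",
        what="show a banner or alert bar pointing to a related title",
        why="they save time finding something to read",
    ),
    Recipe(
        name="Welcome automation",
        who="a new user",
        when="registers",
        what="send an email highlighting the breadth of the content catalog",
        why="they convert to a happy subscriber",
    ),
    Recipe(
        name="Winback automation",
        who="a subscriber",
        when="is about to lapse or has a recent failed renewal",
        what="send an email with a promotional offer",
        why="they reconsider or remember to renew",
    ),
]


def missing_ingredients(recipe: Recipe) -> list[str]:
    """Return the names of any who-what-when-why fields left blank."""
    return [
        field for field in ("who", "when", "what", "why")
        if not getattr(recipe, field).strip()
    ]


if __name__ == "__main__":
    for recipe in RECIPES:
        print(f"{recipe.name}: {recipe.as_if_then()}")
```

Writing every recipe in the same shape makes gaps easy to spot: a draft card with an empty `why`, for example, shows up in `missing_ingredients` before anyone pitches it to the team.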

A good preworkshop activity might be to consider a first draft of what these cards might be for your organization, though we’ve also found that cocreating the recipes themselves can sometimes help this process. Start with a set of blank cards, and begin labeling and grouping them through the design process, eventually distilling them to a refined subset of highly useful candidate cards.

The later stages of the workshop could be characterized as moving from individual recipes toward a cookbook, much like a more nuanced customer-journey mapping. Individual “cooks” will pitch their recipes to the team, using a common jobs-to-be-done format so that measurability and results are baked in, and from there, the resulting collection will be prioritized for finished design and delivery to production.

Better kitchens require better architecture

Simplifying a customer experience is a complicated effort for those who are inside delivering it. Beware of anyone who says otherwise. With that being said, “Complicated problems can be hard to solve, but they are addressable with rules and recipes.”

When personalization becomes a laugh line, it’s because a team is overfitting: they aren’t designing with their best data. Like a sparse pantry, every organization has metadata debt to go along with its technical debt, and this creates a drag on personalization effectiveness. For instance, your AI’s output quality is in fact limited by your IA. Spotify’s poster-child prowess today was unfathomable before they acquired a seemingly modest metadata startup that now powers its underlying information architecture.

You can definitely stand the heat

Personalization technology opens a doorway into a confounding ocean of possible designs. Only a deliberate and cooperative approach will produce the desired outcome. So banish the dream kitchen. Instead, head to the test kitchen to save time, preserve job security, and steer clear of the fanciful ideas that originate far from the doers in your organization. There are meals to serve and mouths to feed.

You have a better chance of lasting success and sound beginnings with this workshop framework. Wiring up your information layer isn’t an overnight affair. However, you’ll have solid ground for success if you use the same cookbook and the same recipes. We designed these activities to make your organization’s needs concrete and clear, long before the hazards pile up.

There are costs associated with investing in this kind of technology and product design, but the ability to size up your unique situation and your digital capabilities is time well spent. Don’t squander it. The proof, as they say, is in the pudding.