Here’s the key. You need to get stakeholders themselves to identify high-risk assumptions and buried complexity, so that they become just as motivated as you are to get answers from users. Basically, you need to make them think it’s their idea.

In this article, I’ll show you how to collaboratively expose misalignment and gaps in the team’s shared understanding by bringing the group together around two simple questions:

- What are the objects?

- What are the relationships between those objects?

A gauntlet between research and screen design

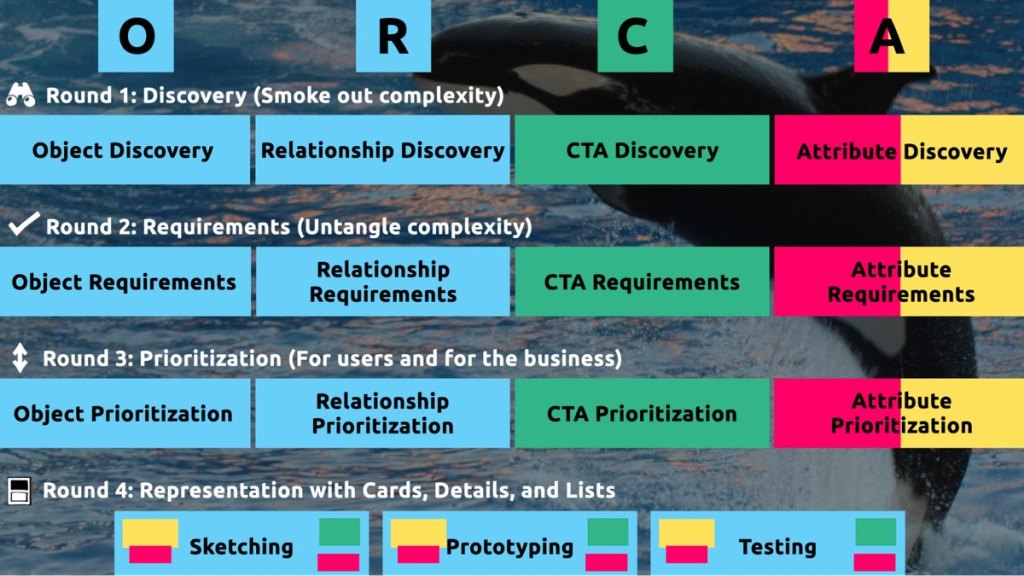

These two questions align to the first two steps of the ORCA process, which may become your new best friend when it comes to reducing guesswork. What’s ORCA, then? Glad you asked.

ORCA stands for Objects, Relationships, CTAs, and Attributes, and it outlines a process for creating solid object-oriented user experiences. Object-oriented UX (OOUX) is my design philosophy. ORCA is an iterative methodology for synthesizing user research into an elegant structural foundation to support screen and interaction design. My work as a UX designer has become more creative, productive, successful, fun, proper, and meaningful thanks to OOUX and ORCA.

The ORCA process has four iterative rounds and a whopping fifteen steps. In each round we get more clarity on our Os, Rs, Cs, and As.

I occasionally refer to ORCA as a “garbage in, garbage out” process. To ensure that the testable prototype produced in the final round actually tests well, the process needs to be fed by good research. But if you don’t have a lot of research, the beginning of the ORCA process serves another purpose: it helps you sell the need for research.

In other words, the ORCA process serves as a gauntlet between research and design. With good research, you can gracefully ride the killer whale from research into design. But without good research, the process effectively spits you back into research with a cache of specific open questions.

Getting in the same curiosity-boat

What gets us into trouble is not what we don’t know. It’s what we know for sure that just ain’t so.

Mark Twain

The first two steps of the ORCA process—Object Discovery and Relationship Discovery—shine a spotlight on the dark, dusty corners of your team’s misalignments and any inherent complexity that’s been swept under the rug. It starts to reveal what this timeless comic so skillfully demonstrates:

This is one reason why so many UX designers are frustrated in their job and why many projects fail. Every decision-maker is confident in their own mental picture, which is another reason why we frequently can’t sell research.

Once we expose hidden fuzzy patches in each picture and the differences between them all, the case for user research makes itself.

However, how we go about doing this is crucial. However much we might want to, we can’t just tell everyone, “YOU ARE WRONG!” Instead, we need to facilitate and guide our team members to self-identify holes in their picture. When stakeholders take ownership of their assumptions and gaps in understanding, BAM! Suddenly, UX research is not such a hard sell, and everyone is aboard the same curiosity-boat.

Let’s say your users are physicians. And you have no idea how doctors use the system you are tasked with redesigning.

You might try to sell research by honestly saying: “We need to understand doctors better! What bothers them the most? How do they use the current app?” Here’s the issue with that, though. Those questions are vague, and the answers to them don’t feel acutely actionable.

Instead, you want your stakeholders themselves to ask super-specific questions. This is more like the kind of conversation you need to facilitate. Let’s listen in:

“Wait a sec, how frequently do doctors share patients? Does a patient in this system have primary and secondary doctors?”

“Can a patient even have more than one primary doctor?”

“Is it a ‘primary doctor’ or a ‘primary caregiver’? Can’t a nurse practitioner fill that role?”

“No, caregivers are something else… That’s the patient’s family contacts, right?”

“So are caregivers in this redesign’s scope?”

“Yeah, because if a caregiver is present at an appointment, the doctor needs to note that. Like, tag the caregiver on the note… Or on the appointment?”

Now we are getting somewhere. Do you see how powerful it can be getting stakeholders to debate these questions themselves? The diabolical goal is to gently and diplomatically shake their confidence.

When these kinds of questions bubble up collaboratively and come directly from the mouths of your stakeholders and decision-makers, suddenly, designing screens without knowing the answers to these questions seems incredibly risky, even silly.

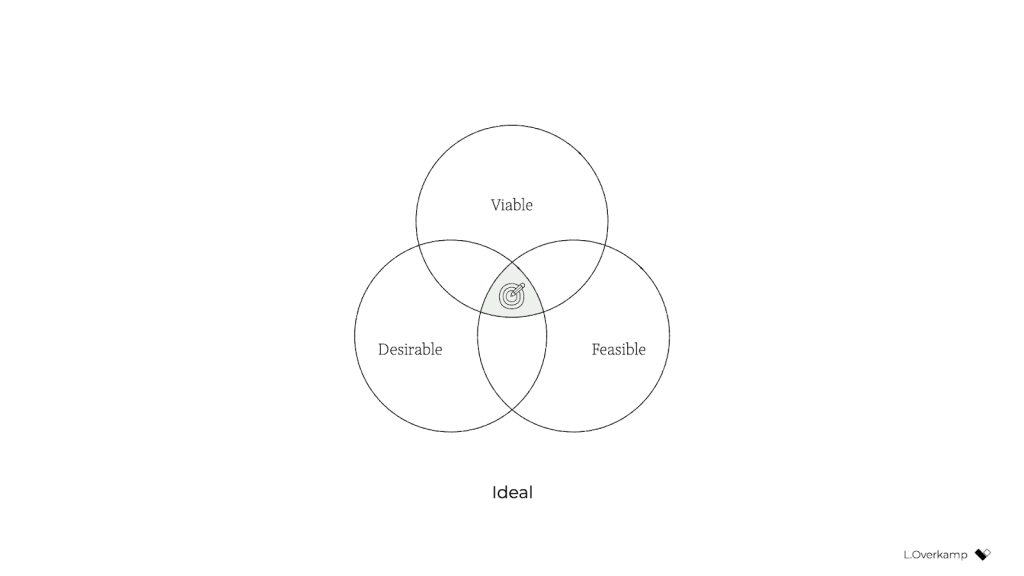

If we create software without understanding the real-world information environment of our users, we will likely create software that does not align to the real-world information environment of our users. And the most likely result is a software product that is more confusing, more complicated, and less intuitive.

The two questions

But how do we get to these kinds of thorny questions diplomatically, effectively, collaboratively, and reliably?

We can do this by starting with those two big questions that align to the first two steps of the ORCA process:

- What are the objects?

- What are the relationships between those objects?

In practice, getting to these answers is easier said than done. I’m going to demonstrate how an Object Definition Workshop can be organized using these two straightforward questions. During this workshop, these “seed” questions will blossom into dozens of specific questions and shine a spotlight on the need for more user research.

Prep work: Noun foraging

In the next section, I’ll show you how to run an Object Definition Workshop with your stakeholders (and entire cross-functional team, hopefully). But first, you need to do some prep work.

In essence, look for nouns that are particular to the business or industry of your project, and do it across at least a few sources. I call this noun foraging.

Here are just a few excellent noun foraging sources:

- the product’s marketing site

- the product’s competitors’ marketing sites (competitive analysis, anyone?)

- the already-existing product (check the labels!)

- user interview transcripts

- notes from interviews with stakeholders or vision documents from stakeholders

Put your detective hat on, my dear Watson. Get resourceful and leverage what you have. If all you have is a marketing website, some screenshots of the current legacy system, and access to customer service chat logs, then use those.

As you peruse these sources, watch for the nouns that are used over and over again, and start listing them (preferably on blue sticky notes if you’ll be creating an object map later!).
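If your sources are already digital text, a quick script can help with the tallying. Here is a minimal sketch in Python, not part of ORCA itself. The `forage` helper, the sample snippets, and the hand-picked candidate list are all hypothetical and only illustrate the idea of spotting nouns that recur across sources.

```python
import re
from collections import Counter


def forage(sources: dict[str, str], candidates: list[str]) -> Counter:
    """Count how many sources mention each candidate noun at least once."""
    tally = Counter()
    for text in sources.values():
        lowered = text.lower()
        for noun in candidates:
            # Whole-word match, allowing a simple plural "s"
            if re.search(rf"\b{re.escape(noun.lower())}s?\b", lowered):
                tally[noun] += 1
    return tally


# Hypothetical snippets standing in for real foraging sources
sources = {
    "marketing site": "Organize every thread. Attachments stay with the thread.",
    "support chat": "I can't find the attachment from that contact.",
    "interview notes": "She searches by contact, then scans each thread.",
}
candidates = ["thread", "attachment", "contact", "dropdown"]

tally = forage(sources, candidates)
# Nouns that show up in at least two sources are worth a sticky note
recurring = sorted(noun for noun, count in tally.items() if count >= 2)
print(recurring)
```

A script like this only counts words you already suspected. Reading the sources yourself is still what surfaces the nouns you didn’t know to look for.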

You’ll want to focus on nouns that might represent objects in your system. If you are having trouble determining if a noun might be object-worthy, remember the acronym SIP and test for:

- Structure

- Instances

- Purpose

Consider, for instance, a library app. Is “book” an object?

Structure: can you think of a few attributes for this potential object? Title, author, publish date… Yep, it has structure. Check!

Instances: what are some instances of this potential “book” object? Can you name a few? The Alchemist, Ready Player One, Everybody Poops… OK, check!

Purpose: why is this object important to the users and business? Well, “book” is what our library client is providing to people and books are why people come to the library … Check, check, check!
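For those who think in code, the SIP test can be sketched as a tiny checklist. This is only an illustration under my own assumptions: the `Candidate` class, the `passes_sip` function, and the “at least two” thresholds are hypothetical, chosen to mirror “a few attributes” and “a few instances.”

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    noun: str
    attributes: list[str] = field(default_factory=list)  # Structure
    instances: list[str] = field(default_factory=list)   # Instances
    purpose: str = ""                                     # Purpose


def passes_sip(candidate: Candidate) -> bool:
    """Return True when the noun shows structure, instances, and purpose."""
    has_structure = len(candidate.attributes) >= 2  # assumed threshold
    has_instances = len(candidate.instances) >= 2   # assumed threshold
    has_purpose = bool(candidate.purpose.strip())
    return has_structure and has_instances and has_purpose


book = Candidate(
    noun="book",
    attributes=["title", "author", "publish date"],
    instances=["The Alchemist", "Ready Player One", "Everybody Poops"],
    purpose="What the library provides and why people come to it",
)
dropdown = Candidate(noun="dropdown")  # a component, not an object

print(passes_sip(book), passes_sip(dropdown))
```

The judgment calls (is this attribute real? does this purpose matter to users?) still belong to people in a room, not to a function.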

Concentrate on capturing the nouns with SIP as you go noun foraging. Avoid capturing components like dropdowns, checkboxes, and calendar pickers. Your UX system is not your design system! Components are just the packaging for objects; they are a means to an end. No one is coming to your digital place to play with your dropdown! They are coming for the VALUABLE THINGS and what they can do with them. Those things, or objects, are what we are trying to identify.

Let’s say we work for a startup disrupting the email experience. This is how I’d start my noun foraging.

First, I’d look at my own email client, which happens to be Gmail. I’d then look at Outlook and the new HEY email. I would examine Hotmail, Yahoo, and even Basecamp and other “email replacers.” I’d read some articles, reviews, and forum threads where people are complaining about email. While doing all this, I would look for and write down the nouns.

(Before moving on, feel free to go noun foraging for this hypothetical product, too, and then scroll down to see how closely our lists correspond. Just don’t get lost in your own emails! Come back to me!)

Drumroll, please…

Here are a few nouns I came up with during my noun foraging:

- email message

- thread

- contact

- client

- rule/automation

- email address that is not a contact?

- contact groups

- attachment

- Google doc file / other integrated file

- newsletter? (HEY views this in a different way)

- saved responses and templates

Scan your list of nouns and pick out words that you are completely clueless about. It might be a client or automation in our email example. Do as much homework as you can before your session with stakeholders: google what’s googleable. But other terms might be so specific to the product or domain that you need to have a conversation about them.

Aside: Here are some real nouns that I needed to clarify while working on my own projects in the past.

- Record Locator

- Incentive House

- Augmented Line Item

- Curriculum-Based Measurement Probe

A list of nouns that represent potential objects and a short list of nouns that need to be further defined are really all you need to prepare for the workshop session.

Facilitate an Object Definition Workshop

You could start your workshop by doing noun foraging; this can be done collaboratively. If you have five people in the room, pick five sources, assign one to every person, and give everyone ten minutes to find the objects within their source. When the time’s up, come together and find the overlap. Here, affinity mapping is your friend!

If your team is short on time and might be reluctant to do this kind of grunt work (which is usually the case), do your own noun foraging beforehand, but be prepared to show your work. I enjoy showing screenshots of documents and screens with all the nouns highlighted. Bring the artifacts of your process, and start the workshop with a five-minute overview of your noun foraging journey.

HOT TIP: before jumping into the workshop, frame the conversation as a requirements-gathering session to help you better understand the scope and details of the system. You don’t need to tell them you’re looking for gaps in the team’s understanding so that you can demonstrate the need for more user research; that will be our little secret. Instead, go into the session optimistically, as if your knowledgeable stakeholders and PMs and biz folks already have all the answers.

Then, let the question whack-a-mole commence.

1. What is this thing?

Want to have some genuine fun? At the beginning of your session, ask stakeholders to privately write definitions for the handful of obscure nouns you might be uncertain about. Then, have everyone present their cards at once, and see if you get different definitions (you will). This is gold for exposing misalignment and starting great conversations.

As your discussion unfolds, capture any agreed-upon definitions. And when uncertainty appears, quietly (but clearly) begin a parking lot of “open questions.”

Here’s a fantastic follow-up to solidify definitions:

2. Do our users know what these things are? What do users call this thing?

Stakeholder 1: They probably call email clients “apps”. But I’m not certain.

Stakeholder 2: Automations are often called “workflows”, I think. Or, maybe users think workflows are something different.

If a more user-friendly term emerges, ask the group whether they can agree to use only that term moving forward. This way, the team can better align to the users’ language and mindset.

Okay, let’s get to the next part.

If you have two or more objects that seem to overlap in purpose, ask one of these questions:

3. Are these the same thing? Or are these different? If they are different, how are they different?

You: Is a saved response the same as a template?

Stakeholder 1: Yes! Absolutely.

Stakeholder 2: I don’t think so… A saved response is text with links and variables, but a template is more about the look and feel, like default fonts, colors, and placeholder images.

Continue to build out your growing glossary of terms. And continue to capture areas of uncertainty in your “open questions” parking lot.

If you successfully determine that two similar things are, in fact, different, here’s your next follow-up question:

4. What’s the relationship between these objects?

You: Are saved responses and templates in any way connected to each other?

Stakeholder 3: Yeah, a template can be applied to a saved response.

You, always with the follow-ups: When is the template applied to a saved response? Does that happen when the user is creating the saved response? Or when they apply the saved response to an email? How does that actually work?

Listen. Capture uncertainty. When the number of “open questions” reaches a critical mass, pause to start assigning questions to groups or individuals. Some questions might be for the dev team (hopefully at least one developer is in the room with you). A question or two might be specifically for someone who couldn’t make it to the workshop. And many questions will need to be labeled “user.”
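If you keep the parking lot in a doc or spreadsheet rather than on sticky notes, the labeling described above might look something like this sketch. The `OpenQuestion` class, the `group_by_owner` helper, and the owner assigned to each question are hypothetical; in a real session the group decides who owns what.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class OpenQuestion:
    text: str
    owner: str  # for example "dev team", "absent colleague", or "user"


def group_by_owner(questions: list[OpenQuestion]) -> dict[str, list[str]]:
    """Bucket parking-lot questions by who needs to answer them."""
    grouped = defaultdict(list)
    for question in questions:
        grouped[question.owner].append(question.text)
    return dict(grouped)


# Owners below are assumptions made for illustration
parking_lot = [
    OpenQuestion("Do users call email clients 'apps'?", "user"),
    OpenQuestion("Do users think workflows differ from automations?", "user"),
    OpenQuestion("When is a template applied to a saved response?", "dev team"),
]

grouped = group_by_owner(parking_lot)
# Everything labeled "user" becomes input for the research plan
for_user_research = grouped.get("user", [])
print(len(for_user_research), "questions need users")
```

The growing “user” bucket is the point: it turns vague unease into a concrete list.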

Do you see how we are building up to our UXR sales pitch?

5. Is this object in scope?

Your next question helps the team focus on what matters most to your users. You can simply ask, “Are saved responses in scope for our first release?”, but I’ve got a better, more devious strategy.

By now, you should have a list of clearly defined objects. Ask participants to sort these objects from most to least important, either in small breakout groups or individually. Then, like you did with the definitions, have everyone reveal their sort order at once. Surprisingly or not, it’s not unusual for the VP to place something like “saved responses” at the top of the list while everyone else places it at the bottom. Try not to look too smug as you inevitably expose more misalignment.

I did this for a startup a few years ago. We displayed the three groups’ wildly different sort orders on the whiteboard.

The CEO nodded his head and said, “This is why we haven’t been able to move forward in two years.”

Admittedly, it’s tragic to hear that, but as a professional, it feels pretty awesome to be the one who facilitated a watershed realization.
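If you collect the sort orders digitally, you can quantify the disagreement before the big reveal. The following is a rough sketch under my own assumptions: `rank_spread` is a hypothetical helper, the three sort orders are invented, and everyone is assumed to have ranked the same set of objects.

```python
def rank_spread(sort_orders: dict[str, list[str]]) -> dict[str, int]:
    """For each object, the gap between its best and worst rank."""
    objects = next(iter(sort_orders.values()))
    spread = {}
    for obj in objects:
        # Position 0 is "most important" in each participant's list
        ranks = [order.index(obj) for order in sort_orders.values()]
        spread[obj] = max(ranks) - min(ranks)
    return spread


# Invented sort orders for illustration only
sort_orders = {
    "VP": ["saved response", "email message", "thread", "contact"],
    "designer": ["email message", "thread", "contact", "saved response"],
    "developer": ["email message", "contact", "thread", "saved response"],
}

spread = rank_spread(sort_orders)
most_contested = max(spread, key=spread.get)
print(most_contested, spread[most_contested])
```

The object with the widest spread is where the conversation should start.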

Once you have a good idea of in-scope, clearly defined things, this is when you move on to doing more relationship mapping.

6. Create a visual representation of the objects’ relationships

We’ve already done a bit of this while figuring out whether two things are different, but this time, ask the team about every possible relationship. For each object, ask how it relates to all the other objects. In what ways are the objects connected? To visualize all the connections, pull out your dependable boxes-and-arrows technique. Here, we are connecting our objects with verbs. I prefer to use simple statements like “has a” and “has many.”
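The boxes and arrows can also be captured as plain data, which makes it easy to see which pairs of objects the group has not discussed yet. This is a minimal sketch, not an official ORCA artifact. The object list is taken from the email example, while the three relationships are my own guesses for illustration.

```python
from itertools import combinations

objects = ["email message", "thread", "contact", "attachment"]

# Every unordered pair is a prompt: "How does A relate to B?"
pairs_to_discuss = list(combinations(objects, 2))

# Answers captured so far as (subject, verb, object); all hypothetical
relationships = [
    ("thread", "has many", "email message"),
    ("email message", "has many", "attachment"),
    ("email message", "has a", "contact"),  # assumed to mean the sender
]

covered = {frozenset((subject, obj)) for subject, _, obj in relationships}
still_open = [pair for pair in pairs_to_discuss if frozenset(pair) not in covered]

for subject, verb, obj in relationships:
    print(f"{subject} --{verb}--> {obj}")
print("Not yet discussed:", still_open)
```

With four objects there are only six pairs to cover. With twelve objects there are sixty-six, which is a good argument for settling scope before mapping relationships.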

This system modeling activity brings up all sorts of new questions:

- Can a saved response have attachments?

- Can a saved response use a template? If so, if an email uses a saved response with a template, can the user override that template?

- Do users want to see all the emails they sent that included a particular attachment? For example, “show me all the emails I sent with ProfessionalImage.jpg attached. I’ve changed my professional photo and I want to alert everyone to update it.”

Solid answers might come directly from the workshop participants. Great! Capture that new shared understanding. However, as uncertainty arises, keep adding new questions to your expanding parking lot.

Light the fuse

You’ve strategically positioned the explosives all along the floodgates. Now you simply have to light the fuse and BOOM. Watch the buy-in for user research flooooow.

Have the group reflect on the list of open questions before the workshop ends. Make plans for getting answers internally, then focus on the questions that need to be brought before users.

Now comes your final step. Take those questions you’ve compiled for user research and discuss the level of risk associated with NOT answering them. Ask, “If we design without an answer to this question, if we make up our own answer and we are wrong, how bad might that turn out?”

With this approach, we are getting our decision-makers to fight for user research, because they themselves have labeled the questions as high-risk. Sorry, not sorry.

Now is your moment of truth. With everyone in the room, ask for a reasonable budget of time and money to conduct 6–8 user interviews focused specifically on these questions.

HOT TIP: if you are new to UX research, please note that you’ll likely need to rephrase the questions that came up during the workshop before you present them to users. Make sure your questions are open-ended and don’t lead the user toward any default answers.

Final words: Hold the screen design!

Seriously, if at all possible, never design screens again without first answering these fundamental questions: what are the objects and how do they relate?

I promise you this: if you can secure a shared understanding between the business, design, and development teams before you start designing screens, you will have less heartache and save more time and money, and (it almost feels like a bonus at this point!) users will be more receptive to what you put out into the world.

I sincerely hope this helps you win the time and budget to go talk to your users and get a clear understanding of what you are designing before you begin creating screens. If you find success using noun foraging and the Object Definition Workshop, there’s more where that came from in the rest of the ORCA process, which will help prevent even more late-in-the-game scope tugs-of-war and strategy pivots.

Wish you the best of luck! Now go sell research!