The mobile-first design methodology is great: it focuses on what really matters to the user, it’s well-practiced, and it’s been a common design pattern for years. So developing your CSS mobile-first should also be great, too…right?

Well, not necessarily. Classic mobile-first CSS development is based on the principle of overwriting style declarations: you begin your CSS with default style declarations, and overwrite and/or add new styles as you add breakpoints with min-width media queries for larger viewports (for a good overview see “What is Mobile First CSS and Why Does It Rock?”). But all those exceptions create complexity and inefficiency, which in turn can lead to an increased testing effort and a code base that’s harder to maintain. Admit it—how many of us willingly want that?

Mobile-first CSS may yet be the best tool for your own projects, but first you need to evaluate how appropriate it is in light of the visual design and user interactions you’re working on. To help you get started, here’s how I go about tackling the factors you need to watch for, and I’ll discuss some alternative solutions if mobile-first doesn’t seem to suit your project.

Benefits of mobile-first

Some of the things to like about mobile-first CSS development, and the reasons it’s been the de facto development methodology for so long, make a lot of sense:

Development hierarchy. One thing you definitely get from mobile-first is a clear development hierarchy: you just focus on the mobile view and get developing.

Tried and tested. It’s a tried-and-true method that has worked for years because it solves a problem really well.

Prioritizes the mobile view. The mobile view is the simplest and arguably the most important, as it covers all the key user journeys and often accounts for a higher proportion of user visits (depending on the project).

Prevents desktop-centric development. Because development is done on desktop computers, it can be tempting to focus on the desktop view first. But thinking about mobile from the start keeps us from getting stuck later on; no one wants to spend their time retrofitting a desktop-centric site to work on mobile devices!

Drawbacks of mobile-first

Setting style declarations at lower breakpoints and then overwriting them at higher breakpoints can have undesirable consequences:

More complexity. The farther up the breakpoint hierarchy you go, the more unnecessary code you inherit from lower breakpoints.

Higher CSS specificity. Styles that have been reverted to their browser default value in a class name declaration now have a higher specificity. This can be a headache on large projects when you want to keep the CSS selectors as simple as possible.

Requires more regression testing. Changes to the CSS at a lower view (such as adding a new style) require all higher breakpoints to be regression tested.

The browser can’t prioritize CSS downloads. At wider breakpoints, classic mobile-first min-width media queries don’t leverage the browser’s capability to download CSS files in priority order.

The problem of property value overrides

There is nothing inherently wrong with overwriting values; CSS was designed to do just that. Still, inheriting incorrect values is unhelpful and can be burdensome and inefficient. It can also lead to increased style specificity when you have to overwrite styles to reset them to their defaults, which may cause issues later on, especially if you are using a combination of bespoke CSS and utility classes. We won’t be able to use a utility class for a style that has been reset with a higher specificity.
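To make the specificity problem concrete, here is a minimal sketch in SCSS. The `.sidebar` wrapper and the `.u-padding-40` utility class are hypothetical names chosen for illustration, and the 1024px breakpoint is an assumption.

```scss
// Hypothetical utility class, specificity (0,1,0).
.u-padding-40 {
  padding: 40px;
}

// Hypothetical component selector, specificity (0,2,0).
.sidebar .my-block {
  padding: 20px;

  @media (min-width: 1024px) {
    // Reset to the default value. Media queries add no specificity,
    // so this declaration still carries the selector's (0,2,0) and
    // beats .u-padding-40 on desktop.
    padding: 0;
  }
}
```

With the reset in place, an element carrying both classes inside `.sidebar` still gets `padding: 0` on desktop. If the component padding were instead confined to ranges below 1024px, no component declaration would apply on desktop and the utility class would take effect there.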

With this in mind, I’m developing CSS with a focus on the default values much more these days. Since there’s no specific order, and no chains of specific values to keep track of, this frees me to develop breakpoints simultaneously. I concentrate on finding common styles and isolating the specific exceptions in closed media query ranges (that is, any range with a max-width set).

This approach opens up some opportunities, as you can look at each breakpoint as a clean slate. If a component’s layout looks like it should be based on Flexbox at all breakpoints, it’s fine and can be coded in the default style sheet. However, if it appears that Grid is much better for large screens and Flexbox is much better for mobile, both can be accomplished entirely independently when the CSS is entered into closed media query ranges. Additionally, developing simultaneously requires you to have a thorough understanding of any given component in all breakpoints right away. This can help identify issues in the design more quickly during the development process. We don’t want to go down the rabbit hole while creating complex mobile components, only to discover that the desktop designs are just as complex and incompatible with the HTML we created for the mobile view!
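As a rough sketch of the Flexbox-on-mobile, Grid-on-desktop case described above, the two layouts can live in separate ranges so that neither has to undo the other. The `.card-list` selector, the 1024px boundary, and the specific layout values are assumptions made for this example.

```scss
.card-list {
  // Common style: applies at every breakpoint.
  gap: 16px;

  // Closed range: Flexbox for everything below desktop.
  @media (max-width: 1023.98px) {
    display: flex;
    flex-direction: column;
  }

  // Desktop and up: Grid, with no Flexbox declarations to override.
  @media (min-width: 1024px) {
    display: grid;
    grid-template-columns: repeat(3, 1fr);
  }
}
```

Because `flex-direction` is never declared for desktop, there is nothing to reset when the layout switches to Grid.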

Though this approach isn’t going to suit everyone, I encourage you to give it a try. There are plenty of tools out there to help with concurrent development, such as Responsively App, Blisk, and many others.

Having said that, I don’t feel the order itself is particularly relevant. If you like to work on one device at a time, are comfortable with focusing on the mobile view, and are familiar with the requirements for other breakpoints, then you should definitely follow the classic development order. The key is to find common styles and exceptions so that you can include them in the appropriate stylesheet, which is a manual tree-shaking procedure! Personally, I find this a little easier when working on a component across breakpoints, but that’s by no means a requirement.

Closed media query ranges in practice

In classic mobile-first CSS we overwrite the styles, but we can avoid this by using media query ranges. To illustrate the difference (I’m using SCSS for brevity), let’s assume there are three visual designs:

- smaller than 768

- from 768 to below 1024

- 1024 and anything larger
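One possible way to express these three ranges in SCSS is sketched below. The variable and mixin names are hypothetical, and the 0.02px offset is simply a convention for keeping adjacent ranges from overlapping at the boundary.

```scss
// Breakpoint boundaries from the list above.
$tablet-min: 768px;
$desktop-min: 1024px;

// Upper bounds sit just below the next breakpoint so ranges never overlap.
$mobile-max: $tablet-min - 0.02px;   // 767.98px
$tablet-max: $desktop-min - 0.02px;  // 1023.98px

// Hypothetical mixin names, for illustration only.
@mixin mobile-only {
  @media (max-width: $mobile-max) { @content; }
}

@mixin tablet-only {
  @media (min-width: $tablet-min) and (max-width: $tablet-max) { @content; }
}

@mixin desktop-up {
  @media (min-width: $desktop-min) { @content; }
}

// Usage: the declaration applies only between 768px and 1023.98px.
.example-block {
  @include tablet-only {
    padding: 40px;
  }
}
```

The first two mixins produce closed ranges (each has a `max-width`), while `desktop-up` is open-ended at the top.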

Take a simple example where a block-level element has a default padding of “20px,” which is overwritten at tablet to be “40px” and set back to “20px” on desktop.

Classic | Closed media query range |

The subtle difference is that the mobile-first example sets the default padding to “20px” and then overwrites it at each breakpoint, setting it three times in total. In contrast, the second example sets the default padding to “20px” and only overrides it at the relevant breakpoint where it isn’t the default value (in this instance, tablet is the exception).
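The two versions being compared can be sketched as follows, reconstructed from the description above using the three breakpoints already listed. First, the classic `min-width` mobile-first version, which sets the padding three times:

```scss
// Classic mobile-first: default, then an override at every breakpoint.
.my-block {
  padding: 20px;

  @media (min-width: 768px) {
    padding: 40px;
  }

  @media (min-width: 1024px) {
    padding: 20px; // set back to the original value
  }
}
```

And the closed media query range version, which sets the default once and the tablet exception once:

```scss
// Closed range: only the tablet exception is declared.
.my-block {
  padding: 20px;

  @media (min-width: 768px) and (max-width: 1023.98px) {
    padding: 40px;
  }
}
```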

The goal is to:

- Only set styles when needed.

- Not set them with the expectation of overwriting them later on, again and again.

To this end, closed media query ranges are our best friend. If we need to make a change to any given view, we make it in the CSS media query range that applies to the specific breakpoint. We’ll be much less likely to introduce unwanted alterations, and our regression testing only needs to focus on the breakpoint we have actually edited.

Taking the above example, if we find that .my-block spacing on desktop is already accounted for by the margin at that breakpoint, and since we want to remove the padding altogether, we could do this by setting the mobile padding in a closed media query range.

```scss
.my-block {
  @media (max-width: 767.98px) {
    padding: 20px;
  }
  @media (min-width: 768px) and (max-width: 1023.98px) {
    padding: 40px;
  }
}
```

The browser default padding for our block is “0,” so instead of adding a desktop media query and using unset or “0” for the padding value (which we would need with mobile-first), we can wrap the mobile padding in a closed media query (since it is now also an exception) so it won’t get picked up at wider breakpoints. At the desktop breakpoint, we won’t need to set any padding style, as we want the browser default value.

Bundling versus separating the CSS

Back in the day, keeping the number of requests to a minimum was very important because browsers limited the number of concurrent requests (typically to around six). As a consequence, the use of image sprites and CSS bundling was the norm, with all the CSS being downloaded in one go, as one stylesheet with highest priority.

With HTTP/2 and HTTP/3 now on the scene, the number of requests is no longer the big deal it used to be. This allows us to separate the CSS into multiple files by media query. The clear benefit is that the browser can now request the CSS it currently needs with a higher priority than the CSS it doesn't. This is more performant and can reduce the overall time that page rendering is blocked.

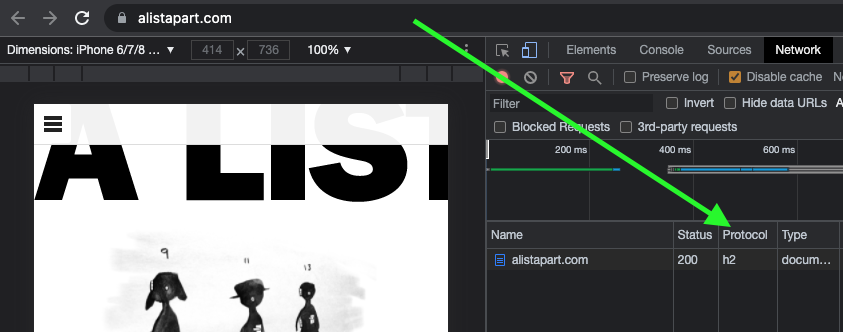

Which HTTP version are you using?

To determine which version of HTTP you're using, go to your website and open your browser's dev tools. Next, go to the Network tab and check whether the Protocol column is visible. If "h2" is listed under Protocol, it means HTTP/2 is being used.

Note: to view the Protocol in your browser's dev tools, go to the Network tab, reload your page, right-click any column header (e.g., Name), and check the Protocol column.

Also, if your site is still using HTTP/1... WHY?!! What are you waiting for? Browser support for HTTP/2 is excellent.

Splitting the CSS

Separating the CSS into individual files is a worthwhile task. Linking the separate CSS files using the relevant media attribute allows the browser to identify which files are needed immediately (because they’re render-blocking) and which can be deferred. Based on this, it allocates each file an appropriate priority.

In the following example of a website visited on a mobile breakpoint, we can see that the mobile and default CSS are loaded with "Highest" priority, since they are currently needed to render the page. The remaining CSS files (print, tablet, and desktop) are still downloaded in case they're needed later, but with "Lowest" priority.

With bundled CSS, the browser has to download the whole CSS file and parse it before rendering can begin.

While, as noted, with the CSS separated into different files linked and marked up with the relevant media attribute, the browser can prioritize the files it currently needs. Using closed media query ranges allows the browser to do this at all widths, as opposed to classic mobile-first min-width queries, where the desktop browser would have to download all the CSS with Highest priority. We can’t assume that desktop users always have a fast connection. For instance, in many rural areas, internet connection speeds are still slow.

The media queries and the number of separate CSS files will vary from project to project based on requirements, but they might look similar to the example below.

| Bundled CSS | Separated CSS |
| --- | --- |
| This single file contains all the CSS, including all media queries, and it will be downloaded with Highest priority. | Separating the CSS and specifying a |

Depending on the project’s deployment strategy, a change to one file (mobile.css, for example) would only require the QA team to regression test on devices in that specific media query range. Compare that to the prospect of deploying the single bundled site.css file, an approach that would normally trigger a full regression test.

Moving on

The adoption of mobile-first CSS was a significant milestone in web development because it allowed front-end developers to concentrate on mobile web applications rather than creating websites for desktop use and attempting to retrofit them to work on other devices.

I don't think anyone wants to return to that development model again, but it's important we don't lose sight of the issue it highlighted: that things can easily get convoluted and less efficient if we prioritize one particular device (any device) over others. For this reason, focusing on the CSS in its own right, always mindful of what is the default setting and what's an exception, seems like the natural next step. I've started to notice small simplifications in both my own CSS and other developers', and that the work is also a little more organized and effective.

In the end, simplifying CSS rule creation whenever possible is a more effective strategy than circling around with overrides. But whichever methodology you choose, it needs to suit the project. Mobile-first may—or may not—turn out to be the best choice for what's involved, but first you need to solidly understand the trade-offs you're stepping into.