“Any feedback?” is probably one of the worst ways to ask for feedback. It’s vague and open-ended, and it gives no indication of what we’re looking for. Getting good feedback starts earlier than we might expect: it starts with the request.

Starting the process of receiving feedback with a question may seem counterintuitive, but it makes sense if we consider that getting feedback can be seen as a form of design research. We wouldn’t do any research without the right questions to get the insights we need. In the same way, the best way to ask for feedback is to craft sharp questions.

Design critique is not a one-shot process. Sure, any good feedback workflow continues until the project is finished. But this is especially true for design, because design work continues iteration after iteration, from a high level down to the finest details. Each level needs its own set of questions.

And finally, as with any good research, we need to review what we got back, get to the core of its insights, and take action. Question, iteration, and review. Let’s look at each of those.

The question

Being open to feedback is essential, but we need to be specific about what we’re looking for. Just saying “Any comments?”, “What do you think?”, or “I’d love to hear your opinion” at the end of a presentation is likely to produce a lot of divergent opinions. Worse, it may lead everyone to follow the lead of the first person who speaks up. And then… we get frustrated, because vague questions like those can turn a high-level flows review into people commenting on the borders of buttons instead. That may be a worthwhile topic in itself. Once the discussion has drifted there, though, it can be hard to steer the team back to the subject you wanted to focus on.

But how do we get into this situation? It’s a mix of factors. One is that we don’t usually think of asking as part of the feedback process. Another is how natural it is to leave the question implied and expect everyone else to be on the same page. A third is that in nonprofessional conversations, there’s usually no need to be that precise. In short, we tend to underestimate the importance of the questions, so we don’t work on improving them.

The act of asking good questions guides and focuses the critique. It’s also a form of consent: it makes clear that you’re open to comments and what kind of comments you’d like to receive. It puts people in the right mental state, especially in situations when they weren’t expecting to give feedback.

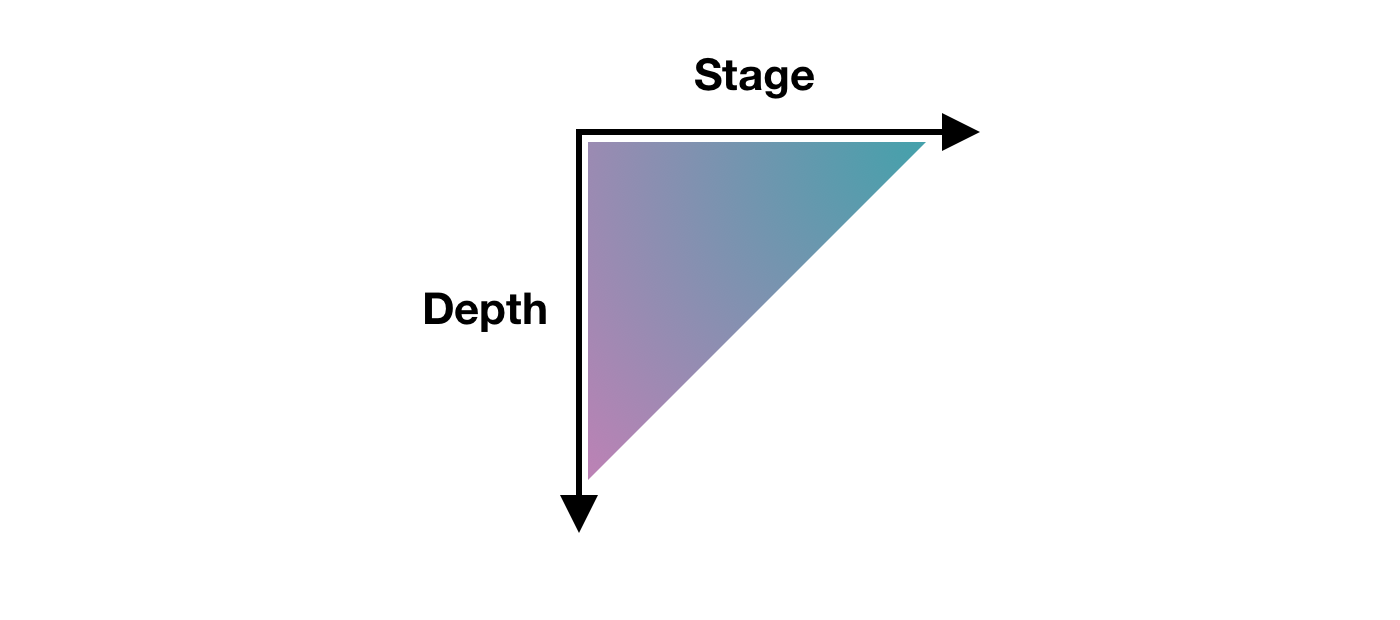

There isn’t a single best way to ask for feedback. It just needs to be specific, and specificity can take many shapes. One model for design critique that I’ve found particularly useful in my coaching is stage versus depth.

“Stage” refers to each of the steps of the process (in our case, the design process). As the work moves from user research toward the final design, the kind of feedback changes. But within a single stage, one might still check whether some assumptions are correct. One might also check whether the gathered feedback has been properly translated into updated designs as the project has evolved. The layers of user experience can serve as a starting point for potential questions. What do you want to know about: Project objectives? User needs? Functionality? Content? Interaction design? Information architecture? UI design? Navigation design? Visual design? Branding?

Here are a few example questions that are precise and to the point, each referring to a different layer:

- Functionality: Is it desirable to automate account creation?

- Interaction design: Take a look through the updated flow and let me know whether you see any steps or error states that I might’ve missed.

- Information architecture: On this page, we have two competing pieces of information. Is the structure effective in communicating them both?

- User interface design: What do you think about the error counter at the top of the page, which makes sure you can see the next error even when that error is outside the viewport?

- Navigation design: From research, we identified these second-level navigation items, but once you’re on the page, the list feels too long and hard to navigate. Do you have any suggestions for how to handle this?

- Visual design: Are the sticky notifications in the bottom-right corner visible enough?

The other axis of specificity is depth: how deep you’d like the review of what’s being presented to go. For example, we might have introduced a new end-to-end flow, but there was a specific view that you found particularly challenging and you’d like a detailed review of that. This can be especially helpful from one iteration to the next, when it’s important to highlight the areas that have changed.

There are other things that we can consider when we want to achieve more specific—and more effective—questions.

A quick fix is to remove generic qualifiers like “good,” “well,” “nice,” “bad,” “okay,” and “cool” from your questions. For example, asking “When the block opens and the buttons appear, is this interaction good?” might look specific. But you can spot the “good” qualifier and turn it into a better question: “When the block opens and the buttons appear, is it clear what the next action is?”

Sometimes we actually do want broad feedback. That’s uncommon, but it can happen. In that case, you can still make it explicit that you’re looking for a wide range of opinions, whether at a high level or in the details. Or maybe just ask, “At first glance, what do you think?” That makes it clear that the question is open-ended but focused on someone’s impression after their first five seconds of looking at the design.

Sometimes the project is particularly expansive, and some areas may already have been explored in detail. In these situations, it might be useful to say explicitly that some parts are already locked in and aren’t open to feedback. It’s not something I’d recommend in general. Still, I’ve found it helpful for avoiding rabbit holes that might lead to further refinement but aren’t what’s most important right now.

Asking specific questions can completely change the quality of the feedback that you receive. People with less refined critique skills will be able to offer more actionable feedback. Even expert designers will appreciate the clarity and efficiency that come from focusing only on what’s needed. It can save a lot of time and frustration.

The iteration

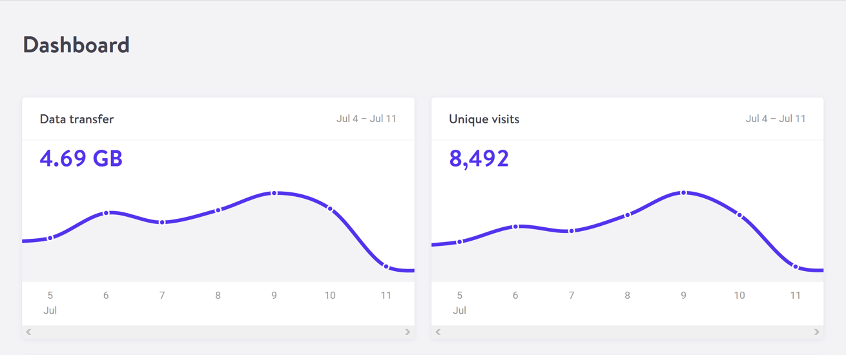

Design iterations are probably the most visible part of the design work, and they provide a natural checkpoint for feedback. Yet many design tools with inline commenting show changes as a single fluid stream in the same file. These tools also make conversations disappear once they’re resolved, update shared UI components automatically, and force designs to always show the latest version, unless these would-be helpful features are manually turned off. The implied goal of these design tools seems to be arriving at a single final copy with all discussions closed. That is probably a pattern they inherited from the way written documents are collaboratively edited. It’s probably not the best way to approach design critiques. I don’t want to be too prescriptive here, though: it could work for some teams.

The asynchronous design-critique approach that I find most effective is to create explicit checkpoints for discussion. For this, I’ll use the term iteration post. It refers to a write-up or presentation of the design iteration followed by a discussion thread of some kind. Any platform that can accommodate this type of structure can use this. By the way, when I refer to a “write-up or presentation,” I’m including video recordings or other media too: as long as it’s asynchronous, it works.

There are many benefits to using iteration posts:

- It creates a rhythm in the design work so that the designer can review feedback from each iteration and prepare for the next.

- It makes decisions visible for future review, and the conversations behind them remain available too.

- It creates a record of how the design changed over time.

- Depending on the tool, it might also make it simpler to collect and act on feedback.

These posts of course don’t mean that no other feedback approach should be used, just that iteration posts could be the primary rhythm for a remote design team to use. And from there, other feedback techniques (such as live critique, pair designing, or inline comments) can emerge.

I don’t think there’s a standard format for iteration posts. However, there are a few high-level elements that make sense to include as a baseline:

- The goal

- The design

- The list of changes

- The questions

Each project is likely to have a goal. Hopefully it’s already been summarized in a single sentence somewhere else, such as the client brief, the product manager’s outline, or the project owner’s request. I’d repeat this goal in every iteration post, literally copying and pasting it. The idea is to provide context and to repeat what’s essential, so that each iteration post is complete and there’s no need to find information spread across multiple posts. If I want to know about the most recent design, the most recent iteration post will have everything I need.

This copy-and-paste part introduces another relevant concept: alignment comes from repetition. Therefore, repeating information in posts helps to ensure that everyone is on the same page.

The design is then the actual series of information-architecture outlines, diagrams, flows, maps, wireframes, screens, visuals, and any other kind of design work that’s been done. In short, it’s any design artifact. For the final stages of work, I prefer the term blueprint. It emphasizes that I’ll be showing full flows instead of individual screens, which makes the bigger picture easier to understand.

It can also help to label the artifacts with clear titles, because that makes them easier to refer to. Write the post in a way that helps people understand the work. It’s not much different from organizing a good live presentation.

For an efficient discussion, you should also include a bullet list of the changes from the previous iteration to let people focus on what’s new, which can be especially useful for larger pieces of work where keeping track, iteration after iteration, could become a challenge.

Finally, as mentioned earlier, a list of the questions must be included in order to help you guide the design critique in the desired direction. Doing this as a numbered list can also help make it easier to refer to each question by its number.

Not every iteration is the same. Earlier iterations don’t need to be as tightly focused. They can be more exploratory and experimental, maybe even breaking some of the design-language guidelines to see what’s possible. Later iterations start settling on a solution and refining it until the design process is complete and the feature ships.

I want to highlight that even if these iteration posts are written and conceived as checkpoints, by no means do they need to be exhaustive. A post might be a draft, just a concept to start a discussion, or it might be a cumulative list of all the features that have been added over the course of each iteration until the full picture is achieved.

Over time, I also started using specific labels for incremental iterations: i1, i2, i3, and so on. Although this may seem like a minor labeling tip, it can be useful in many ways:

- Unique—It’s a clear, unique marker. Within each project, it’s easy to say “This was discussed in i4,” and everyone knows where to go to review things.

- Unassuming—It works like versions (such as v1, v2, and v3), but versions create the impression of something big, exhaustive, and complete. Iterations must be free to be exploratory, incomplete, or partial.

- Future proof—It resolves the “final” naming problem that you can run into with versions. No more files titled “final final complete no-really-its-done.” Within each project, the largest number always represents the latest iteration.

To mark when a design is complete enough to be worked on, the term release candidate (RC) could be used. This applies even if some bits still need attention and, in turn, more iterations. For example: “with i8 we reached RC” or “i12 is an RC.”

The review

What typically occurs during a design critique is an open discussion, with a back and forth between parties that can be very productive. This approach is particularly effective during live, synchronous feedback. However, using a different approach when we work asynchronously is more effective: adopting a user-research mindset. Written feedback from teammates, stakeholders, or others can be treated as if it were the result of user interviews and surveys, and we can analyze it accordingly.

Asynchronous feedback is particularly effective because of this shift, especially around these friction points:

- It removes the pressure to reply to everyone.

- It lessens the annoyance caused by swoop-by comments.

- It lessens our personal stake.

The first friction point is feeling pressure to reply to every single comment. Sometimes we write the iteration post and get a few replies from our team. It’s simple, and there isn’t much to worry about. Other times, though, some solutions require more in-depth discussion, and the number of replies can quickly grow. That creates a tension between being a good team player by replying to everyone and doing the next design iteration. This might be especially true if the person replying is a stakeholder or someone directly involved in the project. We need to accept that this pressure is absolutely normal; it’s human nature to try to accommodate people we care about. Sometimes replying to all comments can be effective. But when we treat a design critique more like user research, we realize that we don’t need to respond to every comment, and in asynchronous spaces there are alternatives:

- One is to let the next iteration speak for itself. When the design changes and we publish a follow-up iteration, that’s the response. You might tag all the people who were involved in the previous discussion, but even that’s a choice, not a requirement.

- Another option is to reply briefly to acknowledge each comment, such as “Understood. Thank you,” “Good points, I’ll review,” or “Thanks. These will be included in the upcoming iteration.” In some cases, this could also be just a single top-level comment along the lines of “Thanks for all the feedback, everyone! The next iteration is coming soon.”

- A third option is to quickly summarize the comments before moving on. Depending on your workflow, this can be particularly useful because it provides a simplified checklist that you can then use for the next iteration.

The second friction point is the swoop-by comment. This is feedback from someone outside the project or team who might not be aware of the context, restrictions, decisions, or requirements, or of the discussions from earlier iterations. There is something one can hope they might learn on their side: they could start to recognize when they’re doing this and be more conscious about explaining where they’re coming from. Meanwhile, it can be frustrating to repeat the same reply to swoop-by comments over and over.

Let’s begin by acknowledging again that there’s no need to reply to every comment. However, if responding to a previously litigated point is useful, a brief response with a link to the previous discussion for additional information is typically sufficient. Remember, alignment comes from repetition, so it’s okay to repeat things sometimes!

Swoop-by comments can still be useful for two reasons. First, they might point out something that still isn’t clear. Second, they can stand in for the point of view of someone seeing the design for the first time. Sure, you’ll still be frustrated, but that might at least help in dealing with it.

The third friction point is the personal stake we might have in the design, which could make us defensive if the review turned into more of a debate. Treating feedback as user research creates a healthy distance between the people giving us feedback and our ego (because yes, even if we don’t want to admit it, it’s there). In the end, treating all the feedback in aggregate helps us prioritize our work better.

Always remember that while you need to listen to stakeholders, project owners, and specific advice, you don’t have to accept every piece of feedback. You must examine it and come to a decision that can be justified, but sometimes “no” is the best choice.

As the designer leading the project, you’re in charge of that decision. In the end, everyone has their specialty, and as the designer, you’re the one with the most knowledge and context to make the right choice. And by listening to the feedback that you’ve received, you’re making sure that it’s also the best and most balanced decision.

Thanks to Mike Shelton and Brie Anne Demkiw for their contributions to the initial draft of this article.