Choosing the Right Marketing Agency Growth Program: A Clear Guide to Types, Fit, and Costs, by John Jantsch



As a marketing agency owner or fractional CMO, you know how valuable it is to stay ahead when it comes to running and growing your business.

You might be considering a marketing certification for consultants or a fractional CMO training program to sharpen your skills, define your services, and build recurring revenue.

With so many "courses," "certifications," and "accelerators" available, however, it's easy to feel overwhelmed. How do you know which program is best for your objectives, and what kind of investment makes sense? In this article, we'll answer those questions with the clarity you deserve.

The key is finding a marketing agency training program that aligns with your business goals. Whether you're looking for a quick certification or a complete system to strengthen your agency, this guide lays out your options in a clear, understandable way.

We'll break down the types of programs available, who they're best for, and their typical cost ranges so you can make an informed choice. Think of this as a straightforward guide to finding the best solution for your needs.

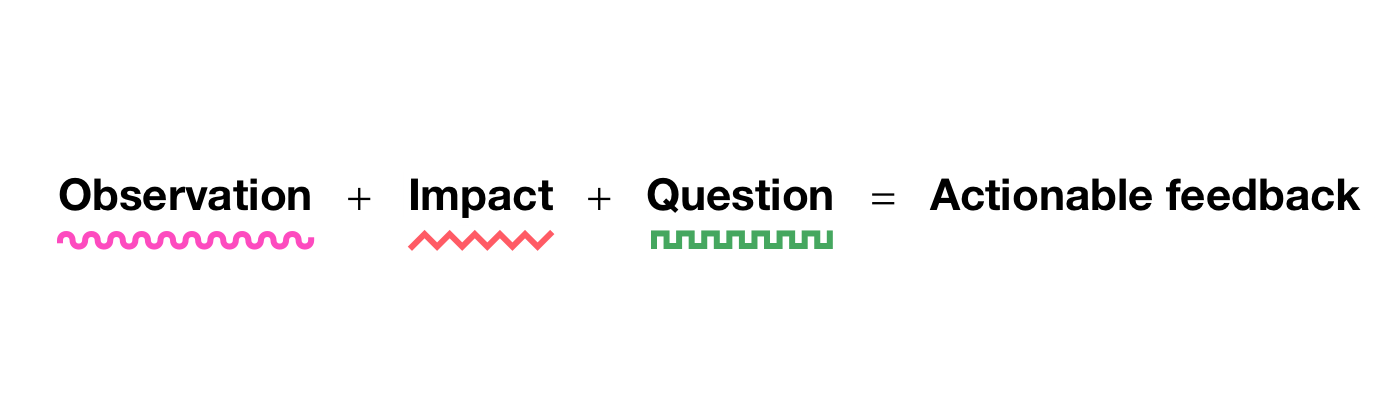

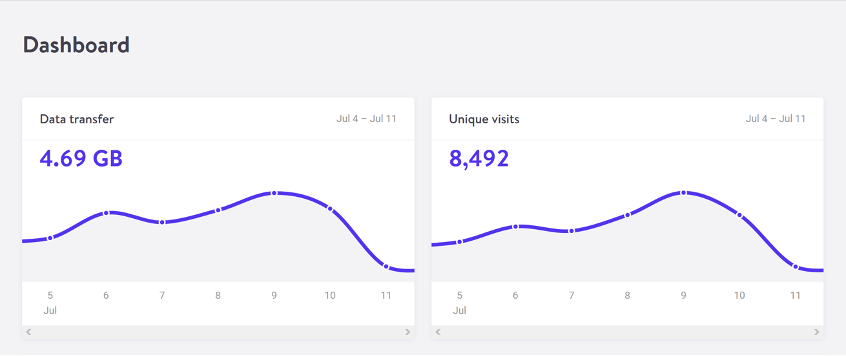

In a rush? Below is a quick comparison of the different types of marketing leadership programs, including format, support level, and cost.

TL;DR: What's the Best Marketing Program for You?

To make it easier to scan, here's a comparison table summarizing these program types, their formats, support levels, and typical cost ranges:

| Program Type | Format and Content | Support Level | Typical Cost |
|---|---|---|---|
| Tactical Skills Courses | Self-paced online tutorials or short workshops focused on a single skill (SEO, ads, etc.) | Low – mostly DIY learning (maybe forums or basic Q&A) | Free–$500 (many tool certifications are free; paid courses typically cost less than $500) |
| Entry-Level Foundation Courses | Multi-week online course or group coaching for new freelancers/consultants (covers business setup, pricing, basic marketing) | Moderate – group calls, peer groups, templates for the fundamentals | ~$500–$3,000 (depending on program length and mentorship provided) |
| Intensive Multi-Day Workshops | 2–5 day intensive (virtual or in-person) on strategy or a specific advanced topic, often interactive | High during the event – live training, interactive exercises; some provide a post-workshop community | $5,000–$10,000 (short local classes on the low end, premium multi-day bootcamps on the high end) |
| License/Certification Programs | Comprehensive training in a proprietary system, including frameworks, tools/templates, and ongoing training (often spanning weeks, or starting with an intensive kickoff) | High – initial deep-dive training plus ongoing support (coaching calls, updates) and a private network of fellow licensees | ~$5,000–$15,000 (one-time or quarterly) for a complete system and certification (significant, but includes a ready-made methodology and assets) |
| Partner/Reseller Programs (Software Ecosystems) | Training focused on a particular software product or platform; you become a licensed partner or reseller of that product | Moderate – vendor support (sales materials, tech support), peer partner forums | Free or low cost to join, but requires investment in the platform (e.g., buying software licenses, which might run $300+/mo, or meeting sales quotas to remain active) |
| Masterminds & Agency Accelerators | Ongoing program (6–12+ months, often renewable yearly) with group coaching, mastermind meetings, and sometimes live retreats | Very high – regular coaching, peer accountability, occasional 1:1 mentoring, and access to a select group of successful peers | $10,000–$50,000+ per year (a significant investment for mature businesses ready to scale) |

(Note: These cost ranges are broad estimates. A big-name mastermind might run even higher, while "courses" can range from a $20 Udemy special to a $1,000 professional course. Always check what's included and the time period covered when comparing prices.)

As you can see, each type of program serves a different purpose. The best choice depends on the problem you're trying to solve or the goal you're aiming for. In the next part, we'll map common goals to the program type that usually fits best. Find the scenario that sounds like you, and consider which option might suit you best.

Why Invest in a Marketing Leadership Program? (And Do You Really Need One?)

Running a marketing agency or consultancy is difficult. You're juggling client deliverables, trying to stand out from the competition, and aiming for predictable, stable income. It's no wonder many agency owners seek out marketing business coaching or certifications to gain an edge. Here are a few common reasons you might be exploring these programs:

- You want a proven system – Perhaps you feel your agency's processes are all over the place. A good training program, especially one rooted in a strategy-first approach, can help you streamline your operations. These marketing training programs for agencies frequently include templates, tools, and systems you can use right away.

- You want to scale and create recurring revenue – Project work and one-off engagements make it tough to forecast income. The right program can show you how to package retainer offers or ongoing strategy services, creating stable monthly income.

- You need to differentiate your agency from the competition – A recognized certification or methodology signals to clients that you follow a reputable, established approach (not just winging it).

- You're looking for credibility and confidence – Perhaps you've mainly executed marketing campaigns and are now stepping into a leadership role (as an agency CEO or fractional CMO). A marketing leadership certification can boost your confidence and authority to engage higher-value clients as a true business advisor.

- You don't want to "go it alone" – Being a solo consultant or small agency owner can be isolating. Many programs provide community, coaching, or mentoring so you can learn from others instead of trying to figure everything out on your own.

Honest insight: You can succeed without any formal certification; plenty of agencies grow through trial and error. However, a well-structured training program for marketing consultants can significantly shorten your learning curve. Rather than spending years cobbling together processes, you could adopt a ready-made system in a matter of weeks. The result? Saved time, fewer mistakes, and potentially faster growth and ROI.

If any of those reasons resonate with you, it's worth examining the different marketing agency certification and training options available. Not all programs are created equal, and the most expensive or prestigious option isn't always the best one for your business. In the next sections, we'll break down the main types of programs and what to expect from each.

Types of Marketing Programs for Consultants & Agencies

Marketing training and growth programs vary widely. Some are as simple as a self-paced online course you can finish in a weekend, while others are premium masterminds that demand serious commitment (and cash). Here are the common program types you'll come across, along with what they generally involve:

Tactical Skills Courses for Marketing Consultants:

Focused courses on particular marketing skills (SEO, Google Ads, email marketing, etc.), usually on-demand video lessons or workshops teaching you how to do one specific thing. There is little to no personalized support beyond perhaps a forum or basic Q&A. Great for quickly acquiring a new skill or certification.

Cost: frequently low (many are free or under $100). Examples include Google's marketing training courses or LinkedIn Learning.

Entry-Level "Business Foundations" Programs:

Introductory programs for freelancers or new solo agency owners covering business basics. They might teach you how to set your pricing, find your first clients, and avoid common beginner mistakes. The format can be a short cohort-based course or a coaching group. Support is moderate (e.g., group calls or online communities).

Cost: ranges widely, from a few hundred to a few thousand dollars depending on depth (some start around $500 and go up to a few thousand dollars for multi-week coaching).

Intensive Multi-Day Workshops:

Short-term, immersive trainings (often lasting 2 to 5 days), typically with a live or in-person component, covering strategy and best practices. These often include interactive sessions and networking with peers. Support is high during the event (hands-on instruction, hot seats, etc.), and sometimes you get access to a community or follow-up resources for accountability.

Cost: typically mid-range; many intensives cost between $5,000 and $10,000 depending on the length and the facilitator's reputation (some local workshops sit at the low end, while well-known strategy bootcamps sit at the high end).

License-Based Certification Programs:

Comprehensive programs that teach you a proven system or methodology for marketing (often a strategy-first approach) and license you to use it with your clients. These typically include extensive training (often an initial intensive or cohort), libraries of tools and templates, and ongoing support like coaching calls or a private network of fellow certified professionals. It's like getting a business-in-a-box for your consultancy: you learn the framework, get materials to implement it, and often earn an official certification.

Cost: a higher investment depending on the depth of the program, typically between $5,000 and $15,000 (some programs charge one-time fees, while others have annual licensing fees or revenue-share models). In return, you gain a repeatable framework for delivering services, plus continued resources and community.

Partner/Reseller Programs (Software Ecosystems):

These programs are tied to a particular software platform, such as a CRM, marketing automation tool, or analytics software. They typically involve training on the platform and how to sell or service it, and you might earn "partner" or reseller status. The format often includes online training modules and a partner community; support comes from the vendor (account managers, sales resources, etc.).

Cost: usually low or no direct fee to join; the trade-off is that you're expected to promote that company's software. Sometimes the cost is meeting a sales quota or purchasing the software itself (which you might resell to clients). In other words, the platform training might be free, but you'll incur expenses in subscription fees or in the time and effort to sell it. If you plan to build your services tightly around a particular tool, this path can create a nice recurring revenue stream (commissions or margin on software subscriptions).

Masterminds & Agency Accelerators:

High-touch growth programs for well-established agencies or consultants. These are typically group coaching programs or mastermind groups aimed at scaling up your business (common goals: hitting $1M+ in revenue, building your team, improving profitability, etc.). The format frequently includes in-person retreats or events, regular coaching calls (with a mentor who has been there and done that), and peer mastermind sessions. Support is very high: you get mentorship, accountability, and a network of other high-performing peers.

Cost: a significant investment, typically five figures per year. Many quality agency mastermind programs charge on the order of $10,000 to $50,000 per year (or more for top-tier circles). These are not for beginners; they make sense when you're ready to pour fuel on the fire and can justify a sizable investment in exchange for potentially significant growth.

Choose the Right Marketing Program Based on Your Business Goals

It's time to get personal. Think about what you really want to achieve at this stage in your business or career. Do you want to improve a particular skill set? Launch your own consultancy? Shift your agency to a new business model? Different goals call for different solutions. Let's explore a few typical scenarios and the program type that tends to fit each one best, along with what to expect and the key pros and cons.

Goal: "I want to get better at a specific skill."

Scenario: You run or consult for an agency and want to improve one skill, such as SEO, Google Ads, or copywriting.

Program: Tactical Skills Courses or Certifications

What to Expect: Short, on-demand video tutorials or step-by-step workshops. Limited support (maybe forums or weekly Q&A).

Investment: Usually free to a few hundred dollars. The time commitment is short (from a few hours to a few weeks).

Pros: Affordable, focused, and flexible. Great for quickly filling knowledge gaps.

Cons: Narrow scope. Little business guidance or long-term support.

Takeaway: Skip the big programs for a single skill upgrade. A targeted course or certification gets the job done quickly and cheaply.

Goal: "I want to go from a corporate role to running my own consultancy."

Scenario: You want to launch your own consultancy or independent agency after leaving a corporate marketing position. You have the marketing skills, but not the business-building know-how.

Program: Entry-Level Foundation Courses

What to Expect: These 4- to 12-week programs cover the fundamentals of packaging services, pricing, and finding clients. They include video lessons, group coaching, templates, and community support.

Investment: $500 to $3,000, depending on format and coaching.

Pros: Saves time and helps avoid early mistakes. Built-in support and templates fast-track setup.

Cons: Focuses on the fundamentals, not deep dives. Quality varies.

Takeaway: If you’re going out on your own, get started with a course that covers business fundamentals rather than just marketing.

Goal: "I want to switch from project-based work to strategy-led services."

Scenario: You’re doing one-off projects and want to offer long-term strategic services instead.

Program: License-Based Certification Programs

What to Expect: Intensive instruction in a strategy framework, plus tools and templates for client delivery. Ongoing support through coaching calls and a private network.

Investment: $5,000–$15,000, plus potential licensing or renewal costs.

Pros: Provides a proven system and confidence to sell strategy retainers. Includes ongoing support and tools.

Cons: High upfront costs. Requires full commitment to the framework.

Takeaway: To transition to strategy-first retainers, invest in a framework that provides you with the tools and support needed to reposition your services.


Goal: "I want to scale my agency past $1M in revenue."

Scenario: You’ve built a stable agency and want help breaking through growth ceilings like hiring, positioning, or systems.

Program: Agency Accelerators or Masterminds

What to Expect: Coaching, mastermind calls, strategic playbooks, and live events. The emphasis is on scaling operations, team, and leadership, not tactics.

Investment: $10,000–$50,000 per year. May require travel and a 12-month commitment.

Pros: Offers peer accountability and mentorship. Helps fast-track decisions and avoid trial-and-error.

Cons: Requires time, money, and focus to implement. Fit depends on the quality of the group and how closely its members match your business stage.

Takeaway: For established agencies ready to scale, a mastermind or accelerator provides the strategic support and peer insight needed to grow faster and smarter.


Goal: "I want to work as a fractional CMO with a few high-value clients."

Scenario: You want to serve a small number of clients in a part-time, high-level strategic role – without managing a big team.

Program: Fractional CMO Coaching or Strategy Certification

What to Expect: Guidance on packaging your offer, negotiating retainer terms, and leading strategy. Delivered via coaching, peer groups, or system-based certifications.

Investment: $5,000 to $15,000 for certifications, or $800 to $1,000 per month for coaching programs.

Pros: Helps position and sell premium strategic services. Often includes support, templates, and community.

Cons: Not easily scaled past your own time. May require personal brand building and network leverage.

Takeaway: If your goal is fewer, better-paying clients and deeper strategic work, a focused program can help you develop and sell a compelling fractional CMO offer.


Making Your Decision: Which Marketing Training Program is right for you?

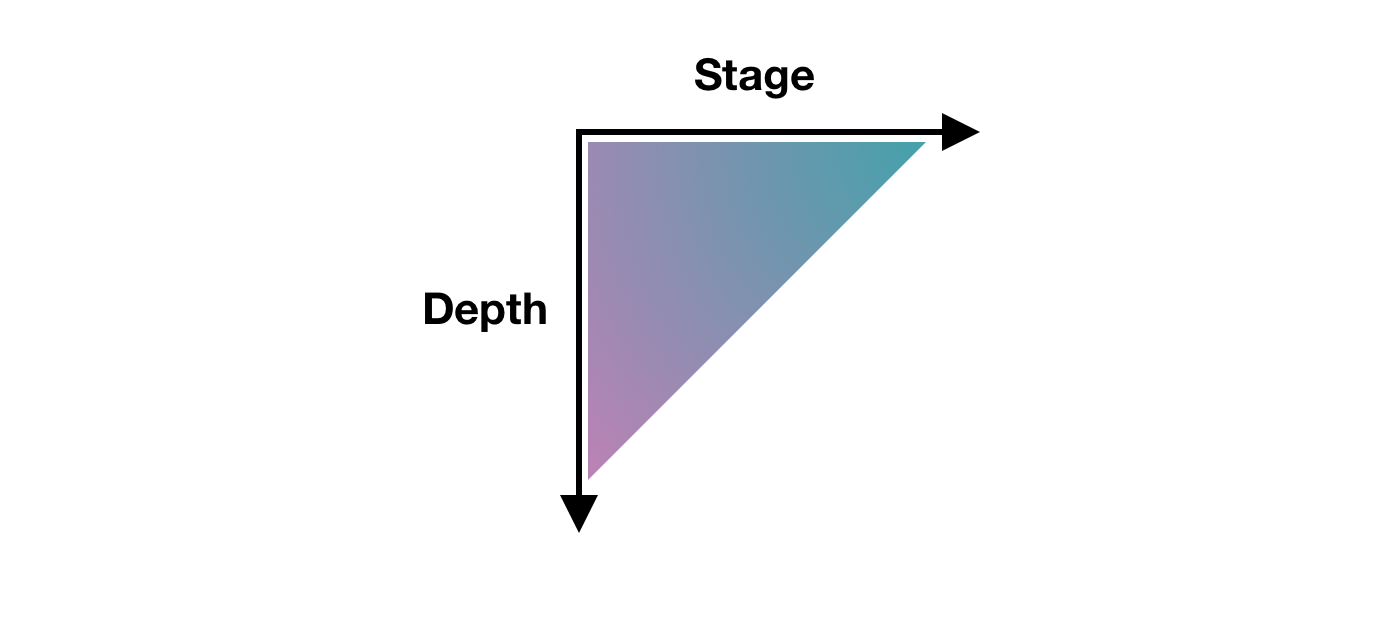

Finding the ideal program starts with matching what a program is designed to do with your desired outcome and business stage.

- If you have a single skills gap, take a course or get a specific certification ( no need to over-invest ).

- If you’re just starting out on your own, build your business fundamentals with an entry-level program or community – getting those basics right will pay off for years.

- If you need to build strategy into your offering and generate recurring revenue, a certification or licensing program with a proven system can change the way you approach your services.

- If you’re already established and aiming higher, a mastermind/accelerator can provide the mentorship and peer group to break through growth plateaus.

- If you're pursuing a fractional CMO model, make sure you have a strategic framework and support system in place to help you succeed in that niche, whether through a certification or a peer coaching group.

Finally, remember that no program is a magic bullet. Your results will depend on your effort. Even the best program in the world won't help if you don't apply what you learn, while even a modest program can be valuable if you put in the effort and act on new ideas. Before plunking down money, get clear on exactly what you want to get out of it and how you'll hold yourself accountable for using what you learn.

Choose the Right Marketing Certification for Long-Term Growth

The landscape of marketing certifications and training programs for consultants, agency owners, and fractional CMOs is more diverse and valuable than ever. Whether you're looking to master a tactical skill, adopt a proven client delivery system, or join a high-level agency mastermind, there's a training option designed to meet your goals.

As you evaluate your next step, start with clarity: What problem are you trying to solve? The best ROI doesn't come from the most expensive program; it comes from choosing the one that best fits your business stage, growth goals, and service model. From lightweight online marketing courses to full-scale strategy certifications, every program type serves a purpose.

Be as strategic with your education investments as you are with your business. When in doubt, lean toward programs that emphasize strategy, systems, and a support network, since those elements tend to deliver lasting value. (Admittedly, that's a bit of a hint from our perspective: given our own experience in this space, we strongly believe in strategy-first growth and comprehensive support.)

We hope this breakdown has made the options and trade-offs clearer. By understanding what's out there and assessing where you want to go, you're well on your way to making a smart decision. Whether that means mastering a new skill, signing better clients, or reaching your next revenue milestone, the right program can help you get there. Good luck, and happy learning!