As a UX professional in today’s data-driven landscape, it’s increasingly likely that you’ve been asked to design a personalized digital experience, whether it’s a public website, user portal, or native application. Yet while there continues to be no shortage of marketing hype around personalization platforms, there are still very few standardized approaches for implementing personalized UX.

That’s where we come in. After completing dozens of personalization projects over the past few years, we set ourselves a goal: could we create a holistic personalization framework specifically for UX practitioners? The Personalization Pyramid is a designer-centric model for standing up human-centered personalization programs, spanning data, segmentation, content delivery, and overall goals. By using this approach, you will be able to understand the core components of a modern, UX-driven personalization program (or at the very least know enough to get started).

Getting Started

For the sake of this article, we’ll assume you’re already familiar with the basics of digital personalization. A good overview can be found here: Website Personalization Planning. While UX projects in this area can take on many different forms, they usually stem from similar starting points.

Common scenarios for starting a personalization project:

- Your organization or client purchased a content management system (CMS) or marketing automation platform (MAP) or related technology that supports personalization

- The CMO, CDO, or CIO has identified personalization as a goal

- User data is disjointed or confusing

- You are running some isolated targeting campaigns or A/B tests

- Stakeholders disagree on personalization approach

- A mandate to follow customer privacy rules (e.g. GDPR) requires revisiting existing user targeting practices

Regardless of where you begin, a successful personalization program will require the same core building blocks. We’ve captured these as the “levels” on the pyramid. Whether you are a UX designer, researcher, or strategist, understanding the core components can help make your contribution effective.

From top to bottom, the levels include:

- North Star: What larger strategic objective is driving the personalization program?

- Goals: What are the specific, measurable outcomes of the program?

- Touchpoints: Where will the personalized experience be served?

- Contexts and Campaigns: What personalization content will the user see?

- User Segments: What constitutes a unique, usable audience?

- Actionable Data: What reliable and authoritative data is captured by our technical platform to drive personalization?

- Raw Data: What wider set of data is potentially available (already in our environment) allowing you to personalize?

We’ll go through each of these levels in turn. To help make this practical, we created an accompanying deck of cards to illustrate specific examples from each level. We’ve found them helpful in personalization brainstorming sessions, and will include examples for you here.

Starting at the Top

The components of the pyramid are as follows:

North Star

A North Star is what you are aiming for overall with your personalization program (big or small). The North Star defines the (one) overall mission of the personalization program. What do you wish to achieve? North Stars cast a shadow: the bigger the star, the bigger the shadow. Examples of North Stars might include:

- Function: Personalize based on basic user inputs. Examples: “Raw” notifications, basic search results, system user settings and configuration options, general customization, basic optimizations

- Feature: Self-contained personalization componentry. Examples: “Cooked” notifications, advanced optimizations (geolocation), basic dynamic messaging, customized modules, automations, recommenders

- Experience: Personalized user experiences across multiple interactions and user flows. Examples: Email campaigns, landing pages, advanced messaging (i.e. C2C chat) or conversational interfaces, larger user flows and content-intensive optimizations (localization).

- Solution: Highly differentiating personalized product experiences. Examples: Standalone, branded experiences with personalization at their core, like the “algotorial” playlists by Spotify such as Discover Weekly.

Goals

As in any good UX design, personalization can help accelerate designing with customer intentions. Goals are the tactical and measurable metrics that will prove the overall program is successful. A good place to start is with your current analytics and measurement program and metrics you can benchmark against. In some cases, new goals may be appropriate. The key thing to remember is that personalization itself is not a goal, rather it is a means to an end. Common goals include:

- Conversion

- Time on task

- Net promoter score (NPS)

- Customer satisfaction

Touchpoints

Touchpoints are where the personalization happens. As a UX designer, this will be one of your largest areas of responsibility. The touchpoints available to you will depend on how your personalization and associated technology capabilities are instrumented, and should be rooted in improving a user’s experience at a particular point in the journey. Touchpoints can be multi-device (mobile, in-store, website) but also more granular (web banner, web pop-up, etc.). Here are some examples:

Channel-level Touchpoints

- Email: Role

- Email: Time of open

- In-store display (JSON endpoint)

- Native app

- Search

Wireframe-level Touchpoints

- Web overlay

- Web alert bar

- Web banner

- Web content block

- Web menu

If you’re designing for web interfaces, for example, you will likely need to include personalized “zones” in your wireframes. The content for these can be presented programmatically in touchpoints based on our next step, contexts and campaigns.

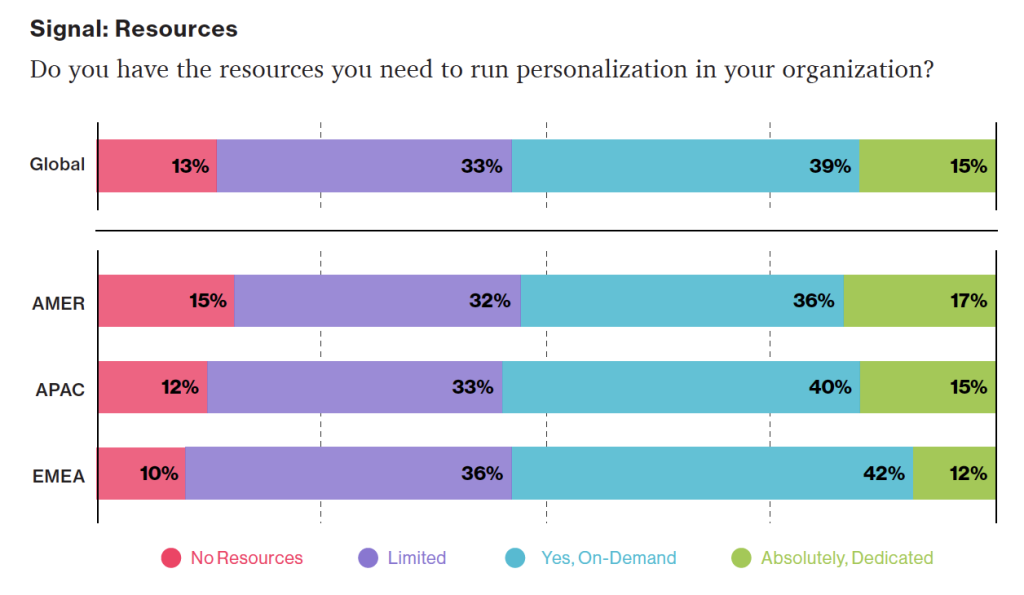

Source: “Essential Guide to End-to-End Personalization” by Kibo.

Contexts and Campaigns

Once you’ve outlined some touchpoints, you can consider the actual personalized content a user will receive. Many personalization tools will refer to these as “campaigns” (so, for example, a campaign on a web banner for new visitors to the website). These will programmatically be shown at certain touchpoints to certain user segments, as defined by user data. At this stage, we find it helpful to consider two separate models: a context model and a content model. The context helps you consider the level of engagement of the user at the personalization moment, for example a user casually browsing information vs. doing a deep-dive. Think of it in terms of information retrieval behaviors. The content model can then help you determine what type of personalization to serve based on the context (for example, an “Enrich” campaign that shows related articles may be a suitable supplement to extant content).

Personalization Context Model:

- Browse

- Skim

- Nudge

- Feast

Personalization Content Model:

- Alert

- Make Easier

- Cross-Sell

- Enrich

We’ve written extensively about each of these models elsewhere, so if you’d like to read more you can check out Colin’s Personalization Content Model and Jeff’s Personalization Context Model.
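To make the idea of “programmatically shown at certain touchpoints” a little more concrete, here is a minimal Python sketch that encodes both models and picks a campaign type for a touchpoint from the user’s context. The pairing of each context with a content type is a hypothetical example chosen for illustration, not a mapping either model prescribes (only the deep-dive to “Enrich” pairing echoes the example above), and the names `CONTEXT_TO_CONTENT` and `select_campaign` are assumptions, not any personalization tool’s API.

```python
from enum import Enum


class Context(Enum):
    """Hypothetical encoding of the personalization context model."""
    BROWSE = "browse"
    SKIM = "skim"
    NUDGE = "nudge"
    FEAST = "feast"


class Content(Enum):
    """Hypothetical encoding of the personalization content model."""
    ALERT = "alert"
    MAKE_EASIER = "make_easier"
    CROSS_SELL = "cross_sell"
    ENRICH = "enrich"


# Illustrative pairing only: which content type to serve in each context.
# A real program would derive this from research and testing.
CONTEXT_TO_CONTENT = {
    Context.BROWSE: Content.CROSS_SELL,
    Context.SKIM: Content.MAKE_EASIER,
    Context.NUDGE: Content.ALERT,
    Context.FEAST: Content.ENRICH,  # a deep-dive user may welcome related articles
}


def select_campaign(touchpoint: str, context: Context) -> dict:
    """Describe the campaign to serve at a touchpoint for a user in a given context."""
    content = CONTEXT_TO_CONTENT[context]
    return {"touchpoint": touchpoint, "context": context.value, "content": content.value}


print(select_campaign("web content block", Context.FEAST))
# {'touchpoint': 'web content block', 'context': 'feast', 'content': 'enrich'}
```

Keeping the mapping in a plain lookup table, rather than buried in conditional logic, mirrors how the card deck separates the two models: designers can revise the pairings without touching the selection code.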

User Segments

User segments can be created prescriptively or adaptively, based on user research (e.g. via rules and logic tied to set user behaviors or via A/B testing). At a minimum you will likely need to consider how to treat the unknown or first-time visitor, the guest or returning visitor for whom you may have a stateful cookie (or equivalent post-cookie identifier), or the authenticated visitor who is logged in. Here are some examples from the personalization pyramid:

- Unknown

- Guest

- Authenticated

- Default

- Referred

- Role

- Cohort

- Unique ID
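The minimum split described above (unknown, guest, authenticated) is essentially a prescriptive rule, and a short Python sketch shows how it might be evaluated per visit, with one extra rule layering a “Referred” segment on top. The `Visitor` fields and the function names are hypothetical; a real platform would supply its own identifiers and segment definitions.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Visitor:
    """Hypothetical signals available at the moment of personalization."""
    user_id: Optional[str] = None    # set only when the visitor is logged in
    cookie_id: Optional[str] = None  # stateful cookie or post-cookie identifier
    referrer: Optional[str] = None   # e.g. a campaign or partner source


def base_segment(visitor: Visitor) -> str:
    """Assign one of the three minimum segments, most specific first."""
    if visitor.user_id is not None:
        return "Authenticated"
    if visitor.cookie_id is not None:
        return "Guest"
    return "Unknown"


def segments(visitor: Visitor) -> List[str]:
    """Combine the base segment with an additional rule-based segment."""
    result = [base_segment(visitor)]
    if visitor.referrer:
        result.append("Referred")
    return result


print(segments(Visitor(cookie_id="c-123", referrer="newsletter")))
# ['Guest', 'Referred']
```

Checking the most specific identifier first means a logged-in visitor who also carries a cookie is treated as authenticated, which is usually the behavior you want.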

Actionable Data

Every organization with any digital presence has data. It’s a matter of asking what data you can ethically collect on users, how reliable and valuable it is, and how you can use it (sometimes known as “data activation”). Fortunately, the tide is turning to first-party data: a recent study by Twilio estimates some 80% of businesses are using at least some type of first-party data to personalize the customer experience.

First-party data offers multiple advantages on the UX front, including being relatively simple to collect, more likely to be accurate, and less susceptible to the “creep factor” of third-party data. So a key part of your UX strategy should be to determine the best form of data collection for your audiences.

There is a progression of profiling when it comes to recognizing and making decisions about different audiences and their signals. It tends to move toward more granular constructs about smaller and smaller cohorts of users as time, confidence, and data volume grow.

While some combination of implicit and explicit data is generally a prerequisite for any implementation (more commonly referred to as first-party and third-party data), ML efforts are typically not cost-effective directly out of the box. This is because a strong data backbone and content repository is a prerequisite for optimization. But these approaches should be considered as part of the larger roadmap and may indeed help accelerate the organization’s overall progress. Typically at this point you will partner with key stakeholders and product owners to design a profiling model. The profiling model includes defining an approach to configuring profiles, profile keys, profile cards, and pattern cards. This multi-faceted approach to profiling is what makes it scalable.

Pulling it Together

While the cards form the starting point of an inventory of sorts (we provide blanks for you to tailor your own), a set of potential levers and motivations for the style of personalization activities you aspire to deliver, they are more valuable when thought of as a group.

In assembling a card “hand”, one can begin to trace the entire trajectory from leadership focus down through strategic and tactical execution. It is also at the heart of the way both co-authors have conducted workshops in assembling a program backlog, which is a fine subject for another article.

In the meantime, the important thing to note is that while surveying each colored class of card helps you understand the range of choices potentially at your disposal, the real work is threading through them and making concrete decisions about for whom this decisioning will be made: where, when, and how.
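One way to picture a finished hand is as a single record with one card drawn from each level, which can then be read back as the “for whom, where, when, and how” decision. The Python sketch below assumes a hypothetical `CardHand` type and `summary` method; the example values come from the cards described earlier, but this structure is an illustration, not part of the authors’ workshop materials. Raw Data is left out on the assumption that a hand commits to data the platform can actually act on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CardHand:
    """A hypothetical 'hand': one card from each level of the pyramid, top to bottom."""
    north_star: str
    goal: str
    touchpoint: str
    context: str
    campaign: str
    segment: str
    actionable_data: str

    def summary(self) -> str:
        """Read the hand back as a decision: for whom, where, when, and how."""
        return (
            f"For {self.segment} users ({self.actionable_data}), "
            f"serve a {self.campaign} campaign on the {self.touchpoint} "
            f"when they {self.context}, to improve {self.goal} "
            f"in service of the {self.north_star} north star."
        )


hand = CardHand(
    north_star="Feature",
    goal="conversion",
    touchpoint="web banner",
    context="browse",
    campaign="Cross-Sell",
    segment="Guest",
    actionable_data="stateful cookie",
)
print(hand.summary())
```

A backlog could then be little more than a list of such hands, each one traceable from the North Star down to the data that powers it.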

Lay Down Your Cards

Any sustainable personalization strategy must consider near-, mid-, and long-term goals. Even with the leading CMS platforms like Sitecore and Adobe or the most exciting composable CMS DXP out there, there is simply no “easy button” wherein a personalization program can be stood up and immediately deliver meaningful results. That said, there is a common grammar to all personalization activities, just like every sentence has nouns and verbs. These cards attempt to map that territory.