Imagine this: you’re in a conference room at your software company, and two people are having what appears to be the same conversation about the same design problem. One is talking about whether the team has the right skills to handle it. The other is examining whether the solution really addresses the user’s problem. Same room, same problem, and entirely different perspectives.

This is the lovely, sometimes messy reality of having both a Design Manager and a Lead Designer on the same team. And if you’re wondering how to make this work without creating confusion, overlap, or the dreaded “too many cooks” situation, you’re asking the right question.

Clean lines on an organizational chart have always been the standard solution. The Design Manager handles people, the Lead Designer handles craft. Problem solved, right? Except clean org charts are fiction. In reality, both roles care deeply about team health, design quality, and shipping great work.

The magic happens when you embrace the overlap rather than fight it, when you begin to think of your design organization as a design organism.

The Biology of a Healthy Design Team

Here’s what I’ve learned from years of being on both sides of this equation: think of your design team as a living organism. The Design Manager tends to the mind (the psychological safety, the career growth, the team dynamics). The Lead Designer tends to the body (the craft skills, the design standards, the hands-on work that ships to users).

But just as mind and body aren’t totally separate systems, these roles overlap in significant ways. You can’t have a healthy person without both working in harmony. The trick is to recognize where those overlaps are and how to navigate them gracefully.

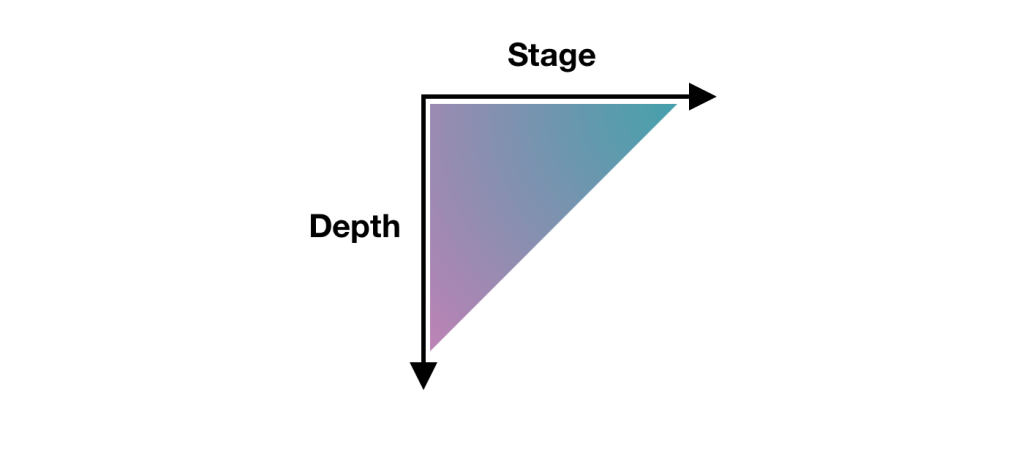

When we look at how healthy teams really function, three critical systems emerge. Each requires both roles to work together, but one must take the lead in keeping that system strong.

The Nervous System: People & Psychology

Primary caretaker: Design Manager

Supporting role: Lead Designer

The nervous system is all about signals, feedback, and psychological safety. When this system is healthy, information flows freely, people feel safe to take risks, and the team can react quickly to new challenges.

The Design Manager is the primary caretaker here. They’re monitoring the team’s psychological pulse, making sure feedback loops are healthy, and creating the conditions for growth. They’re hosting career conversations, managing workload, and making sure no one burns out.

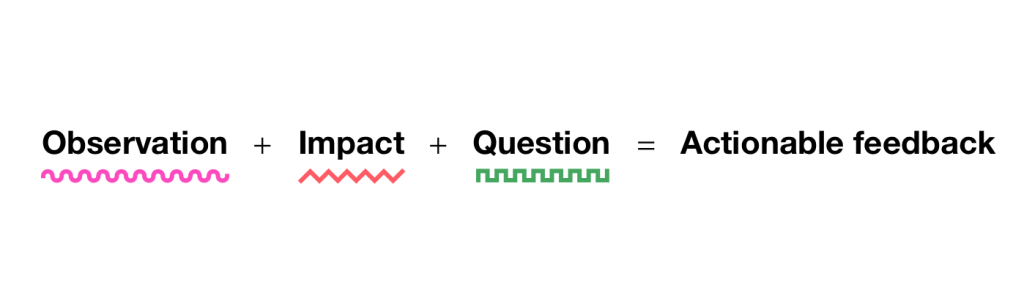

However, the Lead Designer plays a vital supporting role. They’re providing sensory input on craft development needs, spotting when someone’s design skills are stagnating, and pointing out growth opportunities that the Design Manager might overlook.

Design Manager tends to:

- Career conversations and growth planning

- Team psychological safety and dynamics

- Workload management and resource allocation

- Performance reviews and feedback systems

- Creating learning opportunities

Lead Designer supports by:

- Providing craft-specific feedback on team member development

- Identifying design skill gaps and growth opportunities

- Offering design mentorship and guidance

- Signaling when team members are ready for more challenging problems

The Muscular System: Design & Execution

Primary caretaker: Lead Designer

Supporting role: Design Manager

Strength, coordination, and skill development are the hallmarks of the muscular system. When this system is healthy, the team can execute complex design work with precision, maintain consistent quality, and adapt their craft to new challenges.

The Lead Designer is the primary caretaker here. They’re setting the bar for quality, providing craft coaching, and ensuring that the work that ships meets that bar. They’re the ones who can tell you if a design decision is sound or if we’re solving the right problem.

However, the Design Manager plays a significant supporting role. They’re making sure the team has the resources and support they need to do their best craft work, much like proper nutrition and recovery time for an athlete.

Lead Designer tends to:

- Definition of design standards and system usage

- Feedback on which design work meets the required standards

- Experience direction for the product

- Design decisions and product-wide alignment

- Innovation and advancement of craft

Design Manager supports by:

- Ensuring that design standards are understood and adopted across the team

- Confirming that the experience direction is being followed

- Supporting practices and systems that scale without bottlenecking

- Facilitating team-wide design alignment

- Providing resources and removing obstacles to outstanding craft work

The Circulatory System: Strategy & Flow

Shared caretakers: Both Design Manager and Lead Designer

The circulatory system is about how decisions, energy, and information flow through the team. When this system is healthy, strategic direction is clear, priorities are aligned, and the team can respond quickly to new opportunities or challenges.

This is where true partnership happens. Both roles are responsible for keeping the circulation strong, but each brings a unique perspective.

Lead Designer contributes:

- Ensuring the product meets user needs

- Overall experience and product quality

- Strategic design initiatives

- Research-based user needs for each initiative

Design Manager contributes:

- Communication to team and stakeholders

- Stakeholder management and alignment

- Team accountability across all levels

- Strategic business initiatives

Both parties work together on:

- Co-creation of strategy with leadership

- Team goals and prioritization approach

- Organizational structure decisions

- Success frameworks and measures

Keeping the Organism Healthy

Understanding that all three systems must work together is the key to making this partnership sing. A team with excellent craft skills but poor psychological safety will eventually burn out. A team with great culture but weak craft execution will ship mediocre work. A team that has both but poor strategic circulation will concentrate on the wrong things.

Be Explicit About Which System You’re Tending

When you’re in a meeting about a design problem, it helps to acknowledge which system you’re primarily focused on. Saying “I’m thinking about this from a team capacity perspective” (nervous system) or “I’m looking at this through the lens of user needs” (muscular system) gives everyone context for your input.

This is not about staying in your lane. It’s about being transparent as to which lens you’re using, so the other person knows how to best add their perspective.

Create Healthy Feedback Loops

The most effective partnerships I’ve seen establish clear feedback loops between the systems:

Nervous system signals to muscular system: “The team is struggling with confidence in their design skills” → Lead Designer provides more craft coaching and clearer standards.

Muscular system signals to nervous system: “The team’s craft skills are improving more quickly than their project complexity” → Design Manager finds more challenging growth opportunities.

Both systems signal to the circulatory system: “We’re seeing patterns in team health and craft development that suggest we need to adjust our strategic priorities.”

Handle Handoffs Gracefully

This partnership’s most crucial moments occur when something moves from one system to another. This might be when a design standard (muscular system) needs to be rolled out across the team (nervous system), or when a strategic initiative (circulatory system) requires specific craft execution (muscular system).

Make these transitions explicit. “I’ve defined the new component standards. Can you help me think through how to get the team up to speed?” or “We’ve agreed on this strategic direction. From here, I’ll concentrate on the specific user experience approach.”

Stay Curious, Not Territorial

The Design Manager who never thinks about craft, or the Lead Designer who never considers team dynamics, is like a doctor who only looks at one body system. Great design leadership requires both parties to be concerned with the entire organism, even when they are not the primary caretaker.

This means asking questions rather than making assumptions. “What do you think about the team’s craft development in this area?” or “How do you think this is affecting team morale and workload?” keeps both viewpoints present in every decision.

When the Organism Gets Sick

Even with clear roles, this partnership can go wrong. Here are the most typical failure modes I’ve seen:

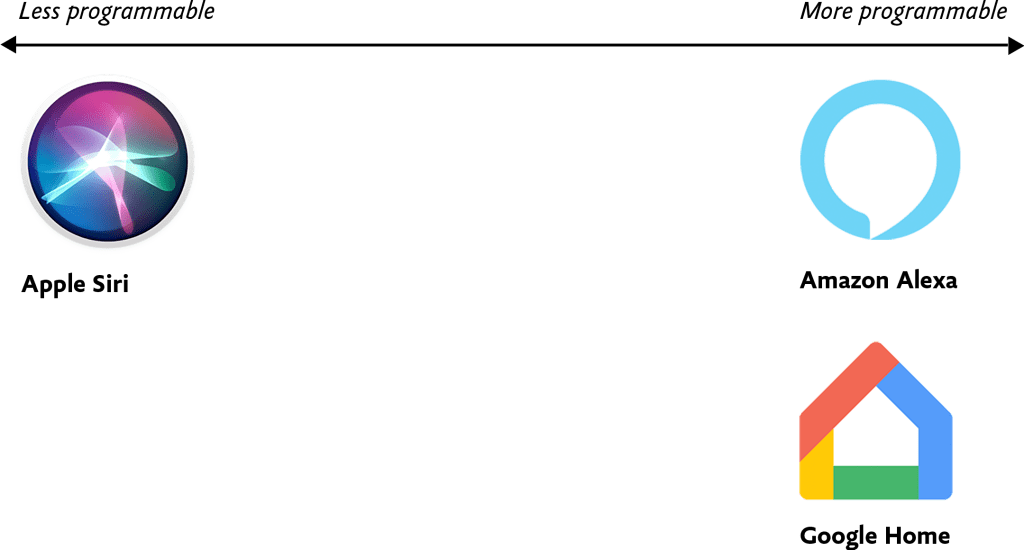

System Isolation

The Design Manager concentrates only on the nervous system and ignores craft development. The Lead Designer concentrates solely on the muscular system and ignores team dynamics. Both people retreat to their comfort zones and stop collaborating.

The signs: Team members receive conflicting messages, work quality suffers, and morale declines.

The treatment: Reconnect around shared goals. What are you both trying to achieve? Usually it’s great design work that ships on time from a healthy team. Figure out how both systems serve that goal.

Poor Circulation

Strategic direction is unclear, priorities keep shifting, and neither role is taking responsibility for keeping information flowing.

The signs: Team members are unsure of their priorities, work is duplicated or dropped, and deadlines are missed.

The treatment: Explicitly assign responsibility for circulation. Who is communicating with whom? How frequently? What’s the feedback loop?

Autoimmune Response

One person feels threatened by the other’s expertise. The Design Manager thinks the Lead Designer is undermining their authority. The Lead Designer thinks the Design Manager doesn’t understand craft.

The signs: Defensive behavior, territorial disputes, and team members caught in the middle.

The treatment: Remember that you’re both caretakers of the same organism. The entire team suffers when one system fails. The team thrives when both systems are strong.

The Payoff

Yes, this model requires more communication. Yes, it requires both people to be secure enough to share responsibility for team health. But the payoff is worth it: better decisions, stronger teams, and design work that’s both excellent and sustainable.

When both roles are healthy and working well together, you get the best of both worlds: strong people leadership and deep craft knowledge. When one person is ill, taking a vacation, or overburdened, the other can help maintain the team’s health. When a decision requires both the people perspective and the craft perspective, you’ve got both right there in the room.

The key is that the framework scales. As your team grows, you can apply the same systems thinking to new challenges. Need to launch a design system? The Lead Designer tends to the muscular system (standards and implementation), the Design Manager tends to the nervous system (team adoption and change management), and both tend to circulation (communication and stakeholder alignment).

The Bottom Line

The relationship between a Design Manager and Lead Designer isn’t about dividing territories. It’s about multiplying impact. The magic happens when both roles understand that they are tending to different parts of the same healthy organism.

The mind and body work together. The team gets both the craft excellence and the strategic thinking it needs. And most importantly, the work that ships to users benefits from both perspectives.

So the next time you’re in that meeting room, wondering why two people are talking about the same problem from different angles, remember: you’re watching shared leadership in action. And if it’s functioning well, your design team’s mind and body are both strengthening.