I’ve been fascinated by movies since I was a child. I loved the characters and the excitement, but most of all the stories. I wanted to be an actor. And I believed that I’d get to do the things that Indiana Jones did and go on exciting adventures. I even dreamed up ideas for movies that my friends and I could make and star in. But they never went any further. I did, however, end up working in user experience (UX). Now, I realize that there’s an element of theater to UX. I hadn’t really considered it before, but user research is storytelling. And to get the most out of user research, you need to tell a compelling story that brings stakeholders (the product team and decision-makers) along and gets them interested in learning more.

Think of your favorite movie. More than likely, it follows a three-act structure that’s commonly seen in storytelling: the setup, the conflict, and the resolution. The first act shows what exists today, and it helps you get to know the characters and the challenges and problems that they face. Act two introduces the conflict, where the action is. Here, problems grow or get worse. And the third and final act is the resolution. This is where the issues are resolved and the characters learn and change. I believe that this structure is also a great way to think about user research, and it can be especially helpful for explaining user research to others.

Use storytelling as a structure to do research

It’s sad to say, but many have come to see research as being expendable. When budgets or timelines are tight, research tends to be one of the first things to go. Instead of investing in research, some product managers rely on designers or, worse, their own opinion to make the “right” choices for users based on their experience or accepted best practices. That may get teams some of the way, but this approach can easily miss users’ real problems. To remain user-centered, this is something we should avoid. User research elevates design. It keeps it on track, pointing to problems and opportunities. Being aware of the issues with your product and reacting to them can help you stay ahead of your competitors.

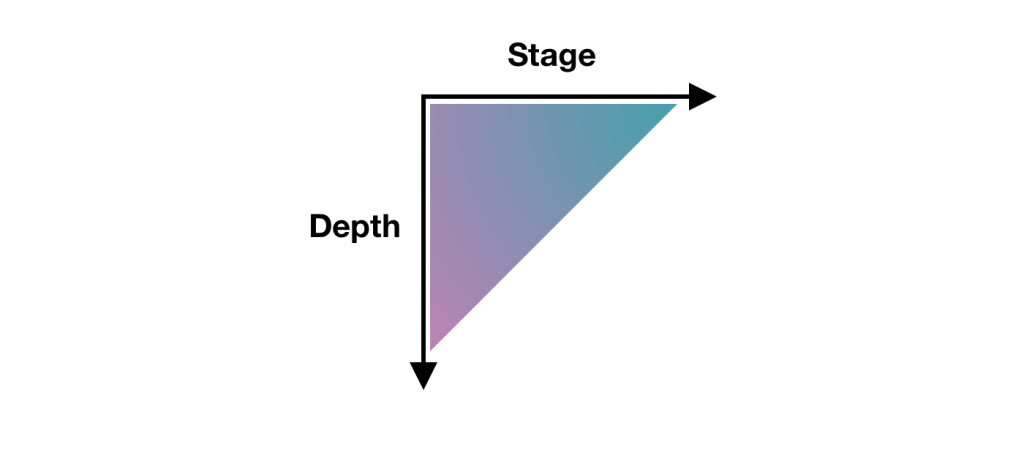

In the three-act structure, each act corresponds to a part of the process, and each part is critical to telling the whole story. Let’s look at the different acts and how they align with user research.

Act one: setup

The setup is all about understanding the background, and that’s where foundational research comes in. Foundational research (also called generative, discovery, or initial research) helps you understand users and identify their problems. You’re learning about what exists today, the challenges users have, and how those challenges affect them, just like in the movies. To do foundational research, you can conduct contextual inquiries or diary studies (or both!), which can help you start to identify problems as well as opportunities. It doesn’t need to be a huge investment in time or money.

Erika Hall writes about minimum viable ethnography, which can be as simple as spending 15 minutes with a user and asking them one thing: “‘Walk me through your day yesterday.’ That’s it. Present that one request. Shut up and listen to them for 15 minutes. Do your damndest to keep yourself and your interests out of it. Bam, you’re doing ethnography.” According to Hall, “[This] will probably prove quite illuminating. In the highly unlikely case that you didn’t learn anything new or useful, carry on with enhanced confidence in your direction.”

This makes total sense to me. And I love that this makes user research so accessible. You don’t need to prepare a lot of documentation; you can just recruit participants and do it! This can yield a wealth of information about your users, and it’ll help you better understand them and what’s going on in their lives. That’s really what act one is all about: understanding where users are coming from.

Jared Spool talks about the importance of foundational research and how it should form the bulk of your research. Any additional user data that you can get your hands on, such as surveys or analytics, can supplement what you’ve heard in the foundational studies or even point to areas that need further investigation. Together, all this data paints a clearer picture of the state of things and all its shortcomings. And that’s the beginning of a compelling story. It’s the point in the plot where you realize that the main characters (the users, in this case) are facing challenges that they need to overcome. Like in the movies, this is where you start to build empathy for the characters and root for them to succeed. And hopefully stakeholders are now doing the same. Their sympathy may be with their business, which could be losing money because users can’t complete certain tasks. Or maybe they do empathize with users’ struggles. Either way, act one is your initial hook to get stakeholders interested and invested.

Once stakeholders begin to understand the value of foundational research, that can open doors to more opportunities that involve users in the decision-making process. And that can guide product teams toward being more user-centered. This benefits everyone: users, the product, and stakeholders. In movie terms, it’s like winning an Oscar, because it often leads to your product being well received and successful. And this can be an incentive for stakeholders to repeat this process with other products. Storytelling is the key to this process, and knowing how to tell a good story is the only way to get stakeholders to really care about doing more research.

This brings us to act two, where you iteratively evaluate a design or concept to see whether it addresses the issues.

Act two: conflict

Act two is all about digging deeper into the problems that you identified in act one. This typically involves conducting directional research, such as usability tests, where you evaluate a potential solution (such as a design) to see if it addresses the issues you identified. The issues could include unmet needs or problems with a flow or process that’s tripping users up. More issues will come up in the process, much like in act two of a movie. It’s here that you learn more about the characters as they grow and develop through this act.

According to Jakob Nielsen, usability tests should typically include five participants; he found that this number of users can usually identify most of the problems: “As you add more and more users, you learn less and less because you will keep seeing the same things again and again… After the fifth user, you are wasting your time by observing the same findings repeatedly but not learning much new.”

There are parallels with storytelling here too; if you try to tell a story with too many characters, the plot may get lost. Having fewer participants means that each user’s struggles will be more memorable and easier to relay to other stakeholders when presenting the research. This can help convey the issues that need to be addressed while also highlighting the value of doing the research in the first place.
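The diminishing returns that Nielsen describes can be made concrete with a small sketch. It assumes the model usually attributed to Nielsen and Landauer, in which the share of problems found by n participants is 1 - (1 - L)^n. The default value L = 0.31 is the average discovery rate they reported; it is not a figure from this article, and your own product may behave differently. The function name and parameters are hypothetical, chosen only for illustration.

```python
def share_of_problems_found(participants: int, discovery_rate: float = 0.31) -> float:
    """Expected share of usability problems seen at least once.

    Assumption: each participant independently reveals any given problem
    with probability `discovery_rate` (0.31 is the commonly cited average,
    not a value taken from this article).
    """
    if participants < 0:
        raise ValueError("participants must be zero or more")
    if not 0.0 <= discovery_rate <= 1.0:
        raise ValueError("discovery_rate must be between 0 and 1")
    # A problem stays hidden only if every participant misses it.
    return 1.0 - (1.0 - discovery_rate) ** participants


if __name__ == "__main__":
    previous = 0.0
    for n in range(1, 11):
        found = share_of_problems_found(n)
        # The gain column shows how little each extra participant adds.
        print(f"{n:2d} participants: {found:6.1%} found (+{found - previous:.1%})")
        previous = found
```

Under these assumptions, five participants surface roughly 84% of the problems, and each additional participant adds less than the one before. That is the same curve as a story with too many characters: past a certain point, more voices add little to the plot.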

Researchers have run usability tests in person for decades, but you can also conduct them remotely using tools like Microsoft Teams, Zoom, or other teleconferencing software. This approach has become increasingly popular since the beginning of the pandemic, and it works well. You can think of in-person usability tests as like going to a play and remote sessions as more like watching a movie. There are advantages and disadvantages to each. In-person usability research is a much richer experience. Stakeholders can experience the sessions with other stakeholders. You also get real-time reactions, including surprise, agreement, disagreement, and discussions about what they’re seeing. Much like going to a play, where audiences get to take in the stage, the costumes, the lighting, and the actors’ interactions, in-person research lets you see users up close, including their body language, how they interact with the moderator, and how the scene is set up.

If in-person usability testing is like watching a play that is staged and controlled, then conducting usability testing in the field is like immersive theater, where any two sessions may be very different from one another. You can take usability testing into the field by creating a replica of the space where users interact with the product and then conducting your research there. Or you can meet users at their location to conduct your research. With either option, you get to see how things work in context, things come up that wouldn’t have in a lab environment, and conversations can shift in entirely different directions. As researchers, you have less control over how these sessions go, but this can sometimes help you understand users even better. Meeting users where they are can provide clues to the external forces that could be affecting how they use your product. In-person usability tests add a level of detail that is frequently absent from remote usability tests.

That’s not to say that the “movies” (remote sessions) aren’t a good option. Remote sessions can reach a wider audience. They allow a lot more stakeholders to be involved in the research and to see what’s going on. And they open the doors to a much wider geographical pool of users. But with any remote session, there is the potential for wasted time if participants can’t log in or get their microphone working.

The benefit of usability testing, whether remote or in person, is that you get to see real users interact with the designs in real time, and you can ask them questions to understand their thought processes and their grasp of the solution. This can help you not only identify problems but also glean why they’re problems in the first place. You can also test hypotheses and gauge whether your thinking is correct. By the end of the sessions, you’ll have a much clearer picture of how usable the designs are and whether they work for their intended purposes. Act two is the heart of the story, where the excitement is, but there can be surprises too. This is equally true of usability tests. Often, participants will say unexpected things that change the way you look at things, and these twists in the story can move things in new directions.

Unfortunately, user research is sometimes seen as expendable. And too often, usability testing is the only research process that some stakeholders think they ever need. In fact, if the designs that you’re evaluating in the usability test aren’t grounded in a solid understanding of your users (foundational research), there’s not much to be gained by doing usability testing in the first place. That’s because you’re narrowing the focus of what you’re getting feedback on without understanding the users’ needs. As a result, there’s no way of knowing whether the designs might solve a problem that users have. It’s only feedback on a particular design in the context of a usability test.

On the other hand, if you only do foundational research, while you might have set out to solve the right problem, you won’t know whether the thing that you’re building will actually solve that. This demonstrates the value of conducting both directional and foundational research.

In act two, stakeholders will—hopefully—get to watch the story unfold in the user sessions, which creates the conflict and tension in the current design by surfacing their highs and lows. And in turn, this can encourage stakeholders to take action on the issues raised.

Act three: resolution

While the first two acts are about understanding the background and the tensions that can propel stakeholders into action, the third act is about resolving the problems raised in the first two. While it’s important to have an audience for the first two acts, it’s crucial that they stick around for the final act. That means the whole product team, including developers, UX practitioners, business analysts, delivery managers, product managers, and any other stakeholders who have a say in the next steps. It allows the whole team to hear users’ feedback together, ask questions, and discuss what’s possible within the project’s constraints. And it lets the UX research and design teams clarify, suggest alternatives, or give more context behind their decisions. That way, you can get everyone on the same page and get agreement on the way forward.

This act is mostly told in voiceover with some audience participation. The researcher is the narrator, who paints a picture of the issues and what the future of the product could look like given the things that the team has learned. They give the stakeholders their recommendations and their guidance on creating this vision.

Nancy Duarte in the Harvard Business Review offers an approach to structuring presentations that follow a persuasive story. The most effective presenters “set up a conflict that needs to be resolved,” using the same techniques as great storytellers, Duarte writes. “That tension helps them persuade the audience to adopt a new mindset or behave differently.”

This type of structure aligns well with research results, and particularly results from usability tests. It provides evidence for “what is” (the problems that you’ve identified) and “what could be” (your recommendations on how to address them). And so on and so forth.

You can reinforce your recommendations with examples of things that competitors are doing that could address these issues or with examples where competitors are gaining an edge. Or they can be visual, like quick mockups of how a new design could solve a problem. These can help generate conversation and momentum. And this continues until the end of the session, when you wrap everything up by summarizing the main issues and suggesting a way forward. This is the part where you reiterate the main themes or problems and what they mean for the product: the denouement of the story. This stage gives stakeholders the next steps and, hopefully, the momentum to take those steps!

While we are nearly at the end of this story, let’s reflect on the idea that user research is storytelling. The three-act structure of user research contains all the components for a good story:

- Act one: You meet the protagonists (the users) and the antagonists (the problems affecting users). The plot begins here. In act one, researchers might use methods including contextual inquiry, ethnography, diary studies, surveys, and analytics. The output of these methods can include personas, empathy maps, user journeys, and analytics dashboards.

- Act two: Next, there’s character development. The protagonists encounter problems and difficulties, which they must overcome, and there is conflict and tension. In act two, researchers might use methods including usability testing, competitive benchmarking, and heuristic evaluation. The output of these can include usability findings reports, UX strategy documents, usability guidelines, and best practices.

- Act three: The protagonists triumph and you see what a better future looks like. Researchers may use techniques like presentation decks, storytelling, and digital media in act three. The output of these can be: presentation decks, video clips, audio clips, and pictures.

The researcher has multiple roles: they are the storyteller, the director, and the producer. The participants have a small role, but they are significant characters (in the research). And the stakeholders are the audience. But the most important thing is to get the story right and to use storytelling to tell users’ stories through research. By the end, the stakeholders should walk away with a purpose and an eagerness to resolve the product’s ills.

So the next time that you’re planning research with clients or you’re speaking to stakeholders about research that you’ve done, think about how you can weave in some storytelling. User research is ultimately a win-win situation for everyone, and all you need to do is pique stakeholders’ interest in how the story ends.